The Cyber Resilience Act (CRA) introduces a strict 24-hour deadline for reporting actively exploited vulnerabilities. This isn’t just guidance; it’s a legal obligation under Article 14 that transforms product security into a race against the clock the moment you learn a flaw is being actively exploited.

Decoding The CRA’s 24-Hour Reporting Mandate

The Cyber Resilience Act fundamentally rewrites the rules for manufacturers of products with digital elements sold in the EU. The era of discretionary vulnerability disclosure is over. In its place, the CRA imposes a legally binding framework prioritising speed and transparency, especially when a vulnerability is being used by attackers in the wild.

At the heart of this new reality is the 24-hour reporting deadline. This rule applies with laser focus to ‘actively exploited’ vulnerabilities—flaws that have graduated from a theoretical risk to a live attack vector. The second you have reliable evidence of an exploit, the clock starts ticking.

When The Clock Starts Ticking

Figuring out when you are officially “aware” is absolutely critical. This isn’t limited to your internal security team discovering an issue. Awareness can be triggered from several directions:

- Internal Detection: Your own monitoring systems or Security Operations Centre (SOC) spots an intrusion that takes advantage of a product flaw. For instance, your SIEM flags multiple authentication failures followed by a successful, but unauthorised, privilege escalation on your connected medical device’s backend server.

- Public Reports: A credible security researcher or news outlet publishes a proof-of-concept or report showing a vulnerability is being used in attacks. A practical example would be a well-known cybersecurity blog posting evidence that a flaw in your smart lock’s firmware is being used by a botnet.

- Partner Notification: A customer or supply chain partner informs you that their systems were breached through your product. For example, a major retail chain using your smart POS terminals reports that they’ve experienced a data breach traced back to a vulnerability in your device.

This demanding deadline isn’t arbitrary. Its purpose is to stand up a rapid, EU-wide response network. By compelling quick notification to the EU’s cybersecurity agency, ENISA, and the relevant national Computer Security Incident Response Teams (CSIRTs), the CRA aims to contain threats before they escalate into widespread damage. A crucial first step in preparing for this is understanding your breach notification timeline.

The CRA Reporting Timeline In Action

Let’s walk through a scenario. It’s September 11, 2026, and a Spanish manufacturer of smart home devices suddenly detects a critical firmware vulnerability. Worse, it’s being actively exploited by cybercriminals to gain unauthorised access to user data across thousands of units in the EU.

Under the CRA, they have just 24 hours to report this to their national CSIRT and to ENISA. This isn’t a hypothetical exercise: the reporting duty laid out in Article 14 becomes a core legal obligation on that exact date.

The initial 24-hour alert is just the starting pistol. The CRA mandates a multi-stage reporting process designed to give authorities progressively more detail as your own investigation develops.

To help you visualise the cadence, here’s a quick breakdown of what authorities expect and when.

CRA Reporting Timeline at a Glance

This table summarises the mandatory reporting deadlines for actively exploited vulnerabilities under Article 14. It’s designed to give you a clear, at-a-glance view of your obligations once the clock starts.

| Deadline | Required Action | Key Information to Include |
|---|---|---|
| Within 24 hours of becoming aware | Early Warning | Initial alert about the actively exploited vulnerability. Name of manufacturer, product affected, nature of the vulnerability. |
| Within 72 hours of becoming aware | Vulnerability Notification | A more detailed update with severity assessment (e.g., CVSS score), potential impact, and any available mitigation advice. |
| Within 14 days of a corrective or mitigating measure being available | Final Report | Comprehensive analysis including root cause, full mitigation details, and steps taken to prevent recurrence. |

This multi-phase approach acknowledges a core reality of incident response: you rarely have all the answers on day one. It ensures authorities get immediate, actionable information, followed by deeper context as it becomes available.
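To make the cadence concrete, here is a minimal Python sketch that turns a recorded awareness timestamp into the latest submission time for each stage. The function name, dictionary keys, and the `final_window_start` parameter are illustrative assumptions, not part of any official tooling. By default the 14-day window is counted from awareness, which is the most conservative reading; pass a later start point if your legal team counts it from another event, such as a fix becoming available.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def reporting_deadlines(aware_at: datetime,
                        final_window_start: Optional[datetime] = None) -> dict:
    """Return the latest submission time for each reporting stage.

    aware_at: when the manufacturer became aware of active exploitation.
    final_window_start: hypothetical override for where the 14-day window
        begins; defaults to aware_at (the earliest, safest deadline).
    """
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware; record it in UTC")
    start = final_window_start or aware_at
    return {
        "early_warning": aware_at + timedelta(hours=24),
        "vulnerability_notification": aware_at + timedelta(hours=72),
        "final_report": start + timedelta(days=14),
    }


# Example: awareness logged on the morning the obligation starts to apply.
aware = datetime(2026, 9, 11, 8, 30, tzinfo=timezone.utc)
for stage, due in reporting_deadlines(aware).items():
    print(f"{stage}: {due.isoformat()}")
```

Storing the awareness timestamp in UTC avoids arguments later about which time zone the 24 hours were measured in.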

For a deeper dive into which of your products fall under these rules, check out our guide on Cyber Resilience Act applicability. Building the processes to meet this timeline is no longer a “nice-to-have”—it’s a foundational requirement for EU market access.

Your First-Hour Playbook for Detection and Triage

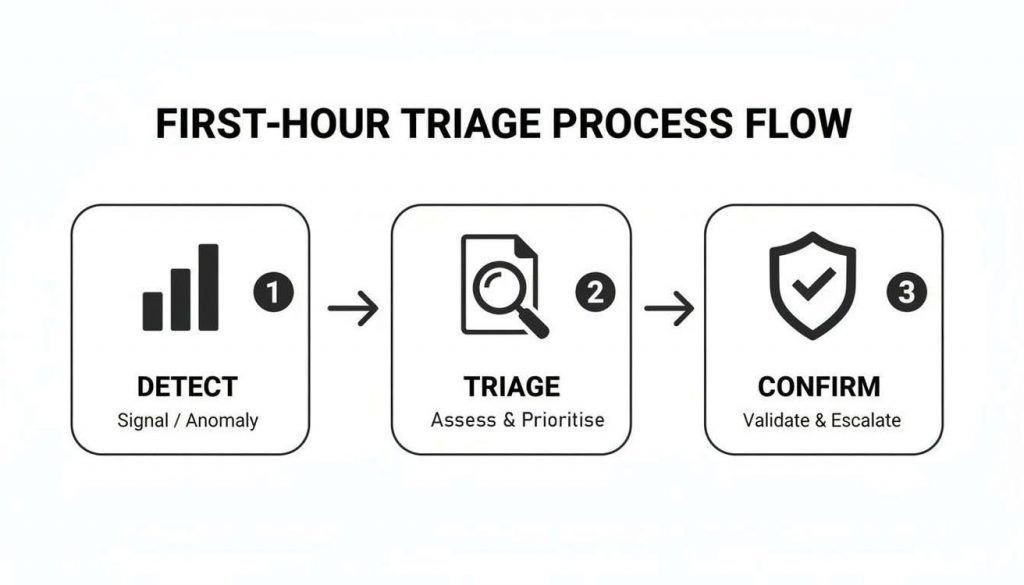

Your ability to meet the CRA’s tight 24-hour reporting deadline for an exploited vulnerability comes down to what happens in the first sixty minutes. That first hour isn’t for planning or debate; it’s for pure execution. Only a well-rehearsed playbook can get you from initial detection to a defensible reporting decision within that critical timeframe.

It all starts with clear internal triggers. These aren’t just vague alerts but specific, pre-defined events that kick off your “CRA Incident Clock” automatically. Leaving it to guesswork is a sure-fire way to miss the deadline and risk non-compliance.

Establishing Your Incident Triggers

You need a mix of automated and manual signals that immediately escalate a potential security event. Think of them as tripwires for your rapid response team. The goal is to shrink the time from signal to awareness down to mere minutes.

Here are a few practical examples of what these triggers look like in the real world:

- Anomalous API Calls: Imagine your monitoring system flags a sudden, sustained spike in failed login attempts against your connected thermostat’s API. A pre-set rule could automatically treat any increase of more than 50% within a 10-minute window as a critical incident.

- Threat Intelligence Correlation: A feed like CISA’s Known Exploited Vulnerabilities (KEV) catalogue adds a new vulnerability. An automated script should instantly check this against your Software Bill of Materials (SBOM) to see if a library in your product is affected. A match instantly creates a high-priority ticket for your security team.

- High-Fidelity Alerts: You get an alert from your Intrusion Detection System (IDS). But this isn’t just a potential probe; the system confirms a successful exploit by detecting command-and-control (C2) traffic coming from one of your deployed IoT sensors. This type of alert would be pre-classified as an automatic trigger for your CRA response plan.
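Two of the triggers above lend themselves to simple automation. The sketch below is a rough illustration only: the function names are made up, the spike rule assumes you compare one 10-minute window against the previous one, and the KEV check naively matches on product name. The `cveID` and `product` keys mirror fields in CISA’s published KEV JSON feed, but the SBOM structure and the sample entries (including the placeholder CVE number) are hypothetical.

```python
from typing import Iterable


def is_login_failure_spike(previous_window: int, current_window: int,
                           threshold_pct: float = 50.0) -> bool:
    """True when failures in the current window exceed the previous
    window's count by more than threshold_pct percent."""
    if previous_window == 0:
        # No baseline to compare against: flag any failures for review.
        return current_window > 0
    increase_pct = (current_window - previous_window) / previous_window * 100
    return increase_pct > threshold_pct


def kev_matches(kev_entries: Iterable[dict],
                sbom_components: Iterable[dict]) -> list:
    """Return (cveID, component name, version) for every KEV entry whose
    product name equals an SBOM component name, ignoring case."""
    by_name = {}
    for component in sbom_components:
        by_name.setdefault(component["name"].lower(), []).append(component)
    hits = []
    for entry in kev_entries:
        for component in by_name.get(entry["product"].lower(), []):
            hits.append((entry["cveID"], component["name"], component["version"]))
    return hits


# Hypothetical data for illustration only.
kev = [{"cveID": "CVE-0000-0000", "product": "ExampleLib"}]
sbom = [{"name": "examplelib", "version": "2.4.1"},
        {"name": "otherlib", "version": "1.0.0"}]

print(is_login_failure_spike(previous_window=100, current_window=160))
print(kev_matches(kev, sbom))
```

Name matching will produce both false positives and misses. A production check would more likely compare CPE or package URL identifiers and affected version ranges, but the principle of an automated match opening a ticket is the same.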

The moment a trigger is hit, your rapid response team needs to assemble. This shouldn’t be a last-minute scramble to figure out who to call. Roles must be pre-assigned so everyone knows exactly what to do the second an alert fires.

Confirming an Active Exploit

The single most important call you’ll make in this first hour is confirming whether a vulnerability is truly “actively exploited.” The CRA is specific here: you need reliable evidence of malicious use in the wild. A theoretical proof-of-concept isn’t enough to start the clock.

This is where your team must connect the dots between different data sources to build a solid, evidence-based case.

Let’s say a security researcher publishes a blog post detailing a new remote code execution (RCE) vulnerability in a popular open-source library used in your smart factory controllers. Your clock doesn’t start quite yet. But your team immediately goes on the hunt, correlating the public report with telemetry data from customer sites. They quickly find anomalous outbound network connections from several controllers to an IP address known to be used by a specific threat actor.

This is your moment of confirmation. A public vulnerability has just been linked to real-world, unauthorised activity impacting your products. Your 24-hour reporting clock has officially begun, and your internal documentation must capture this precise decision point.
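The correlation step in that scenario can be sketched in a few lines of Python. This is an assumption-laden illustration rather than a definitive detection method: the function, the `ExploitConfirmation` record, and the device names are invented here, and a single match against a threat-intelligence list would normally be one input to an analyst’s judgement, not proof on its own. What the sketch does show is capturing the decision timestamp alongside the evidence.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class ExploitConfirmation:
    confirmed_at: datetime   # candidate start of the 24-hour clock
    device_ids: tuple        # devices seen contacting a known-bad address
    matched_ips: tuple       # the threat-intel addresses that were hit


def confirm_active_exploit(connections: Iterable[Tuple[str, str]],
                           known_bad_ips: Iterable[str],
                           now: Optional[datetime] = None
                           ) -> Optional[ExploitConfirmation]:
    """connections is an iterable of (device_id, destination_ip) pairs
    taken from telemetry. Returns None when nothing matches."""
    bad = set(known_bad_ips)
    hits = [(dev, ip) for dev, ip in connections if ip in bad]
    if not hits:
        return None
    return ExploitConfirmation(
        confirmed_at=now or datetime.now(timezone.utc),
        device_ids=tuple(sorted({dev for dev, _ in hits})),
        matched_ips=tuple(sorted({ip for _, ip in hits})),
    )


# Hypothetical telemetry using documentation-range IP addresses.
telemetry = [("ctrl-01", "203.0.113.10"),
             ("ctrl-02", "198.51.100.55"),
             ("ctrl-03", "198.51.100.55")]
print(confirm_active_exploit(telemetry, {"198.51.100.55"}))
```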

To properly triage the severity, most teams rely on frameworks like the Common Vulnerability Scoring System (CVSS). It provides a standardised, numerical score based on factors like exploitability and impact, which is invaluable for prioritisation.

For example, a vulnerability that is easy to exploit over the network and gives an attacker complete control of a device would receive a high CVSS score (e.g., 9.8 Critical), making it an immediate priority. This score gives you a clear rating of severity, helping you prioritise your response and accurately describe the vulnerability to the authorities. For a deeper dive into setting up the necessary systems for this level of visibility, our guide on CRA logging and monitoring requirements provides essential guidance.
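If your tooling only gives you the numeric score, the qualitative label used in reports can be derived from it. The short sketch below follows the qualitative severity rating scale published in the CVSS v3.x specification; the function name is just an illustrative choice.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score (0.0 to 10.0, one decimal place)
    to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score <= 3.9:
        return "Low"
    if score <= 6.9:
        return "Medium"
    if score <= 8.9:
        return "High"
    return "Critical"


print(cvss_severity(9.8))
```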

Ultimately, your first-hour playbook must be a repeatable, evidence-driven process. The objective is to move from the initial signal to confirmed exploitation with a clear audit trail. This ensures your 24-hour CRA notification is not only on time but also accurate and able to stand up to regulatory scrutiny.

Crafting Your Initial 24-Hour ENISA Notification

The first report you fire off to ENISA and the national CSIRT is a big deal. It sets the tone for the whole incident. Meeting the CRA’s 24-hour reporting deadline for an exploited vulnerability comes down to having a clear, fast, and precise process. This first notification isn’t a full technical deep-dive; think of it as a critical early warning.

Your job here is to give the authorities just enough information to grasp the situation. You’re reporting the “what,” not the “how.” The last thing you want is to give away technical details that could help other threat actors.

The workflow to aim for in that first critical hour moves from a raw signal to a confident decision to report. You detect an anomaly, triage its importance, and confirm it’s an active exploit. This is the core loop that feeds directly into your notification process.

What to Include in Your First Report

Keep your 24-hour report short and factual. Stick to the absolute essentials required under Article 14. Now is not the time to overshare sensitive technical details; doing so just creates more risk.

Here’s what you absolutely must include:

- Manufacturer Identification: Your company’s legal name and contact information.

- Product Identification: The specific product name, model, and the version(s) affected. Be precise. “SmartLock Pro v2.1 firmware” is good; “our smart locks” is not.

- Nature of the Vulnerability: A high-level description of the flaw itself. Focus on the impact, not the exploit mechanics.

For example, instead of getting into the weeds about a specific buffer overflow, you’d simply state: “A remote code execution (RCE) vulnerability allowing an unauthenticated attacker to take control of the device.” That gives authorities all the context they need without handing attackers a blueprint.

The Right Channel for Reporting

The CRA wants to avoid a communication mess, so it mandates a single, centralised system. All your reports must go through the ENISA-managed Single Reporting Platform (SRP). From there, the platform will route your notification to the right national CSIRT coordinator, based on where your company has its main establishment in the EU.

The SRP is meant to be the single source of truth for your reporting. Getting your team familiar with its interface and requirements before an incident is non-negotiable. Don’t wait for a crisis to log in for the first time.

While the process sounds simple, trying to navigate a new portal when the clock is ticking is a recipe for disaster. This is exactly where a good compliance platform comes in. Tools like Regulus can pre-populate reports with verified product and manufacturer details, saving you precious minutes and cutting down the chance of human error under pressure.

An Example of a Clear Notification

Let’s say you manufacture a line of connected security cameras and your team just confirmed an active exploit. Here’s what the core information for your initial ENISA report could look like:

| Field | Example Entry |
|---|---|
| Manufacturer | SecureView Solutions S.L. |
| Product & Version | SecureView Cam 4K, Firmware v1.3.5 |
| Nature of Vulnerability | An authentication bypass vulnerability allowing unauthorised access to the live video stream. |
| Mitigation Status | We are developing a patch. No immediate user-side mitigation is available at this time. |

This format is clean, simple, and delivers exactly what’s needed. It says who you are, what product is at risk, and what the threat is in plain language. Notice there’s no deep technical jargon or speculation about the attacker. This level of precision is perfect for your initial report. You can find more on the full scope of your duties in our overview of CRA reporting obligations under Article 14.
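One way to avoid fumbling these fields under pressure is to hold them in a small, pre-validated structure. The sketch below is a hypothetical internal representation in Python: the class and field names are assumptions for illustration and do not reflect the Single Reporting Platform’s actual schema, which you should check once it is published.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class EarlyWarning:
    manufacturer: str
    product: str
    affected_versions: list = field(default_factory=list)
    nature_of_vulnerability: str = ""
    mitigation_status: str = ""  # optional in this sketch

    def missing_fields(self) -> list:
        """Names of the essential fields that are still empty."""
        missing = []
        if not self.manufacturer.strip():
            missing.append("manufacturer")
        if not self.product.strip():
            missing.append("product")
        if not self.affected_versions:
            missing.append("affected_versions")
        if not self.nature_of_vulnerability.strip():
            missing.append("nature_of_vulnerability")
        return missing


report = EarlyWarning(
    manufacturer="SecureView Solutions S.L.",
    product="SecureView Cam 4K",
    affected_versions=["Firmware v1.3.5"],
    nature_of_vulnerability=("An authentication bypass vulnerability allowing "
                             "unauthorised access to the live video stream."),
    mitigation_status="We are developing a patch.",
)

if not report.missing_fields():
    print(json.dumps(asdict(report), indent=2))
```

Running the completeness check before anyone opens the reporting portal keeps the submission step itself to a copy-and-confirm exercise.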

Your first notification is just the opening move in a longer conversation with regulators. By keeping it focused, factual, and timely, you project control and build a solid foundation for effective coordination in the days that follow.

Coordinating With CSIRTs and ENISA After Reporting

Hitting ‘submit’ on your initial report is just the beginning. The real work in handling an exploited vulnerability under the CRA starts with the follow-up dialogue. Effectively managing this coordination with national Computer Security Incident Response Teams (CSIRTs) and ENISA is what separates a smooth process from a compliance headache.

Think of your initial 24-hour notification as the cover page. The subsequent 72-hour and 14-day updates are the chapters that build out the full story, each adding critical detail. Your designated national CSIRT becomes your primary point of contact and coordination hub for this entire process.

Understanding Agency Roles and Expectations

Once your report hits the Single Reporting Platform (SRP), it’s routed directly to your national CSIRT. Their job is to analyse the threat on the ground, coordinate with other EU member states if the impact is wider, and assess the risk to the entire EU ecosystem. ENISA operates at a higher level, aggregating data from all over the Union to spot systemic risks and attack trends.

What they need from you is proactive, transparent communication. Don’t wait for them to chase you. Be ready for their follow-up questions, because they will come. For example, after your 24-hour report, a CSIRT might immediately ask for specific indicators of compromise (IoCs) you’ve found, such as malicious file hashes or attacker IP addresses, so they can warn other organisations. Having your technical and legal points of contact on standby is non-negotiable.

Managing Follow-Up Communications

The CRA itself sets the communication tempo. Your 72-hour notification must build on the initial alert, delivering a severity assessment and concrete details on any immediate mitigations you’ve put in place.

The 14-day final report is where you close the loop. This isn’t a quick summary; it must be a comprehensive breakdown of the root cause, the final patch or corrective action, and the steps you’ve taken to prevent it from happening again. The only way to produce this under pressure is to keep a meticulous incident log from the moment you detect the issue.

Here’s how this coordination plays out in the real world:

- A manufacturer of industrial control systems (ICS) in Spain finds an exploited vulnerability in its PLCs. They file their 24-hour report with Spain’s national CSIRT, INCIBE, through the SRP.

- INCIBE immediately acknowledges the report and asks for any attacker IP addresses the manufacturer has observed. They then use this to warn critical infrastructure operators across the country.

- By the 72-hour mark, the manufacturer provides a detailed update, including a CVSS score of 9.8 and temporary firewall rules customers can implement to block the attack.

- Over the next week, the manufacturer and INCIBE are in regular contact. The company provides status updates on its patch development, demonstrating clear due diligence.

- At the 14-day deadline, they submit the final report, which details the firmware patch and confirms how it has been deployed to affected customers.

This collaborative approach turns a compliance burden into a strategic partnership. Your CSIRT isn’t just an auditor; they are a powerful ally in containing the threat and protecting the broader digital ecosystem.

The numbers show just how urgent this is. In 2025, Spain’s INCIBE logged 22,400 vulnerabilities in digital products. A staggering 41% were actively exploited within just one week of discovery, contributing to attacks that cost Spanish businesses €1.8 billion. The CRA’s reporting structure is designed to break this cycle, but it hinges on tight collaboration between manufacturers and authorities. You can learn more about the policy driving these changes in research from the Center for Cybersecurity Policy.

Exceptions for Delaying Public Disclosure

The CRA draws a sharp line between reporting to authorities and disclosing to the public. You must always meet the 24-hour reporting deadline for your CSIRT and ENISA. There are no exceptions.

However, the regulation provides a very narrow window for delaying the public announcement of a vulnerability. You might be permitted to delay telling the world if:

- A patch is imminent: For instance, if your patch will be ready for deployment in 12 hours, your CSIRT may agree to a coordinated disclosure where the patch and the public advisory are released simultaneously. This prevents giving attackers a head start.

- An active law enforcement investigation is underway: A national authority might ask you to hold off on a public notice to avoid tipping off attackers they are actively tracking.

These exceptions are rare and must be coordinated directly with your CSIRT. You cannot make this call on your own. The default position is always transparency. A well-defined process is essential, and our guide on CRA vulnerability handling can help you build that operational framework.

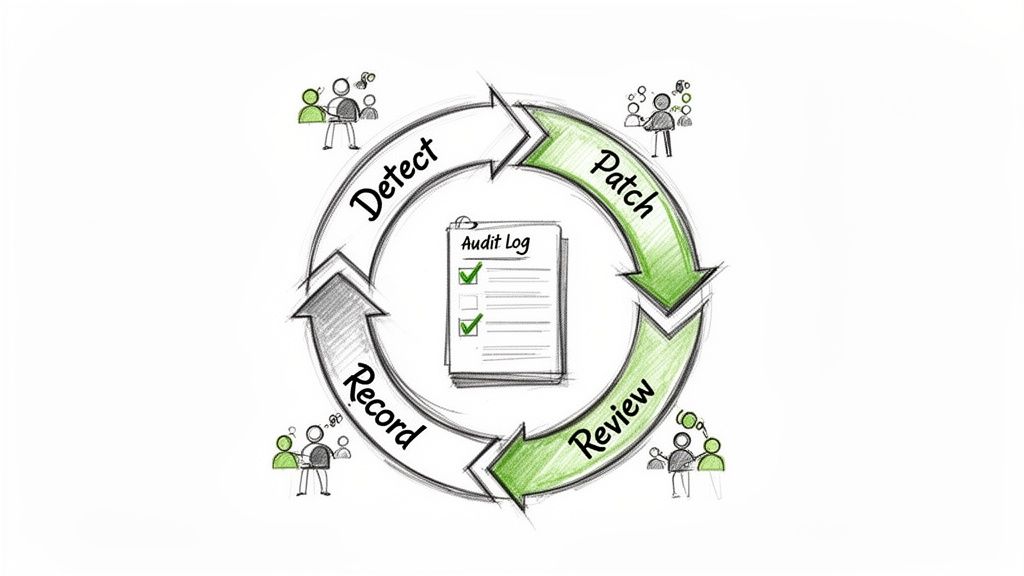

Meeting the CRA’s 24-hour exploited vulnerability reporting deadline is the immediate fire-fight. But true cyber resilience is built long before an incident happens and is refined long after it’s closed. Compliance doesn’t end with the final report to ENISA. It simply marks the transition into a continuous cycle of monitoring, learning, and improvement.

This is what the CRA’s post-market surveillance obligations are all about.

Treating compliance as an ongoing process transforms a legal burden into a strategic advantage. It demonstrates a level of security maturity that partners and customers notice, proving your commitment goes beyond just ticking a box. This is precisely the kind of proactive security culture the Cyber Resilience Act was designed to foster.

Your Audit Trail Is Your Lifeline

Once an incident is resolved, your focus must immediately pivot to documentation. Market surveillance authorities can and will audit your processes, and a comprehensive audit trail is your single most important line of defence. The CRA’s vulnerability handling requirements (Annex I, Part II) and its technical documentation duties mean you need detailed records to prove your diligence, from the first alert to the final patch.

Think of it as building a complete case file for every single vulnerability. This isn’t about just archiving a few emails; it’s about creating structured, chronological evidence that tells a clear and defensible story of your response.

To make this concrete, imagine a vulnerability was found in your smart thermostat firmware. Your audit trail should meticulously capture:

- Initial Detection: Timestamped logs from your security tools showing the anomalous traffic that first triggered the alert. For example:

  `[2026-10-01 14:32:15 UTC] SIEM Alert ID 98765: Anomalous outbound connection from thermostat_ID_123 to known C2 server 198.51.100.55.`

- Triage Notes: A record of your team’s decision-making, including the CVSS score assigned (e.g., 9.8 Critical) and the specific evidence used to confirm active exploitation.

- Reporting Records: Copies of the 24-hour, 72-hour, and 14-day reports submitted to the authorities via the ENISA platform.

- Coordination Logs: All correspondence with the national CSIRT, including their requests for information and your team’s responses.

- Patch Development: Code commits in your Git repository tagged with the vulnerability ID, peer review notes, and QA test results related to the security patch.

- Customer Communication: A copy of the security advisory you published and records showing how it was distributed to affected users.

A robust audit trail does more than just satisfy regulators. It becomes an invaluable internal resource for post-mortems, helping you pinpoint process gaps and strengthen your response capabilities for the next inevitable incident.
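A chronological, structured trail is easier to defend if each entry is also tamper-evident. The following Python sketch shows one possible approach, chaining entries with SHA-256 hashes. It is an illustration under assumptions: the category names, field names, and in-memory list are invented here, and the CRA does not prescribe any particular log format.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical categories mirroring the list above.
CATEGORIES = {"detection", "triage", "reporting", "coordination",
              "patch", "customer_communication"}


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(trail: list, category: str, summary: str,
                  timestamp: datetime) -> dict:
    """Append an entry that commits to the hash of the previous entry."""
    if category not in CATEGORIES:
        raise ValueError(f"unknown category: {category}")
    if timestamp.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    record = {
        "timestamp": timestamp.astimezone(timezone.utc).isoformat(),
        "category": category,
        "summary": summary,
        "prev_hash": trail[-1]["hash"] if trail else None,
    }
    record["hash"] = _digest(record)  # computed before "hash" is added
    trail.append(record)
    return record


def verify_trail(trail: list) -> bool:
    """True if no entry was altered, removed, or reordered."""
    prev = None
    for record in trail:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


trail = []
append_record(trail, "detection", "SIEM Alert ID 98765: outbound C2 connection",
              datetime(2026, 10, 1, 14, 32, 15, tzinfo=timezone.utc))
append_record(trail, "triage", "CVSS 9.8 assigned; active exploitation confirmed",
              datetime(2026, 10, 1, 15, 5, tzinfo=timezone.utc))
print(verify_trail(trail))
```

In practice you would persist these entries to write-once storage rather than a Python list, but the chaining idea carries over unchanged.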

To make sure your organisation can consistently meet reporting deadlines and maintain this level of documentation, it’s vital to have a solid corporate compliance program in place. This framework is the foundation upon which your audit trails and response plans are built.

A checklist is the best way to ensure you capture everything needed for a compliance audit. Here are the essential records you should be collecting for every incident.

CRA Incident Record-Keeping Checklist

| Record Category | Evidence to Collect | Purpose |
|---|---|---|
| Detection & Triage | SIEM/IDS alerts, raw logs, vulnerability scan reports, CVSS scoring worksheets, records of team decisions. | Proving the vulnerability was identified and assessed according to a documented process. |
| Official Reporting | Copies of all submitted reports (24-hour, 72-hour, 14-day), submission confirmation receipts from ENISA. | Demonstrating timely and complete reporting to regulatory bodies. |
| Authority Coordination | All emails, meeting notes, and formal communications with national CSIRTs or other market authorities. | Showing cooperation and responsiveness during the incident lifecycle. |
| Remediation & Patching | Code commits, pull requests, test plans, QA results, secure coding review sign-offs for the patch. | Documenting that the vulnerability was effectively and securely remediated. |
| User Communication | Security advisories, email distribution lists, website update records, customer support ticket logs. | Providing evidence that affected users were notified as required. |
| Post-Incident Review | Post-mortem report, meeting minutes, list of corrective actions and assigned owners. | Proving that lessons learned are being systematically integrated back into your processes. |

Maintaining these records diligently for each incident creates a powerful repository of evidence that will make any future audit a smooth, fact-based review rather than a scramble for proof.

Turning Lessons into Action

The most valuable output of any security incident is the lesson it teaches you. A genuine culture of continuous compliance means systematically feeding these hard-won lessons back into your Secure Development Lifecycle (SDLC). This is how you stop making the same mistakes twice.

A formal post-incident review is the best forum for this. Gather the response team and ask direct, honest questions:

- Could we have detected this sooner? If so, how?

- Did our triage process work exactly as intended? Where were the friction points?

- Were there any bottlenecks or delays in our reporting workflow?

- How can we make patching faster and more efficient for this product line?

The answers must lead to concrete, assigned actions. For example, if you discover a specific type of coding error (like an unchecked input field causing a buffer overflow) led to the vulnerability, the immediate action item is to update your static analysis (SAST) tools to automatically flag that pattern in all future code commits. This integrates the lesson directly into your daily development process, making your products inherently more secure from day one.

In Spain, where over 4,500 IoT vendors operate according to INCIBE’s registry, many of them targeting smart manufacturing, the CRA’s 24-hour rule, effective from 11 September 2026, is a game-changer. It could prevent disasters like the 2024 Mirai botnet revival that compromised an estimated 2.5 million Spanish devices. The pressure is on, as a recent survey shows 85% of product teams in Spain are worried about managing CRA alongside NIS-2 and DORA.

By embedding these learnings, you create a virtuous cycle. Each incident, while painful, ultimately makes your organisation stronger, more efficient, and better prepared. This proactive stance is the true essence of building a durable, compliance-driven culture that will stand the test of time.

Common Questions About CRA Reporting

Getting to grips with the Cyber Resilience Act’s reporting rules can throw up a lot of questions. Here are some quick, practical answers to the most common queries we hear about the CRA’s 24-hour exploited vulnerability reporting mandate.

What Exactly Triggers the 24-Hour Reporting Clock?

The clock starts ticking the moment a manufacturer becomes ‘aware’ that a vulnerability in their product is being ‘actively exploited’. Both ‘aware’ and ‘actively exploited’ are critical terms that demand careful interpretation on the ground.

‘Awareness’ isn’t just about your internal security team discovering a flaw. It can be triggered by a whole host of external sources. A credible public report, a notification from a security researcher detailing real-world attacks, or even telemetry from your own products showing patterns of compromise—all of these can make you ‘aware’.

‘Actively exploited’ is the other half of the puzzle. This means there is reliable evidence that malicious actors are actually using the vulnerability in real attacks. A theoretical proof-of-concept (PoC) or a private disclosure from a researcher alone does not start the clock.

Here’s a real-world scenario: A security researcher privately discloses a vulnerability in your smart camera’s firmware. At this point, the clock doesn’t start. But a week later, they publish a blog post with proof that a botnet is now using that exact same exploit to hijack devices. Your 24-hour reporting obligation begins the moment your team learns of that public post.

How Does This Reporting Overlap With NIS2 and GDPR?

The CRA’s reporting duty is separate but can absolutely overlap with other major EU regulations like the NIS2 Directive and GDPR. It’s crucial to understand these are distinct legal obligations. A single incident might very well trigger duties under all three at the same time.

- NIS2 Directive: Imagine your product is a networking switch used in a hospital. If an exploited vulnerability in your switch disrupts hospital operations, the hospital (as an ‘essential entity’) has a 24-hour NIS2 reporting duty. Your CRA report will be critical evidence for their own notification.

- GDPR: If the attack on your smart camera product results in a personal data breach (e.g., attackers access and download stored video footage of users), you have a separate 72-hour notification duty to your data protection authority under GDPR.

While the CRA’s proposed Single Reporting Platform aims to simplify some of this by funnelling information to relevant authorities, the legal responsibilities themselves remain separate. Your incident response plan must assess every event against all applicable regulations.
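A simple way to make sure no regime is forgotten is to encode the assessment as a checklist function in your incident tooling. The Python sketch below is a deliberately simplified illustration: the flag names and the `Obligation` record are assumptions, it covers only the three regimes and initial deadlines mentioned above, and it is no substitute for legal review of a real incident.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligation:
    regime: str
    deadline_hours: int
    owed_by: str


def applicable_obligations(actively_exploited: bool,
                           personal_data_breach: bool,
                           disrupts_nis2_entity: bool) -> list:
    """Return the initial notification duties an incident may trigger."""
    duties = []
    if actively_exploited:
        duties.append(Obligation("CRA early warning", 24, "manufacturer"))
    if disrupts_nis2_entity:
        duties.append(Obligation("NIS2 early warning", 24,
                                 "affected essential or important entity"))
    if personal_data_breach:
        duties.append(Obligation("GDPR breach notification", 72,
                                 "data controller"))
    return duties


# The smart camera case: exploited flaw plus stolen video footage.
for duty in applicable_obligations(actively_exploited=True,
                                   personal_data_breach=True,
                                   disrupts_nis2_entity=False):
    print(f"{duty.regime}: {duty.deadline_hours}h, owed by {duty.owed_by}")
```

Recording who owes each duty matters because, as in the hospital example, the NIS2 report may belong to your customer while the CRA report belongs to you.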

What Are the Penalties for Missing the 24-Hour Deadline?

The financial penalties for non-compliance are substantial. They are explicitly designed to be a powerful deterrent, and market surveillance authorities will not take missed deadlines lightly.

Failing to report an actively exploited vulnerability on time is a breach of the Article 14 reporting obligations, which sit in the CRA’s highest penalty tier: administrative fines of up to €15 million or 2.5% of your company’s total worldwide annual turnover from the preceding financial year, whichever figure is higher.

For a practical perspective, if your company has a global turnover of €600 million, 2.5% of turnover comes to €15 million, the same as the flat figure. For any larger company the percentage sets the ceiling: at €800 million in turnover, the maximum fine rises to €20 million.

Other violations under the Cyber Resilience Act fall into lower tiers, with fines of up to €10 million or 2% of global turnover, or up to €5 million or 1% for supplying incorrect or misleading information to authorities. These penalties elevate timely and accurate reporting from a simple IT task to a critical business function.
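The “whichever is higher” rule behind these figures is a simple maximum, and it can be worth running against your own turnover. In the Python sketch below the function name is an illustrative assumption, and the flat amount and percentage are passed in as parameters rather than hard-coded, so you can plug in whichever tier applies. Percentages are expressed in basis points (250 means 2.5%) to keep the arithmetic in whole euros.

```python
def max_fine_eur(turnover_eur: int, flat_amount_eur: int,
                 pct_basis_points: int) -> int:
    """Upper bound of the fine: the higher of the flat amount and the
    given percentage of total worldwide annual turnover."""
    pct_amount = turnover_eur * pct_basis_points // 10_000
    return max(flat_amount_eur, pct_amount)


turnover = 600_000_000  # hypothetical global turnover in euros

# Evaluate the two tiers quoted in this section for the same company.
print(max_fine_eur(turnover, 10_000_000, 200))  # EUR 10M or 2% tier
print(max_fine_eur(turnover, 15_000_000, 250))  # EUR 15M or 2.5% tier
```

For smaller companies the flat amount dominates: at €100 million in turnover, both percentages fall below their respective flat figures.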

Do I Report Vulnerabilities Found by Ethical Hackers?

Not automatically, no. The CRA’s 24-hour reporting rule applies specifically and exclusively to vulnerabilities that are ‘actively exploited’. A responsible disclosure from an ethical hacker or a security researcher does not, by itself, meet this criterion.

When a researcher reports a flaw to you through a Coordinated Vulnerability Disclosure (CVD) policy, it is not yet considered exploited. This process is intentionally designed to give you the time needed to validate the issue, develop a patch, and coordinate a responsible disclosure with them. For example, a researcher finds a bug via your bug bounty program and submits a report. You have time to fix it. The 24-hour clock only starts if you later find evidence that malicious actors are using that same vulnerability in the wild.

Navigating the complexities of the CRA doesn’t have to be a burden. Regulus provides a clear, step-by-step roadmap to turn compliance requirements into an actionable plan. Gain clarity on applicability, generate tailored documentation, and build your vulnerability management process with confidence. Visit the Regulus platform to see how we can help you meet every deadline.