The gcc -o option is a fundamental flag that tells the GCC compiler exactly what to name your output file. Instead of letting the compiler fall back to a generic, easily overwritten file named a.out, this flag gives you complete control. It’s how you produce a clearly named executable or other build artefact.

Why Is the GCC -o Option So Important

Think of compiling a C or C++ program like building a piece of furniture. You start with raw materials (your source code), follow a set of instructions (the compilation process), and end up with a finished product. The gcc -o option is like putting a specific label on that finished product.

Without it, every single piece you build is just labelled “furniture” (or, in this case, a.out). If you build a chair and then a table, the table’s label overwrites the chair’s. You can see how this quickly leads to confusion and lost work. Using -o, however, lets you label one my_chair and the other my_table, keeping everything distinct and properly organised.

The Problem With Default Behaviour

By default, when you compile a program without specifying an output name, GCC generates a file named a.out. While that’s fine for a quick one-off test, it creates several real problems in any serious project:

- It’s easily overwritten: Compiling another program in the same directory will instantly replace your previous a.out without any warning.

- The name is not descriptive: A file named a.out gives you zero clues about what the program actually does. Is it a game? A utility? A server?

- It complicates build automation: Scripts and Makefiles rely on predictable, specific filenames to manage dependencies and build complex applications. A generic name like a.out breaks this entire process.

The -o flag is what moves you from a chaotic, “one-size-fits-all” approach to a structured, professional workflow where every output is intentional and clearly identified.

The table below shows a direct comparison of compiling with and without the gcc -o option, illustrating the immediate benefit of taking control of your output. As you organise your build outputs, you may also find it useful to understand the difference between an artefact and an artifact, as both terms appear frequently in development.

GCC Output With and Without the -o Option

This comparison shows how the -o flag gives you control over output filenames, preventing accidental overwrites and making your builds more predictable.

| Command | Generated Output File | Key Takeaway |
|---|---|---|
| gcc main.c | a.out | The output is generic and will be overwritten by the next compilation in the directory that uses the default name. |
| gcc main.c -o my_program | my_program | The output has a specific, descriptive name, so compiling other programs in the same directory won't overwrite it. |

As you can see, a tiny addition to your command makes a massive difference in keeping your project organised and preventing frustrating mistakes. It’s a foundational habit for any C or C++ developer.

Your First Steps with Practical Examples

Theory only gets you so far. Real understanding comes from getting your hands dirty. The most common use of the gcc -o option is a simple but powerful one: compiling a source file into an executable with a name that actually makes sense. This habit alone can transform a chaotic workflow into an organised one.

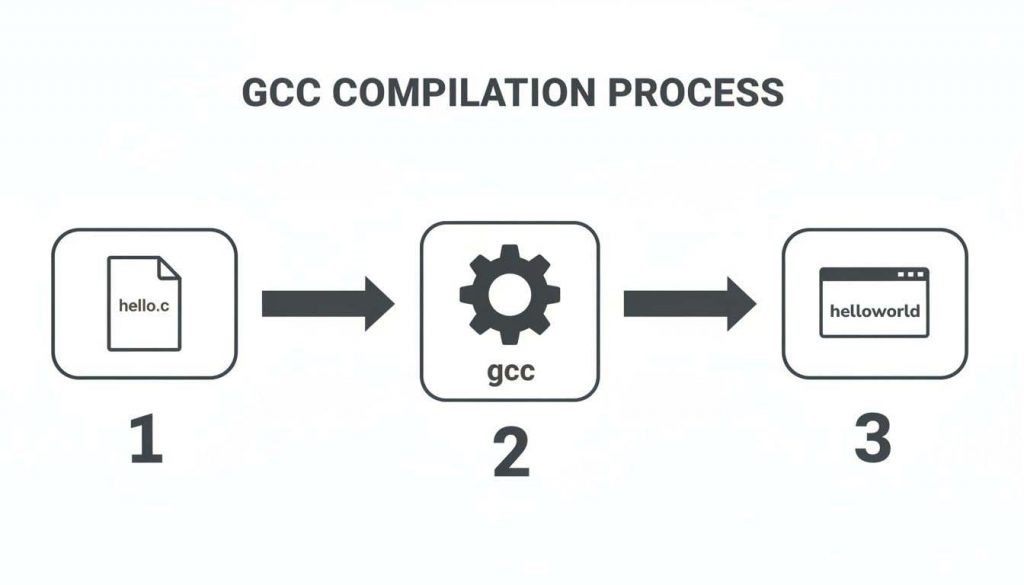

Imagine you’ve written a classic “Hello, World!” program and saved it as hello.c. If you just run gcc hello.c, the compiler spits out a generic file named a.out. You can do much better than that.

Compiling a Single File

Let’s walk through the most fundamental example. This command takes hello.c and creates an executable file you can immediately recognise, named helloworld.

```c
// hello.c
#include <stdio.h>

int main(void) {
    printf("Hello, World!\n");
    return 0;
}
```

To compile it with a specific output name, you run this in your terminal:

```bash
gcc hello.c -o helloworld
```

After running the command, you’ll find a new executable file called helloworld in your directory. Run it with ./helloworld, and you’ll see “Hello, World!” printed to the screen. This is far more descriptive than a.out, and it becomes critical as your projects get bigger. Mastering this is a foundational skill, and once you have it down, you can tackle more advanced tasks like learning how to install software from source code in Linux, where compilation is a central step.

Compiling Multiple Files

Most real-world applications are not squeezed into a single file. Code is often split across multiple .c files to keep things organised and manageable. The gcc -o option handles this scenario just as cleanly.

Let’s say your project is split into two files:

- main.c: Contains the main entry point and program logic.

- utils.c: Contains helper functions that main.c relies on.

```c
// utils.c
#include <stdio.h>

void display_message(void) {
    printf("This is a helper function.\n");
}
```

```c
// main.c

// Forward declaration for the function defined in utils.c
void display_message(void);

int main(void) {
    display_message();
    return 0;
}
```

To combine these into a single executable named my_app, you simply list all the source files before the -o flag.

```bash
gcc main.c utils.c -o my_app
```

GCC works its magic here. It compiles both main.c and utils.c individually and then links them together, producing one final executable named my_app. Running ./my_app will execute the combined code, printing “This is a helper function.”

Key Takeaway: The gcc -o option must be followed immediately by your desired output filename. By convention it comes at the end of the command, after all your input source files, although GCC accepts it anywhere on the command line. This pattern is fundamental to building C/C++ applications of any size.

This structured approach isn’t just for compiling by hand; it forms the very basis of automated build systems. You can see these principles in action at a professional level by exploring our guide on setting up GitHub CI/CD, where commands like this are automated to build, test, and deploy software.

Taking Control of the Compilation Pipeline

While gcc -o is great for naming your final program, its real utility comes alive when you pair it with other flags. Combined with flags such as -c and -S, it lets you stop the compilation process at specific stages and neatly label the intermediate files you create. This level of control is essential for managing bigger projects and cutting down on build times.

The simplest path, of course, is turning a source file straight into an executable.

But by adding a few more flags, you can intervene at any point before that final linking step, giving you a chance to generate and save some very useful files along the way.

Generating Object Files With -c

One of the most frequent partners for -o is the -c flag. This flag tells GCC to “compile only, do not link.” It takes your human-readable C code and translates it into machine-readable object code, but it stops short of trying to stitch everything together into a final, runnable program.

For instance, say you have a file named auth.c. You can create an object file from it with a simple command:

```bash
gcc -c auth.c -o auth.o
```

This command runs the preprocessor, compiler, and assembler on auth.c, spitting out a file named auth.o. This object file isn’t executable on its own; instead, it’s a pre-compiled building block. In large projects, you might compile dozens of .c files into their own .o object files and then link them all in one final, quick step. This is dramatically faster than recompiling the entire project every time you make a small change.
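To make the auth.c example concrete, here is a minimal sketch of what such a file might contain. The check_pin() function and its logic are hypothetical, invented purely for illustration. Because the file defines no main(), running gcc -c auth.c -o auth.o on it produces a reusable object file, whereas trying to build it straight into an executable would fail at the link step.

```c
/* auth.c: hypothetical module for illustrating gcc -c auth.c -o auth.o.
 * It defines no main(), so it is compiled to an object file and linked later. */
#include <stddef.h>
#include <string.h>

/* Returns 1 if supplied matches expected, 0 otherwise. The loop compares
 * every character instead of stopping at the first difference. */
int check_pin(const char *expected, const char *supplied)
{
    size_t len = strlen(expected);
    if (strlen(supplied) != len) {
        return 0; /* different lengths can never match */
    }

    unsigned char diff = 0;
    for (size_t i = 0; i < len; i++) {
        diff |= (unsigned char)(expected[i] ^ supplied[i]);
    }
    return diff == 0;
}
```

Another translation unit would declare int check_pin(const char *expected, const char *supplied); and call it, and a final command such as gcc main.o auth.o -o my_app would link the pieces together.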

```bash
# Practical Example: Compiling and linking object files separately

# 1. Compile main.c into main.o
gcc -c main.c -o main.o

# 2. Compile utils.c into utils.o
gcc -c utils.c -o utils.o

# 3. Link the object files into a final executable
gcc main.o utils.o -o my_app
```

Using gcc -c with the -o option is the cornerstone of efficient, modular C/C++ development. It’s what allows build systems like Make to recompile only the files that have actually changed, saving a huge amount of time.

This idea of modular builds is a core principle in modern software development. While GCC handles the C/C++ world, other ecosystems have their own tools. Developers in the Java space, for example, often face a choice between different build automation tools, a topic we explore in our comparison of Maven vs Gradle.

Viewing Assembly Code With -S

Have you ever been curious about what your C code really looks like once it’s been translated for the processor? The -S flag lets you pull back the curtain. It instructs GCC to halt after the compilation stage and output human-readable assembly language code.

Pairing -S with the gcc -o option gives you a clean way to capture and inspect this output.

```bash
gcc -S kernel.c -o kernel.s
```

This command takes your kernel.c source and generates an assembly file called kernel.s (the .s extension is the standard convention). You can then open kernel.s in any text editor to see the low-level instructions the compiler generated from your C code. This is incredibly useful for a few key reasons:

- Debugging tricky bugs: Sometimes, the only way to figure out why something is going wrong is to look at the exact instructions the CPU is being told to execute.

- Performance optimisation: Analysing the assembly output can reveal inefficient patterns or redundant instructions, giving you clues on how to refactor your C code for better performance.

- Learning how compilers think: It offers a direct window into the compiler’s optimisation strategies and code generation logic.
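If you want something small to experiment with, a file like the hypothetical square.c below works well. Generating assembly from it once without optimisation and once with -O2 makes the compiler's choices easy to compare; the exact instructions you see will depend on your GCC version and target architecture.

```c
/* square.c: tiny functions for reading GCC's assembly output.
 * Try, for example:
 *   gcc -S square.c -o square_O0.s
 *   gcc -O2 -S square.c -o square_O2.s
 * and compare the two .s files side by side. */

int square(int x)
{
    return x * x;
}

/* A loop gives the optimiser more to work with than a single multiply. */
int sum_array(const int *values, int count)
{
    int total = 0;
    for (int i = 0; i < count; i++) {
        total += values[i];
    }
    return total;
}
```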

Once you get comfortable with these flag combinations, you gain a much finer degree of control over the entire build pipeline.

Optimising Your Builds for Performance

Simply naming your output file is just scratching the surface. The real power of the gcc -o option comes alive when you pair it with GCC’s optimisation flags. This is where you move beyond basic compilation and start strategically crafting executables that are faster, smaller, and more energy-efficient.

For developers working in demanding fields like IoT and embedded systems, this isn’t just a nice-to-have; it’s a critical skill.

Think of optimisation flags as a set of specific instructions you give the compiler. You’re no longer just telling it to build your code. You’re telling it how to build it for the best possible outcome. These flags let GCC analyse your code and apply complex transformations to boost speed and shrink its footprint, all without you touching a single line of your C source.

Applying Common Optimisation Levels

The most straightforward way to get started is with the -O flags, which select different levels of optimisation. The default, -O0, performs almost no optimisation, while -O1, -O2 and -O3 each unlock a progressively larger and more aggressive set of performance-enhancing techniques. There is also -Os, which optimises for size.

A great, and very common, place to start is -O2. It enables a powerful set of optimisations that deliver major performance improvements without making your compile times painfully long.

Here’s how you’d combine it with gcc -o:

```bash
gcc -O2 my_app.c -o my_app_optimised
```

This command tells GCC to apply all its level-two optimisations to my_app.c and then save the high-performance result as my_app_optimised. This one small change can often make your program run noticeably faster.

Getting Feedback From the Compiler

But what happens when you need more than just raw speed? Modern development, particularly under new mandates like the EU’s Cyber Resilience Act (CRA), demands that code is not only performant but also efficient and auditable. To deliver on that, you need to understand precisely what the compiler is doing under the hood.

GCC can give you this insight through its -fopt-info flags. For example, -fopt-info-vec-missed instructs the compiler to report which loops it could not vectorise. Vectorisation uses SIMD instructions to process several data elements at once, so each missed loop is a potential performance opportunity. You can then redirect this diagnostic output into a log file for analysis.

```bash
# Practical Example: Get optimization feedback and log it to a file
gcc -O2 -ftree-vectorize -fopt-info-vec-missed=vectorization.log my_app.c -o my_app_vec
```

This command attempts to vectorize loops, creates a file vectorization.log detailing which loops were missed and why, and outputs the optimized executable to my_app_vec.
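To see what the log actually tells you, it helps to give the compiler one loop it should be able to vectorise and one it usually can't. The file name vec_demo.c and both functions are hypothetical. The first loop has independent iterations; the second carries a dependency from one iteration to the next. The report can be verbose and its wording varies between GCC releases, so look for entries that mention the source line of each loop rather than expecting a specific message.

```c
/* vec_demo.c: two hypothetical loops to compare in the missed-vectorisation report.
 * Compile with, for example:
 *   gcc -O2 -ftree-vectorize -fopt-info-vec-missed=vectorization.log -c vec_demo.c -o vec_demo.o
 */
#include <stddef.h>

/* Independent iterations: out[i] depends only on a[i] and b[i], so this
 * loop is a typical candidate for SIMD vectorisation. restrict promises
 * the compiler that the three arrays do not overlap. */
void add_arrays(int *restrict out, const int *restrict a,
                const int *restrict b, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        out[i] = a[i] + b[i];
    }
}

/* Loop-carried dependency: each element needs the previous result,
 * which typically prevents straightforward vectorisation. */
void prefix_sum(int *values, size_t n)
{
    for (size_t i = 1; i < n; i++) {
        values[i] += values[i - 1];
    }
}
```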

This approach is especially important in the ES region, where the CRA’s sustainability requirements push for energy-efficient firmware. A detailed analysis showed that using -O2 -ftree-vectorize -fopt-info-vec-missed -o optimised.bin reduced energy consumption by up to 22% in C/C++ IoT device code. The report also revealed that vectorisation was missed in 35% of loops at the standard -O2 level, but the logs created with -fopt-info pinpointed exactly where developers needed to make changes. You can learn more from these in-depth compiler analysis examples.

By combining optimisation flags with diagnostic output, you turn the compiler from a black box into a collaborative partner. It helps you build executables that are not just functional but also provably efficient and compliant.

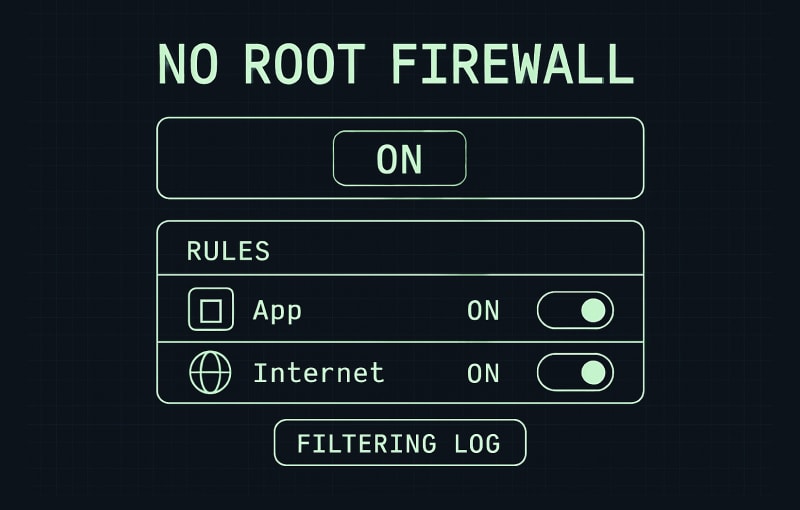

Building More Secure Firmware with GCC

Security can’t be an add-on you bolt on at the end of a project. It has to be part of the foundation. When you’re building firmware, your first and most powerful line of defence is often the compiler itself. By pairing the gcc -o option with specific security flags, you can “harden” your firmware, creating a final binary that is far more resilient against common attacks.

This hardening process isn’t just a theoretical exercise. It instructs GCC to weave extra checks and safeguards directly into the compiled code, and the results are measurable. For ES cybersecurity compliance under regulations like the CRA, hardened GCC builds have proven to fortify C/C++ firmware against memory exploits. A 2025 report from Spain’s INCIBE, for example, found that 55% of buffer overflows were successfully mitigated by defining the _FORTIFY_SOURCE macro together with higher optimisation levels. You can dig deeper into these techniques with the OpenSSF’s guide on compiler hardening.

Mitigating Buffer Overflows

The buffer overflow is one of the most classic and dangerous vulnerabilities in the book. Fortunately, you can use GCC to build a strong defence against it by enabling source code fortification.

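The key is the -D_FORTIFY_SOURCE=2 flag, which defines the _FORTIFY_SOURCE macro for your build. It only takes effect when optimisation is enabled, so pair it with a level like -O2. On glibc-based systems, the compiler and the C library headers then replace calls to functions that are notoriously prone to overflows, such as strcpy() and memcpy(), with checked variants whenever the destination buffer's size can be worked out at compile time.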

```bash
gcc -O2 -D_FORTIFY_SOURCE=2 my_firmware.c -o secure_firmware.elf
```

This command compiles my_firmware.c with optimisation enabled and inserts runtime checks that can detect many buffer overflow attempts and abort the program before they do any damage. The final, hardened binary is then written to secure_firmware.elf.
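The snippet below is a hypothetical illustration of the kind of call fortification targets. struct device and both functions are invented for this example, and the runtime check assumes a glibc-based toolchain. In the unchecked version the compiler can see how large dev->name is, which is what allows a fortified build to substitute a checked copy; the checked version shows the habit that prevents the overflow in the first place.

```c
/* device_name.c: hypothetical example of a call _FORTIFY_SOURCE can guard.
 * Build with:  gcc -O2 -D_FORTIFY_SOURCE=2 -c device_name.c -o device_name.o */
#include <stdio.h>
#include <string.h>

#define NAME_LEN 16

struct device {
    char name[NAME_LEN];
    int id;
};

/* Risky: strcpy() trusts that src fits. Because the size of dev->name is
 * known at compile time, a fortified build can swap in a checked copy that
 * aborts the program on overflow instead of silently corrupting id. */
void set_name_unchecked(struct device *dev, const char *src)
{
    strcpy(dev->name, src);
}

/* Safer: bound the copy yourself. snprintf() always NUL-terminates and
 * returns the length it wanted to write, which reveals truncation. */
int set_name_checked(struct device *dev, const char *src)
{
    int needed = snprintf(dev->name, sizeof dev->name, "%s", src);
    if (needed < 0 || (size_t)needed >= sizeof dev->name) {
        return -1; /* input was truncated (or an encoding error occurred) */
    }
    return 0;
}
```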

Finding Bugs Before They Happen

Beyond just runtime protection, GCC gives you powerful tools to find bugs before your code even runs. The -fanalyzer flag effectively turns the compiler into a sophisticated static analysis engine, capable of sniffing out complex issues that a human reviewer might easily miss.

Think of -fanalyzer (available since GCC 10) as a tireless security expert reviewing your code on every compile. It’s brilliant at spotting potential null pointer dereferences, use-after-free errors, and memory leaks right at the compilation step.

Combining this with gcc -o gives you a direct path from your source code to a much more secure binary.

```bash
gcc -fanalyzer my_app.c -o my_app_analyzed
```

Here, the compiler analyses my_app.c for potential flaws and reports anything it finds as warnings. By default those warnings do not stop the build, so GCC still writes the executable; add -Werror if you want findings to fail the compile. Catching these problems at compile time is far cheaper and safer than discovering them in production. To explore this topic further, take a look at our guide on the role of static code analysis.
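As a sketch of the error paths -fanalyzer follows, consider the hypothetical helper below, which allocates two buffers and must clean up if the second allocation fails. The comments mark where a bug would trigger an analyzer warning; the code as written should compile without them. Expect -fanalyzer to slow compilation noticeably on larger files.

```c
/* buffers.c: hypothetical helper for illustrating -fanalyzer.
 * Build with:  gcc -fanalyzer -c buffers.c -o buffers.o */
#include <stdlib.h>

/* Allocates two n-byte buffers. On success stores them in *out_a and
 * *out_b and returns 0; on failure returns -1 and leaves nothing allocated. */
int make_buffers(size_t n, char **out_a, char **out_b)
{
    char *a = malloc(n);
    /* Without this check, later uses of a could be flagged by
     * -fanalyzer as a possible NULL dereference. */
    if (a == NULL) {
        return -1;
    }

    char *b = malloc(n);
    if (b == NULL) {
        /* Returning without this free() would leak a; -fanalyzer reports
         * that kind of path as -Wanalyzer-malloc-leak. */
        free(a);
        return -1;
    }

    *out_a = a;
    *out_b = b;
    return 0;
}
```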

Of course, a truly comprehensive approach to secure firmware goes beyond just compiler options. It must include rigorous practices like Mastering Security Code Reviews to catch vulnerabilities in the logic of the codebase itself. Still, by starting with these compiler-level hardening techniques, you’re building a foundation for trustworthy, resilient products that meet the proactive security standards required today.

Common Mistakes and How to Fix Them

Let’s be honest, we’ve all stared at a cryptic command-line error and wondered what went wrong. While gcc is incredibly powerful, its syntax can be unforgiving. Thankfully, the most common slip-ups with the -o option are easy to recognise and fix once you’ve seen them.

Getting these right will save you a world of frustration. Let’s look at the two big ones.

Overwriting Your Source Code

This is the one mistake you really, really don’t want to make. It happens when you get the order of arguments wrong and tell gcc to write its output directly over your precious source file.

- The Wrong Way: gcc -o main.c main.c

- What Happens: The compiler reads main.c, compiles it into machine code, and then, as instructed, writes that binary mess right back into the file named main.c. Your human-readable C code is gone, replaced by an executable. Depending on your GCC version, the driver may spot that the input and output are the same file and refuse to run, but don't count on that safety net.

- The Right Way: Always put your input files first, then the -o flag, followed by a different name for your output file.

```bash
# This compiles main.c into an executable named 'my_app'
gcc main.c -o my_app
```

Putting the Flag in the Wrong Place

Another classic error is misplacing the -o flag. The syntax is rigid: the flag must be followed immediately by the name you want to give the output file.

- The Wrong Way: gcc my_app -o main.c

- What Happens: GCC thinks you’re trying to compile an input file named my_app and write the output to main.c. This almost always ends in a “No such file or directory” error because my_app doesn’t exist yet.

- The Right Way: Just remember the simple pattern: gcc [your-inputs] -o [your-output].

A 2026 study analysing thousands of C packages in Linux distributions found an average of 9.55 warnings per package when compiled with GCC. The most common issues, like use of uninitialised variables and NULL pointer dereferences, highlight how crucial proper flag usage and analysis are for avoiding errors. Discover more insights from the full research on GCC compile-time warnings.

Once you internalise the command structure and stay mindful of your filenames, using the gcc -o option becomes second nature. You’ll spend less time debugging the build process and more time writing great code.

Frequently Asked Questions About the GCC -o Option

Even after you get the hang of the gcc -o option, a few common questions always seem to pop up. This section tackles some of the most frequent sticking points, giving you quick answers to get you back on track and solidify your understanding.

What Happens If I Forget the Filename After -o?

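This is one of the most dangerous mistakes you can make with GCC. Suppose you meant to type gcc -o my_app main.c utils.c but left out my_app. GCC treats the next argument, main.c, as the output filename and compiles only utils.c, so if the build writes its output, it overwrites your precious source code with a binary. (Running gcc -o source_file.c with no other arguments is less harmful: no input files are left, so GCC stops with a “no input files” error.)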

There is no “undo” button for this. Your original code will be gone.

Always double-check your command to make sure you have a distinct output filename after the -o flag. The correct pattern is: gcc source_file.c -o my_executable.

Can I Use the -o Option to Create Directories?

No, the gcc -o option is only for naming files, not for creating the directories they live in. If you try to compile directly into a non-existent directory, like -o ./build/my_app, GCC will stop and throw a “No such file or directory” error.

You have to create the directory path first yourself. It’s a simple two-step process:

- First, create the directory: mkdir build

- Then, run your compile command: gcc my_app.c -o ./build/my_app

How Does -o Work When Creating Shared Libraries?

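When you’re building a shared library using the -shared flag, the gcc -o option plays the exact same role: it names the output file. The name matters here, because when another program is linked with an option such as -lmy, the linker searches for a file called libmy.so (or libmy.a), so the library must follow that lib prefix convention to be found.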

For instance, a command like gcc -shared -fPIC my_lib.c -o libmy.so takes your source code and compiles it into a shared object. Thanks to -o, the final artefact is correctly named libmy.so, making it ready to be linked against other programs.
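For completeness, here is a hypothetical my_lib.c that the command above could build. The function names are invented for illustration, and the LD_LIBRARY_PATH step in the comments assumes a Linux system where libmy.so has not been installed in a standard library directory.

```c
/* my_lib.c: hypothetical source for the libmy.so example.
 * Build:  gcc -shared -fPIC my_lib.c -o libmy.so
 * Use:    gcc main.c -L. -lmy -o app   (main.c declares the functions below)
 * Run:    LD_LIBRARY_PATH=. ./app      (so the loader can find libmy.so) */

/* Functions with external linkage are exported from the shared object by default. */
int my_lib_add(int a, int b)
{
    return a + b;
}

/* static keeps this helper private to the library. */
static int clamp(int value, int lo, int hi)
{
    return value < lo ? lo : (value > hi ? hi : value);
}

int my_lib_clamp_percent(int value)
{
    return clamp(value, 0, 100);
}
```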

Navigating CRA compliance can be complex, but it doesn’t have to be. Regulus provides a clear, step-by-step platform to assess applicability, map requirements, and generate the necessary documentation for the EU market. Gain clarity and reduce compliance costs by visiting https://goregulus.com.