To put it simply, shift to left is all about moving security and testing to the very beginning of the product development lifecycle, instead of treating them as an afterthought. If you picture the development process as a timeline from left to right, this strategy pulls critical checks from the far right (just before launch) all the way over to the far left (the design and requirements phase).

Understanding the Shift to Left Mindset

Think about building a skyscraper. Would you wait until the 50th floor is built before you inspect the foundation? Of course not. You’d test the architectural plans, materials, and designs long before a single drop of concrete is poured. A shift to left security strategy applies this exact same logic to building software and connected products.

Traditionally, security was the final gatekeeper. A product was designed, coded, and assembled, and only then would the security team get to test it. This “right-side” approach was a recipe for disaster, often revealing deep-seated flaws when it was far too late.

The result? Expensive redesigns, painful product recalls, or worse, damaging breaches after launch. Fixing a bug in production typically costs far more than fixing it on the blueprint. For example, a flaw in a payment API discovered during design might take two hours to fix. That same flaw discovered after launch could mean weeks of emergency patching and customer notifications, and possibly regulatory fines.

A Tale of Two Approaches

The old, reactive model made security a roadblock. The modern, shift to left approach makes it a guardrail. It’s fundamentally proactive, turning security from a final “no” into a continuous, collaborative process that’s baked in from day one. This isn’t just about new tools; it’s a profound cultural change.

This proactive mindset means you’re doing things like:

- Early Threat Modelling: Instead of just testing a finished login page, a team discusses the design and asks, “How could someone bypass our authentication? What if they try brute-force attacks?” This leads to building in rate-limiting and account lockout features from the start.

- Secure Coding Practices: Developers are trained to avoid common pitfalls. For instance, they learn to use parameterized queries to prevent SQL injection, rather than waiting for a penetration test to flag the vulnerability later.

- Automated Security in CI/CD: Every time a developer pushes new code, an automated scan checks for known vulnerabilities in open-source libraries. If a dangerous library is detected, the build fails automatically, preventing the flaw from ever reaching production.

Tools like static code analysis are a perfect fit here, giving developers immediate feedback within their existing workflow.
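To make the secure coding point concrete, here is a minimal sketch of a parameterized query using Python's built-in `sqlite3` module. The `users` table, the `find_user` helper, and the sample data are assumptions made up for illustration; your own data layer will look different.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    """Look up a user with a parameterized query.

    The `?` placeholder makes the driver treat `username` strictly as data,
    so attacker-supplied quotes cannot change the structure of the SQL.
    """
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?",
        (username,),
    )
    return cur.fetchone()


# Hypothetical in-memory table, used only to make the sketch runnable.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.execute("INSERT INTO users (username) VALUES (?)", ("alice",))

legit = find_user(conn, "alice")
injected = find_user(conn, "alice' OR '1'='1")

print(legit)     # the row for alice
print(injected)  # None: the injection string is matched literally and finds nothing
```

Had the query been built with string concatenation, the second call would have matched every row. With the placeholder, the classic injection payload is just an odd-looking username.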

The core principle is simple: it is far cheaper, faster, and more effective to prevent security flaws than it is to patch them later. By tackling security at the source, you build quality and compliance directly into your product’s DNA.

Let’s look at how dramatically different these two philosophies are in practice.

Traditional Security vs a Shift to Left Strategy

The table below contrasts the old, reactive security model with the proactive, integrated approach of shifting left. The differences in activities, costs, and compliance outcomes are stark.

| Lifecycle Stage | Traditional Security (Reactive) | Shift to Left Security (Proactive) |
|---|---|---|
| Requirements | Security is not considered. | Security requirements are defined alongside functional ones. For example: "User authentication must use multi-factor authentication (MFA)." |
| Design | Architecture is created without security input. | Threat modelling is performed; secure design principles are applied. For example: The team designs a system to encrypt all personally identifiable information (PII) by default. |
| Development | Developers code without security guidance or tools. | Developers use secure coding standards and static analysis tools. For example: An IDE plugin flags a potentially unsafe function call as the developer types. |
| Testing | A single penetration test is done just before launch. | Security testing is automated and continuous throughout the CI/CD pipeline. For example: A script automatically scans every new software build for dependency vulnerabilities. |
| Launch | Vulnerabilities are found, causing delays and rework. | The product is launched with a known, managed security posture. |
| Maintenance | Constant firefighting of post-launch vulnerabilities. | Proactive monitoring and a structured vulnerability management process. |

As you can see, shifting left isn’t just a minor tweak—it completely reorients how a product is built, leading to a much stronger, more resilient outcome.

Why This Matters for CRA Compliance

This approach isn’t just a good idea anymore; it’s a regulatory imperative. New regulations like the EU’s Cyber Resilience Act (CRA) mandate a secure-by-design philosophy.

The CRA effectively outlaws the old reactive model. It requires manufacturers to prove that security was a key consideration throughout the entire product lifecycle. Embracing a shift to left strategy is the most direct and effective path to meeting these tough new rules and ensuring your products can be legally sold in the EU market.

Why the EU Cyber Resilience Act Demands a Shift to Left

The EU’s Cyber Resilience Act (CRA) isn’t just another box to tick on a compliance list. It’s a fundamental change in how products with digital elements must be built and supported, forcing a shift to left approach and making the old “test security at the end” model completely obsolete.

At its core, the CRA writes the principles of ‘secure-by-design’ and ‘secure-by-default’ into law. This means manufacturers can no longer treat security as a final step before shipping. Instead, you need to prove that security was a central thought from the earliest design sketches all the way through the product’s entire life. Trying to bolt security on after development is not just bad practice—it’s a direct route to non-compliance.

The Painful Cost of Late-Stage Discovery

Imagine you’re about to ship a new smart thermostat. In a traditional workflow, your penetration testing team finds a critical vulnerability just one week before the launch date.

Suddenly, you’re in crisis mode. Fixing the bug requires a major code rewrite, which then triggers a full, time-consuming regression test cycle. The launch is pushed back by months, marketing campaigns are wasted, and your retail partners are furious. The cost, both in money and reputation, is huge.

Now, let’s replay that in a shift to left world.

During the initial design phase, a threat modelling session flags a potential weakness in how the device handles remote updates. Because you caught it early, your developers can design a more robust architecture from the ground up. The vulnerability never even makes it into the code, saving you countless hours and euros down the line.

This is the practical difference the CRA enforces. Finding a flaw on the drawing board is a cheap design change. Finding it after thousands of units have been built could trigger a catastrophic product recall, the loss of your CE marking, and a complete withdrawal from the EU market.

How Specific CRA Articles Mandate Early Action

Several key requirements in the CRA are almost impossible to meet unless you embed security and compliance work right from the start.

- Software Bill of Materials (SBOM): The CRA’s vulnerability handling requirements (Annex I, Part II) call for an SBOM in a commonly used, machine-readable format that covers at least the product’s top-level dependencies. Trying to create this accurately at the end of a project is a logistical nightmare. A shift to left approach builds the SBOM as you go, making it a natural part of the development process. For example, an automated tool adds a new open-source library to the SBOM file the moment a developer includes it in the project.

- Vulnerability Handling Processes: You must have a structured way to receive, manage, and fix vulnerabilities. Designing these workflows and disclosure policies after a product is already on the market is reactive and chaotic. Planning them early ensures they are solid and well-integrated. For example, you define a clear Service Level Agreement (SLA) for patching critical vulnerabilities (e.g., “patch within 14 days”) before the product ever launches.

- Ongoing Risk Assessments: The CRA isn’t a one-and-done check. It requires you to assess risk continuously throughout the product’s supported life. This is only possible if you have a deep, foundational understanding of the product’s architecture and components—knowledge that is best captured from day one.

The Cyber Resilience Act forces a crucial realisation: Compliance is not a document you create at the end of a project. It is the outcome of a secure process you follow from the very beginning.

This proactive approach is no longer a “nice-to-have”. Yet, recent data shows that a huge part of the industry is falling behind. In the European Union, a staggering 68% of companies are either unaware of the CRA or have only a surface-level understanding of its requirements. This highlights a critical gap in readiness and underscores the urgency for manufacturers to adopt a shift to left strategy immediately.

For a deeper dive into how this impacts your development workflow, you can learn more about building a CRA Secure Development Lifecycle. Ultimately, the CRA makes security the connective tissue of product development, and shifting left is the only viable path to market access.

Your Roadmap to Implementing Shift to Left Security

Moving to a shift to left philosophy is more than just a good intention—it needs a practical, actionable plan that weaves security into the very DNA of your product development lifecycle. This isn’t about adding more red tape for your teams. It’s about changing how everyone sees and handles security, turning it from a final gatekeeper into a continuous, shared effort from day one.

This roadmap breaks the theory down into real-world steps you can take at each phase of development. The goal is simple: make security everyone’s job and give each team member the mindset and tools to build resilience right into your products.

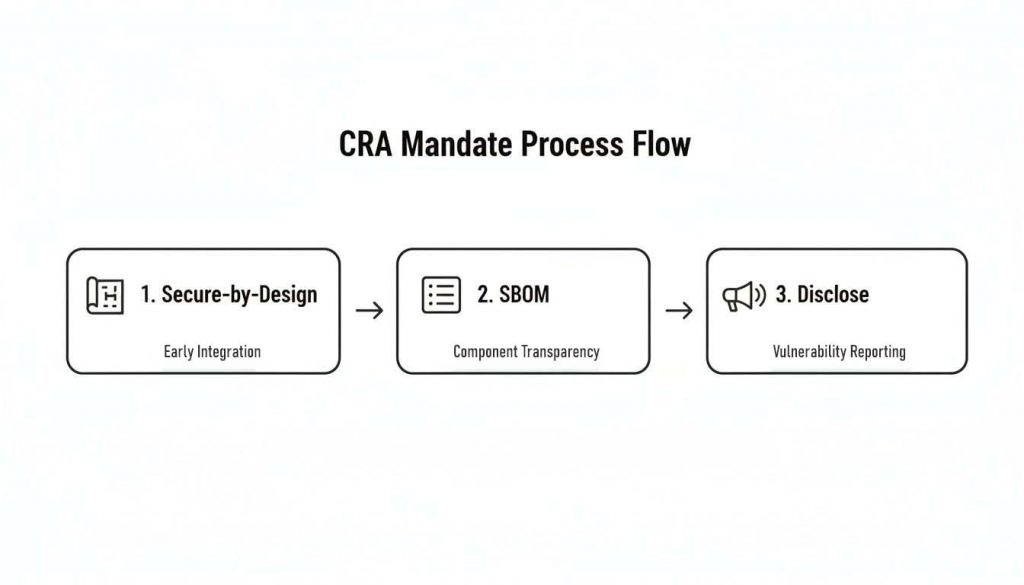

This visual shows how core CRA mandates like Secure-by-Design, creating an SBOM, and setting up a vulnerability disclosure process are all part of a successful shift-left journey.

The flow from design principles to component transparency and, finally, to public disclosure shows a logical path from internal security posture to external accountability—a central idea in shifting left.

Stage 1: Redefining the Requirements Phase

The journey starts long before anyone writes a single line of code. During the requirements phase, security has to be treated as a core feature, just as important as functionality or user experience. This means getting specific and defining measurable security goals instead of relying on vague statements.

For instance, a weak goal is “the product must be secure.” A much stronger security requirement would be “all user data must be encrypted at rest using AES-256.” This gives developers a clear, unmistakable target.

Treating security this way from the very beginning ensures it’s a foundational pillar of the product’s architecture, not an afterthought squeezed in at the end.

Practical Steps for This Phase:

- Define Security Requirements Explicitly: Right alongside your functional requirements, list out all security and privacy needs. This includes authentication standards, data handling policies, and compliance duties. A practical example: For a new IoT camera, a requirement could be “The device must not use default passwords and must force a password change on first use.”

- Establish Compliance Baselines: Figure out which regulations apply, like the CRA, and map those requirements directly to product features. For example, determine if your product falls under a specific CRA class and document what that means for you.

- Involve Security Experts Early: Don’t wait. Bring security architects into your initial planning meetings. Their input at this stage is priceless for spotting potential traps and shaping a secure foundation.
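One way to keep a requirement like the IoT camera example honest is to write it down as a check that can run in your test suite. The sketch below is an assumption-heavy illustration: `CameraAccount`, `change_password`, `can_stream`, and the list of factory defaults are all hypothetical names invented here, not part of any real device SDK.

```python
from dataclasses import dataclass

# Hypothetical factory defaults, listed only for this illustration.
FACTORY_DEFAULT_PASSWORDS = {"admin", "password", "12345678"}


@dataclass
class CameraAccount:
    password: str
    # Set at the factory and cleared only after the owner picks a new password.
    must_change_password: bool = True


def change_password(account: CameraAccount, new_password: str) -> None:
    """Reject default passwords and record that the forced change happened."""
    if new_password in FACTORY_DEFAULT_PASSWORDS:
        raise ValueError("default passwords are not allowed")
    account.password = new_password
    account.must_change_password = False


def can_stream(account: CameraAccount) -> bool:
    """The requirement: no streaming until the first-use password change is done."""
    return (
        not account.must_change_password
        and account.password not in FACTORY_DEFAULT_PASSWORDS
    )


account = CameraAccount(password="admin")
blocked_before_change = not can_stream(account)
change_password(account, "c0rrect-horse-battery")
allowed_after_change = can_stream(account)
```

Written this way, the requirement stops being a sentence in a document and becomes something the build can verify on every commit.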

Stage 2: Integrating Security into the Design Phase

Once your requirements are locked in, the focus moves to design. This is the perfect time to think like an attacker and find potential threats before they get built into the product. The best tool for this is threat modelling.

Threat modelling is a structured brainstorming session where your team identifies potential security threats, pinpoints vulnerabilities, and prioritises how to fix them. It turns security into a proactive design exercise instead of a reactive clean-up job.

A common mistake is treating threat modelling as a one-off, super-technical activity for the security team. In a true shift-left culture, it’s a collaborative workshop. Developers, product managers, and security experts all get in a room and ask, “What could go wrong here?”

Imagine a smart lock manufacturer. During a threat modelling session, the team might realise an attacker could intercept the Bluetooth signal to unlock the door. By finding this risk during the design phase, they can build in end-to-end encryption, neutralising the threat before it ever becomes a real vulnerability.

This is far cheaper and more effective than finding the same flaw in a finished product.
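The output of a threat modelling workshop can be captured in something as simple as a scored list. Here is a minimal sketch, assuming a basic likelihood-times-impact score on a 1 to 5 scale. The `Threat` class, the scoring scheme, and every entry except the Bluetooth interception example are assumptions for illustration, not a formal methodology.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain), a team estimate
    impact: int      # 1 (negligible) to 5 (severe), a team estimate
    mitigation: str

    @property
    def risk(self) -> int:
        # Simple likelihood x impact score; real programmes may use richer models.
        return self.likelihood * self.impact


def prioritise(threats):
    """Return threats ordered from highest to lowest risk score."""
    return sorted(threats, key=lambda t: t.risk, reverse=True)


# Hypothetical workshop output for the smart lock example.
register = [
    Threat("Debug port left enabled on shipped units", 2, 4,
           "Disable the port in production firmware"),
    Threat("Attacker intercepts the Bluetooth unlock signal", 4, 5,
           "End-to-end encryption of unlock commands"),
    Threat("PIN guessed through repeated attempts", 3, 4,
           "Rate limiting and temporary lockout"),
]

ranked = prioritise(register)
for threat in ranked:
    print(f"{threat.risk:>2}  {threat.description} -> {threat.mitigation}")
```

The numbers matter less than the conversation that produces them. The ranked list simply gives the team a shared, recorded view of what to design against first.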

Stage 3: Empowering Developers with Secure Coding Tools

When development kicks off, the aim is to help developers find and fix vulnerabilities in real-time as they code. This is where automated tools become absolutely essential. Static Application Security Testing (SAST) tools are a cornerstone of the shift to left movement in this phase.

Think of SAST tools as a spellchecker for security flaws. They analyse source code without actually running it, flagging common vulnerabilities like SQL injection, buffer overflows, and insecure configurations right inside the developer’s coding environment (IDE).

This creates an immediate feedback loop. A developer writes some code, the SAST tool flags a potential issue within seconds, and they can fix it on the spot. This simple step avoids the long, expensive delays that occur when vulnerabilities are only found months later during a formal penetration test.
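To show what "analysing source code without running it" means, here is a toy static check built on Python's standard `ast` module. It is a deliberately tiny sketch, not a substitute for a real SAST product: the `RISKY_CALLS` set and the `find_risky_calls` helper are assumptions made up for this example, and it only catches direct calls by name.

```python
import ast

# Illustrative rule set: built-ins that execute arbitrary strings as code.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str):
    """Parse source text and return (line, function) pairs for risky calls.

    The code is only parsed into a syntax tree; it is never executed.
    """
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


sample = '''
user_input = input()
result = eval(user_input)
print(result)
'''

findings = find_risky_calls(sample)
for line, name in findings:
    print(f"line {line}: call to {name}() on untrusted input is risky")
```

Real tools track data flow across files and frameworks, but the feedback loop is the same: the finding points at an exact line while the developer still has the code open.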

Stage 4: Automating Security in Testing and CI/CD

The final stage before deployment is all about integrating security checks into your automated build and deployment pipelines. This is where Continuous Integration/Continuous Deployment (CI/CD) becomes a powerful ally for security. By automating these tests, you guarantee that no code gets to production without passing a baseline of security checks.

This automated safety net catches issues that might have slipped through during development and enforces security policies consistently. It’s the final quality gate that confirms the security measures you put in place earlier are actually working.

Key Automated Checks for Your Pipeline:

- Software Composition Analysis (SCA): These tools scan your project’s dependencies for known vulnerabilities in open-source libraries. For example, if your JavaScript project uses a version of lodash with a known security hole, the SCA tool will fail the build and alert the developer to upgrade. This is non-negotiable.

- Dynamic Application Security Testing (DAST): Unlike SAST, DAST tools test the running application to find vulnerabilities that only show up at runtime. Automating DAST scans against a staging environment adds another critical layer of defence.

- Container and Infrastructure Scanning: If you’re using containers or Infrastructure as Code (IaC), automated scans can check for misconfigurations and vulnerabilities in these components before they ever get deployed. For instance, a scan might flag a Dockerfile that runs as a root user, which is a significant security risk.
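The SCA check described above boils down to a gate that compares declared dependencies against advisory data and returns a failing exit code. Here is a minimal sketch under some loud assumptions: the `ADVISORIES` entry is placeholder data rather than a real vulnerability feed, versions are assumed to be purely numeric and dot-separated, and `gate` is a hypothetical helper name.

```python
def parse_version(version: str) -> tuple:
    """Turn '4.17.20' into (4, 17, 20). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


# Placeholder advisory data: package name -> first patched version.
# A real pipeline would pull this from a maintained vulnerability database.
ADVISORIES = {"lodash": "4.17.21"}


def vulnerable_dependencies(dependencies: dict) -> list:
    """Return a description of every dependency older than its patched version."""
    findings = []
    for name, version in sorted(dependencies.items()):
        patched = ADVISORIES.get(name)
        if patched and parse_version(version) < parse_version(patched):
            findings.append(f"{name} {version} (upgrade to >= {patched})")
    return findings


def gate(dependencies: dict) -> int:
    """Return the exit code for the CI job: non-zero fails the build."""
    findings = vulnerable_dependencies(dependencies)
    for finding in findings:
        print("VULNERABLE:", finding)
    return 1 if findings else 0


failing = gate({"lodash": "4.17.15", "express": "4.18.2"})
passing = gate({"lodash": "4.17.21", "express": "4.18.2"})
```

In a pipeline, the script would end by passing the result of `gate` to `sys.exit`, so a vulnerable dependency stops the build instead of producing a report that nobody reads.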

Developing a clear strategy and a detailed roadmap is crucial for implementing any significant operational change. This kind of structured approach transforms shift to left from an abstract idea into a repeatable, measurable process that builds a culture of security from the ground up.

Integrating Vulnerability Management from Day One

A true shift to left strategy completely changes the game for vulnerability management. It stops being a reactive, panic-driven fire drill and becomes a proactive, continuous process that’s baked into your product’s lifecycle from the very start. This isn’t just good practice; it’s a core demand of the Cyber Resilience Act (CRA).

The old way was waiting for a security researcher to report a flaw, then scrambling to figure out if your products were even affected. That approach is chaotic, slow, and frankly, non-compliant with modern regulations. Shifting left means building the capability to manage vulnerabilities from day one.

This proactive stance begins with knowing exactly what’s inside your products. After all, you can’t protect what you don’t know you have.

Building Your SBOM During Development

The cornerstone of modern vulnerability management is the Software Bill of Materials (SBOM). Think of an SBOM as a detailed, nested inventory of every single software component, library, and dependency that makes up your product. Traditionally, creating one was a painful, manual task done right before launch—if it was done at all.

In a shift to left model, the SBOM is generated automatically and continuously throughout the development process. It becomes a living document, not a static snapshot you file away.

By integrating SBOM generation directly into your CI/CD pipeline, you ensure it is always accurate and up-to-date. This isn’t just about ticking a compliance box; it’s about having immediate, actionable intelligence the moment a new vulnerability is discovered in the wild.
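As a rough picture of what an SBOM step in a pipeline produces, here is a minimal sketch that turns a dependency list into a CycloneDX-style JSON document. It uses only a small subset of CycloneDX fields and is not validated against the official schema. The `build_sbom` helper, the product name, and the dependency list are assumptions for illustration. In practice you would rely on an established SBOM generator for your ecosystem.

```python
import json


def build_sbom(product: str, version: str, dependencies: list) -> dict:
    """Build a simplified CycloneDX-style SBOM.

    `dependencies` is a list of (ecosystem, name, version) tuples,
    for example ("npm", "lodash", "4.17.21").
    """
    components = [
        {
            "type": "library",
            "name": name,
            "version": dep_version,
            # Package URL: a common way to identify a component unambiguously.
            "purl": f"pkg:{ecosystem}/{name}@{dep_version}",
        }
        for ecosystem, name, dep_version in dependencies
    ]
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "metadata": {
            "component": {"type": "application", "name": product, "version": version}
        },
        "components": components,
    }


# Hypothetical product and dependencies.
sbom = build_sbom(
    "thermostat-firmware",
    "1.4.0",
    [("npm", "lodash", "4.17.21"), ("npm", "express", "4.18.2")],
)
sbom_json = json.dumps(sbom, indent=2)
print(sbom_json)
```

Running a step like this on every build, and storing the output alongside the release artefact, is what keeps the SBOM a living document rather than a one-off export.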

This approach means that when a new threat emerges, you don’t have to launch a frantic, all-hands-on-deck investigation. You already have the answer. You can instantly query your SBOM database to see precisely which products, if any, are affected.

A Practical Example of Proactive Response

Let’s walk through a real-world scenario. A critical vulnerability, like the infamous Log4Shell, is discovered in a widely used open-source logging library.

- The Old Way (Reactive): Panic. Engineering teams drop everything to manually dig through old codebases and server logs, trying to figure out if and where the vulnerable library was ever used. It could take days or even weeks to get a clear picture, all while your products and customers remain exposed.

- The Shift to Left Way (Proactive): The security team gets the alert. They run a single query against their central repository of up-to-date SBOMs. Within minutes, they have a definitive list of every single product that contains the vulnerable version of that library.

The difference is night and day. A proactive approach turns a potential crisis into a structured, manageable workflow.
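The "single query" in the proactive scenario can be sketched in a few lines. The example below assumes SBOMs are stored as CycloneDX-style dictionaries and that versions are purely numeric and dot-separated. The product names, component versions, and the `affected_products` helper are hypothetical, and the fixed-version threshold is an input chosen by the caller for illustration.

```python
def parse_version(version: str) -> tuple:
    """Turn '2.14.1' into (2, 14, 1). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


def affected_products(sboms: list, component: str, fixed_version: str) -> list:
    """Return (product, version) pairs that ship `component` below `fixed_version`."""
    hits = []
    for sbom in sboms:
        product = sbom["metadata"]["component"]["name"]
        for comp in sbom.get("components", []):
            if comp["name"] != component:
                continue
            if parse_version(comp["version"]) < parse_version(fixed_version):
                hits.append((product, comp["version"]))
    return sorted(hits)


def make_sbom(product: str, components: list) -> dict:
    """Helper that builds a tiny SBOM for the demo data below."""
    return {
        "metadata": {"component": {"name": product}},
        "components": [{"name": n, "version": v} for n, v in components],
    }


# Hypothetical SBOM repository covering three products.
repository = [
    make_sbom("thermostat-gateway", [("log4j-core", "2.14.1")]),
    make_sbom("camera-hub", [("log4j-core", "2.17.1")]),
    make_sbom("smart-lock-app", [("okhttp", "4.12.0")]),
]

exposed = affected_products(repository, "log4j-core", "2.15.0")
print(exposed)
```

The query itself is trivial. The hard part, and the part shifting left solves, is having accurate SBOMs for every shipped release to run it against.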

Establishing a Clear Vulnerability Handling Process

Once you’ve identified an affected product using your SBOM, you need a clear, documented process for handling the issue. This is another area where the CRA is quite specific. Your process should include several key components:

- A Coordinated Vulnerability Disclosure (CVD) Policy: This is your public-facing policy that tells security researchers how to report vulnerabilities to you securely and responsibly. It builds trust with the security community and makes sure you receive reports through a proper channel, not via a public post on social media.

- A Triaging System: Not all vulnerabilities are created equal. You need a system to assess the severity of a reported flaw—often using a standard like the Common Vulnerability Scoring System (CVSS)—to prioritise your response effectively. For instance, a critical remote code execution flaw (CVSS 9.8) would be fixed before a low-risk cross-site scripting issue (CVSS 3.5).

- A Patching and Communication Plan: Once a fix is developed, you need a reliable way to deploy the patch to your customers and a clear plan for communicating the issue and its resolution. Transparency is crucial for maintaining customer trust and meeting your regulatory obligations.
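A triage rule like the one in the list above is easy to encode. The sketch below maps CVSS v3 base scores to the standard qualitative ratings and sorts incoming reports so the most severe come first. The `SLA_DAYS` deadlines are hypothetical values you would set in your own policy, and the report titles are invented for illustration.

```python
def severity(score: float) -> str:
    """Map a CVSS v3 base score to its qualitative severity rating."""
    if score == 0.0:
        return "none"
    if score < 4.0:
        return "low"
    if score < 7.0:
        return "medium"
    if score < 9.0:
        return "high"
    return "critical"


# Hypothetical patch deadlines in days; set these in your own policy.
SLA_DAYS = {"critical": 14, "high": 30, "medium": 90, "low": 180}


def triage(reports: list) -> list:
    """Order reports by score (highest first) and attach severity and deadline."""
    queue = []
    for title, score in sorted(reports, key=lambda r: r[1], reverse=True):
        rating = severity(score)
        queue.append(
            {"title": title, "score": score, "severity": rating,
             "patch_within_days": SLA_DAYS.get(rating)}
        )
    return queue


queue = triage([
    ("Cross-site scripting in settings page", 3.5),
    ("Remote code execution in update service", 9.8),
])
for item in queue:
    print(item)
```

Agreeing on these thresholds before launch means that, when a report arrives, the team argues about the fix rather than about the process.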

Integrating these steps is foundational to compliance. For more detailed guidance, explore our in-depth article on CRA vulnerability handling requirements. By embedding these practices early in the development cycle, you transform vulnerability management from an unpredictable emergency into a predictable, auditable, and compliant workflow. This is the essence of a successful shift to left security strategy.

Streamlining Documentation for CRA Compliance

Under the EU’s Cyber Resilience Act (CRA), documentation isn’t just a box-ticking exercise anymore; it’s the official, auditable record of your security diligence. For many product teams, the very thought of having to pull together a massive technical file right at the end of a project is a familiar nightmare.

But what if that final, painful hurdle could be transformed into a natural, continuous part of the development process itself? That’s exactly what a shift to left approach makes possible.

When you start treating security as a core part of the development workflow, the documentation practically writes itself. Every security decision, threat model, and risk assessment you make in the early stages becomes a ready-made building block for your technical file. Instead of trying to reverse-engineer justifications for design choices months down the line, you capture the “why” in real-time.

This creates a living, breathing record of compliance that is far more authentic and robust than anything cobbled together in a last-minute scramble. It gives regulators clear, traceable proof that security was built in, not bolted on.

Turning Decisions into Demonstrable Proof

Let’s say your team is developing a new connected medical device. In a traditional workflow, you might pick an encryption protocol, make a quick note, and only formally document it at the very end.

With a shift to left mindset, that process looks completely different.

- During the Design Phase: The team gets together to discuss data protection. They look at several encryption protocols, weighing up things like cryptographic strength, performance hits, and any known vulnerabilities.

- The Decision is Recorded: They settle on TLS 1.3 for data in transit. That decision—along with the rationale and the risk assessment that led to it—is immediately recorded in a central knowledge base (like a Confluence page or wiki).

- Automatic Compliance Input: Just like that, this entry becomes a key part of your CRA compliance report. You’ve created a piece of auditable evidence that shows secure-by-design principles in action, without any extra effort.
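If you would rather keep these records in version control than in a wiki, a decision can be captured as structured data and exported as evidence. This is only a sketch of one possible shape: the `SecurityDecision` fields, the rationale text, and the date are assumptions for illustration, not a format prescribed by the CRA.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SecurityDecision:
    title: str
    decision: str
    rationale: str
    alternatives_considered: list = field(default_factory=list)
    decided_on: str = ""  # ISO date string, filled in when the decision is made


def to_evidence(record: SecurityDecision) -> str:
    """Serialise a decision as stable, diff-friendly JSON for the technical file."""
    return json.dumps(asdict(record), indent=2, sort_keys=True)


# Hypothetical record for the medical device example.
record = SecurityDecision(
    title="Encryption protocol for data in transit",
    decision="Use TLS 1.3 for data in transit",
    rationale="Strongest option reviewed with acceptable performance on the target hardware",
    alternatives_considered=["TLS 1.2"],
    decided_on="2025-01-15",
)

evidence = to_evidence(record)
print(evidence)
```

Because the record is plain data, the same entry can feed a compliance report, an onboarding guide, and a future security review without anyone retyping it.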

This proactive documentation doesn’t just keep regulators happy; it makes your entire security posture stronger. A clear record of these decisions makes onboarding new engineers a breeze and provides vital context for future updates or security reviews.

The goal is to stop “writing compliance documents” and start “documenting a compliant process.” The focus moves from a painful final deliverable to a continuous, evidence-gathering workflow that happens naturally.

Creating Living Documentation That Works

This approach ensures your technical file isn’t a static relic gathering dust, but a dynamic asset that evolves with your product. Of course, for documentation to be useful, it needs to be clear and accessible. Understanding how to document processes that actually get used is key, as the principles of creating maintainable records apply everywhere.

The benefits go far beyond the initial product launch. Having an organised, detailed record of your product’s security architecture is absolutely essential for effective post-market surveillance—another major requirement of the CRA.

When a new threat or vulnerability emerges, your team can quickly pull up the documentation to understand the potential impact. For instance, if a new attack is discovered against a specific communication protocol, you can instantly reference your design documents to see if your product is affected. This makes responding to new threats faster, more efficient, and a whole lot less stressful.

To see how these records fit together, it’s worth exploring the ideal CRA technical file structure.

By weaving documentation into the earliest stages of the product lifecycle, you build a powerful system of record. It’s a system that not only ensures CRA compliance but also creates a more resilient, secure, and maintainable product for the long haul.

Common Pitfalls When Adopting a Shift to Left Culture

Getting shift to left right is a cultural change first and a technical one second. While the payoff is enormous, the path is littered with common roadblocks that can derail the entire effort. Knowing what these pitfalls look like is the first step to navigating around them and building a security culture that actually sticks.

Making the move to a proactive model is a journey, and too many organisations get tripped up by focusing only on technology or process changes while ignoring the people who have to make it happen. Here are three of the most common challenges we see and how to handle them.

The Tool Trap

One of the most frequent mistakes is assuming a shiny new security tool will magically solve your problems. Teams will spend a small fortune on powerful static analysis (SAST) or dynamic analysis (DAST) tools, only to see them become expensive shelfware because they were never properly embedded into how developers actually work.

For instance, a company might buy a top-tier code scanner but set it up to run a massive scan just once a week. The result? A giant report lands on the security team’s desk, who then create a mountain of tickets for developers. The developers, in turn, see this not as helpful feedback but as a frustrating interruption that’s completely disconnected from their daily coding. The tool is there, but the shift to left has failed because the feedback loop is painfully slow.

The Fix: Weave security tools directly into the developer’s world—their IDE and the CI/CD pipeline. The goal is to give immediate, actionable feedback on small commits. Security should feel more like a spell-checker, not a formal audit.

The Security Gatekeeper Problem

Another classic mistake happens when the security team, often with the best intentions, turns into a bottleneck. In this model, every single security question, code review, or minor decision has to go through a small, overworked group of specialists. Developers end up waiting days for an answer, which grinds development to a halt and breeds resentment.

This “gatekeeper” approach reinforces the old, siloed way of thinking: security is someone else’s problem. It positions the security team as a roadblock to be overcome rather than a partner, completely undermining the collaborative spirit that a true shift to left culture depends on.

A genuine shift to left strategy empowers developers; it doesn’t police them. The security team’s role has to evolve from gatekeeper to educator and enabler, providing the guardrails that help development teams move fast and safely.

A great way to solve this is by creating a “security champions” programme. This means identifying developers on each team who have a real passion for security and giving them extra training. These champions then become the go-to resource for their peers, helping to scale security knowledge across the whole organisation.

Legacy Blind Spots

Finally, many organisations do a great job applying shift to left ideas to their new, greenfield projects but completely ignore their existing legacy products. They pour all their modern tools and processes into new development, while older—and often business-critical—systems are left to operate with huge, unmanaged risks. This creates a dangerous false sense of security.

These legacy blind spots are a major issue under new regulations. The EU’s Cyber Resilience Act timeline demands a rapid shift to left from anyone selling into the EU. The regulation has entered into force, and its core obligations will apply from 11 December 2027, with vulnerability and incident reporting obligations starting earlier, on 11 September 2026. This gives manufacturers a shrinking window to overhaul product development for secure-by-design practices. Existing products are not exempt by default: the reporting obligations cover products already on the market, and an older product that is substantially modified after the deadline must meet the full requirements. For a complete breakdown, you can learn more about the Cyber Resilience Act compliance journey.

To avoid this trap, you need a clear plan to bring legacy systems up to standard. Start by creating an SBOM for these products, run a proper risk assessment, and then prioritise fixing the most critical vulnerabilities. This ensures your entire product portfolio is on the path to compliance, not just the shiny new additions.

Your Questions About Shifting Left for the CRA, Answered

Here are a few common questions we hear about what shifting left means in practice for Cyber Resilience Act compliance. Let’s clear up some key concepts and figure out where to start.

Does “Shift Left” Just Mean Dumping All Security Work on Developers?

Not at all. A genuine shift to left strategy is about shared responsibility, not just shifting blame. While developers are on the front lines using secure coding tools, the role of security experts evolves—they become enablers and educators, not gatekeepers.

Their job is to provide the right training, set up the automated tools, and offer expert guidance when teams get stuck. For example, a security expert might work with a development team to build a reusable, pre-hardened file-upload module. This empowers the developers to handle similar features safely on their own next time. The goal is real collaboration.

We Have Products Already on the Market. Is It Too Late to Shift Left?

No, it’s never too late. You can absolutely apply shift to left thinking to legacy products to manage your risk and start moving towards CRA compliance.

A great first step is generating a Software Bill of Materials (SBOM) for an existing product. This finally gives you a complete inventory of every component inside. From there, you can run a proper risk assessment based on that data and prioritise patching the most critical known vulnerabilities. This alone brings your existing products much closer to meeting the CRA’s demands for ongoing security.

Shifting left on legacy products isn’t about achieving perfection overnight. It’s about making smart, risk-based decisions to create a clear, manageable path to better security and compliance for products already out in the field.

How Will This Affect Our Product Launch Timelines?

Let’s be honest: there’s probably going to be a short learning curve as your teams adjust to new tools and workflows. But in the long run, shifting left almost always speeds up your overall product lifecycle, often dramatically.

By catching security and compliance issues early, you prevent the huge, expensive delays that happen when a critical flaw is discovered days before a launch.

Think about it this way: Finding a design flaw during a review meeting might add a day to the planning phase. Finding that exact same flaw during pre-launch penetration testing could set the project back by three months while everyone scrambles to redesign, recode, and retest. Early detection prevents those last-minute emergencies.

What’s the Single Best First Step We Can Take to Shift Left?

If you do just one thing, start with threat modelling during the design phase of a new feature or product. It’s a low-cost, high-impact exercise that gets your development, security, and product teams in a room to think like an attacker.

This immediately starts building a security-first mindset before anyone writes a single line of code. It reframes security as a creative problem-solving activity, not just a final, dreaded audit you have to pass.

Get clear on your CRA obligations and build a practical compliance plan with Regulus. Our platform helps you assess where you stand, map requirements to your products, and generate the documentation you need to confidently place them on the EU market. Start your CRA compliance journey with Regulus today.