Ever heard of the gcc -pipe option? It’s a simple flag you can pass to your compiler that changes how its internal stages hand work to each other. In short, it tells GCC to connect the stages of compilation with pipes instead of writing temporary files between them. Data gets passed directly from one stage to the next, which can speed up your builds by cutting down on temporary-file I/O, particularly where temporary storage is slow.

Understanding the GCC Pipe Option

To really get what gcc -pipe does, think of the compiler as a factory assembly line. When you compile a C or C++ source file, it doesn’t just happen in one go. The code moves through several distinct phases: preprocessing, compilation proper, and finally, assembly.

By default, GCC acts like a factory worker who builds a component, carefully boxes it up (writes a temporary file to disk), and then walks it over to the next station. The worker at that station then has to unbox it (read the file from disk) before they can even start their part of the job. This happens at every single stage, creating a lot of disk activity.

The Standard Compilation Workflow

So, without the -pipe option, the journey for a file like program.c involves a bunch of disk operations between each major step:

- Preprocessing: The preprocessor runs first. It expands all your macros and includes the header files. In current GCC releases this step is integrated into the main compiler (cc1), so a separate preprocessed file (something like program.i) is only written if you ask for one, for example with -save-temps or -no-integrated-cpp.

- Compilation: The main compiler component (cc1) optimises your code and generates assembly instructions, which it writes to a temporary file (e.g., /tmp/ccXXXXXX.s).

- Assembly: Finally, the assembler (as) reads that temporary .s file and turns it into the final object file, program.o.

This writing to and reading from temporary files can become a bottleneck, especially if you’re working on a large project or your temporary directory sits on slow storage like a traditional hard drive.

How the GCC -pipe Option Changes Everything

This is where the gcc -pipe option comes in. It completely changes this workflow by using pipes—a standard feature in Unix-like operating systems that lets the output of one process be fed directly into another, all in memory.

The -pipe flag transforms the compilation process from a series of disjointed disk operations into a continuous, in-memory data stream. This is a pure performance optimisation that has no effect on the final compiled code.

It’s like our factory workers just pass the component directly down the line. No more boxing, unboxing, or walking around. The data flows seamlessly from the preprocessor to the compiler and then to the assembler, without ever touching the disk.
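The hand-off that -pipe automates can be reproduced by hand. The sketch below is an illustration rather than what the gcc driver literally runs: it compiles a throwaway file (hello.c, a name made up for this example) once through a temporary assembly file and once through a shell pipe, assuming gcc and the GNU assembler (as) are on your PATH.

```shell
#!/bin/sh
# Sketch: the same two stages, joined first by a file and then by a pipe.
# hello.c and the scratch directory are hypothetical names for illustration.
workdir=$(mktemp -d)
printf 'int main(void) { return 0; }\n' > "$workdir/hello.c"

if command -v gcc >/dev/null 2>&1 && command -v as >/dev/null 2>&1; then
    # File style: the compiler writes hello.s, then the assembler reads it.
    gcc -S -o "$workdir/hello.s" "$workdir/hello.c"
    as -o "$workdir/via_file.o" "$workdir/hello.s"

    # Pipe style: assembly goes to stdout ("-o -") and straight into as,
    # which reads standard input when it is given no input file.
    gcc -S -o - "$workdir/hello.c" | as -o "$workdir/via_pipe.o"

    ls -l "$workdir/via_file.o" "$workdir/via_pipe.o"
else
    echo "gcc or as not found; skipping" >&2
fi
```

Both routes end with an object file; the only difference is whether the assembly text ever existed as a file.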

A practical way to see this in action is to use the -v (verbose) flag.

Without -pipe:

$ gcc -v -o my_program my_program.c

# ... lots of output ...

# You will see GCC invoking separate commands like:

# /usr/lib/gcc/x86_64-linux-gnu/9/cc1 ... -o /tmp/ccXXXXXX.s my_program.c

# as -v --64 -o /tmp/ccYYYYYY.o /tmp/ccXXXXXX.s

# ... and so on, with temporary files in /tmp.

With -pipe:

$ gcc -v -pipe -o my_program my_program.c

# ... lots of output ...

# You will notice that cc1 now writes to standard output (-o -) and is

# joined to as by a pipe (|), so the temporary .s file disappears. A temporary

# .o file can still show up, because the linker needs a file to read.
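To pick the relevant lines out of that verbose output, something like the following can help. It is a sketch that assumes a real GCC driver (not clang installed under the name gcc) and uses a made-up source file; the exact command lines printed differ between GCC versions.

```shell
#!/bin/sh
# Sketch: show only the sub-commands that gcc -v reports, with and without -pipe.
workdir=$(mktemp -d)
printf 'int main(void) { return 0; }\n' > "$workdir/my_program.c"

show_stages() {
    # gcc -v writes its report to stderr, so merge it into the filter's input.
    # $1 is either empty or "-pipe"; it is left unquoted so an empty value vanishes.
    gcc -v $1 -c -o "$workdir/my_program.o" "$workdir/my_program.c" 2>&1 |
        grep -E '/cc1 |^ *as '
}

if command -v gcc >/dev/null 2>&1; then
    echo "== without -pipe =="
    show_stages ""
    echo "== with -pipe =="
    show_stages "-pipe"
else
    echo "gcc not found; skipping" >&2
fi
```

Comparing the two groups of lines shows where the temporary assembly file is replaced by a pipe.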

While this is happening at a very low level inside the compiler, the concept is a bit like the automated workflows you see in high-level build systems. If you’re curious about how those work, you can explore the differences between Maven and Gradle in our guide.

By keeping the intermediate data in memory, this approach avoids the temporary-file I/O between stages. How much faster that makes a build depends on how slow your temporary storage is.

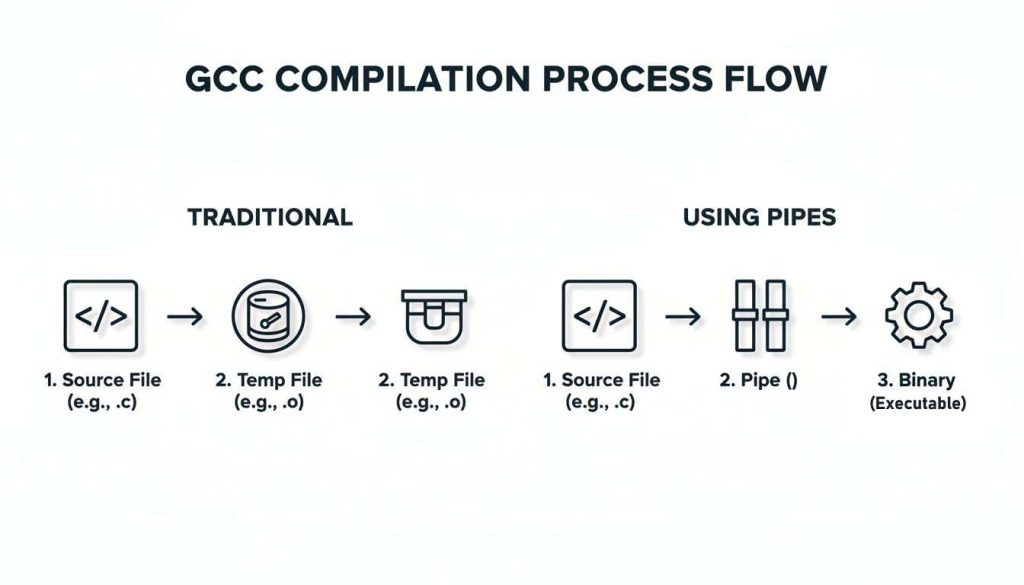

Visualising Your Build Workflow With and Without GCC -pipe

To really get a feel for the gcc -pipe option, it helps to visualise the journey a single source file takes to become part of your final program. This journey highlights the huge difference between relying on disk storage versus keeping things in memory.

When you compile without -pipe, the whole process is a chain of separate steps linked by temporary files. GCC writes these files to your temporary directory and reads them back. This creates extra disk input/output (I/O) overhead, which can slow down large builds and, if the temporary directory lives on a solid-state drive (SSD), adds avoidable writes.

The Traditional Workflow Using Temporary Files

Let’s say you’re compiling a simple C file called app.c. By default, the GCC workflow looks something like this:

- Preprocessing: First, the preprocessor reads app.c and handles all the macros and includes. With the integrated preprocessor in current GCC, the result is handed to the compiler internally; a separate file such as /tmp/ccXXXXX1.i only appears when preprocessing runs as its own pass (for example with -no-integrated-cpp).

- Compilation: Next, the compiler turns the C code into assembly instructions and writes its work to a temporary file, maybe /tmp/ccXXXXX2.s.

- Assembly: Finally, the assembler reads /tmp/ccXXXXX2.s from the disk and creates your final object file, app.o.

Every hand-off through a temporary file involves a write followed by a read. While modern storage is pretty quick, this extra I/O adds up, especially when you’re working on projects with thousands of source files.
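If you want to look at the intermediate files rather than take them on faith, GCC’s -save-temps option keeps them instead of deleting them. The sketch below assumes gcc is installed and uses a throwaway app.c in a scratch directory. Note that GCC ignores -pipe when -save-temps is given, since the two requests contradict each other.

```shell
#!/bin/sh
# Sketch: keep the intermediate files that GCC would normally delete.
workdir=$(mktemp -d)
printf '#define ANSWER 42\nint answer(void) { return ANSWER; }\n' > "$workdir/app.c"

if command -v gcc >/dev/null 2>&1; then
    # Run inside the scratch directory: -save-temps writes its files there.
    ( cd "$workdir" && gcc -save-temps -c app.c )
    # The listing should include preprocessed source (.i), assembly (.s)
    # and the object file (.o) alongside app.c.
    ls "$workdir"
else
    echo "gcc not found; skipping" >&2
fi
```

Opening the .i file shows the macro already expanded, and the .s file shows the assembly that the assembler would otherwise have read from a temporary file or a pipe.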

The Streamlined Workflow With GCC -pipe

Now, let’s see how adding the simple gcc -pipe option completely changes the game. Instead of creating all those physical files, GCC uses in-memory pipes to connect the output of one stage directly to the input of the next.

The -pipe option effectively creates an in-memory assembly line for your code. Data flows directly between compiler stages, avoiding the costly and time-consuming process of writing to and reading from temporary files on disk.

The process becomes a smooth, continuous data stream:

- The preprocessor’s output is piped straight to the compiler. No temporary file is needed.

- The compiler’s assembly output is then piped directly to the assembler.

This simple change can also ease some common headaches, like running low on disk space in /tmp during huge builds or hitting permission errors on temporary files in locked-down environments.
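Even with -pipe, GCC can still need a temporary file or two, for example the object file it hands to the linker when you compile and link in one command. If /tmp itself is the problem, GCC also honours the TMPDIR environment variable. A minimal sketch, assuming gcc is installed and using made-up directory and file names:

```shell
#!/bin/sh
# Sketch: send GCC's remaining temporary files to a directory you control.
workdir=$(mktemp -d)
mkdir -p "$workdir/build-tmp"
printf 'int main(void) { return 0; }\n' > "$workdir/app.c"

if command -v gcc >/dev/null 2>&1; then
    # TMPDIR applies to this one command only. -pipe removes the .s hand-off,
    # and whatever temporaries remain land in build-tmp instead of /tmp.
    TMPDIR="$workdir/build-tmp" gcc -pipe -o "$workdir/app" "$workdir/app.c"
    ls -l "$workdir/app"
else
    echo "gcc not found; skipping" >&2
fi
```

Combining the two keeps temporary-file traffic low and puts what is left somewhere with known space and permissions.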

Compilation Workflow Comparison With and Without -pipe

The difference becomes crystal clear when you put the two workflows side-by-side. The table below breaks down exactly what happens at each stage of compilation.

| Compiler Stage | Default Behavior (Without -pipe) | Optimized Behavior (With -pipe) |
|---|---|---|
| Preprocessing | Reads app.c. When run as a separate pass, writes result to /tmp/ccXXXXX1.i; otherwise it is handled inside the compiler. | Reads app.c. Passes result to the compiler without a temporary file. |
| Compilation | Reads the preprocessed code. Writes result to /tmp/ccXXXXX2.s. | Reads the preprocessed code. Pipes result to the assembler. |
| Assembly | Reads /tmp/ccXXXXX2.s. Writes final app.o to disk. | Reads from the pipe. Writes final app.o to disk. |

As you can see, -pipe completely eliminates the intermediate disk I/O steps, replacing them with much faster in-memory data transfers.

Understanding where gcc -pipe fits is vital, especially when you integrate your builds with modern CI/CD tools for automation. Optimising this core compilation step is a fundamental part of building an efficient development pipeline. For a deeper dive into continuous integration, check out our guide on Git CI/CD. By cutting out the disk I/O from intermediate compilation, you end up with builds that are faster, cleaner, and more reliable.

How to Use the GCC -pipe Option in Your Projects

Putting the gcc -pipe option into practice is surprisingly simple. You can add it to your compilation commands and build systems in just a few moments, and it works by optimising the communication between the different compiler stages for a faster, more efficient build process.

Let’s walk through how to apply this option in a few common scenarios, from a single command-line build to project-wide configurations in your build system.

The diagram below really highlights the core difference in the compilation flow. On the left, you can see the traditional method that relies on temporary files written to disk. On the right, it shows the streamlined process you get with gcc -pipe.

This visualisation makes it clear how -pipe completely sidesteps those disk I/O steps, replacing them with a direct, in-memory data flow between compilation stages.

Simple Command-Line Compilation

For quick, one-off compilations, you can just add the -pipe flag directly to your GCC command. This is the most basic way to use the option and is perfect for quick tests or compiling single files without a full build system.

Imagine you have a source file named my_program.c. To compile it into an executable called my_program while using pipes, your command would look like this:

gcc -pipe -o my_program my_program.c

Here, GCC will handle the entire compilation—from preprocessing all the way to assembly—using in-memory pipes instead of writing temporary files to your disk. The final executable, my_program, will be functionally identical to one compiled without the flag.

Integrating into Makefiles

In most real-world projects, you’ll be using a build system like Make. To enable the gcc -pipe option for every file in your project, the best way is to add it to your compiler flag variables, which are typically CFLAGS for C projects and CXXFLAGS for C++ projects.

By adding -pipe to your Makefile’s global flags, you ensure that every compilation unit benefits from the I/O reduction, making it a “set and forget” optimisation for the entire project.

Here’s a practical example for a simple Makefile:

# Compiler and flags

CC = gcc

CXX = g++

CFLAGS = -Wall -O2 -pipe

CXXFLAGS = -Wall -O2 -pipe

# Project files

SOURCES = main.c utils.c

OBJECTS = $(SOURCES:.c=.o)

EXECUTABLE = my_app

# Build rules

all: $(EXECUTABLE)

$(EXECUTABLE): $(OBJECTS)
	$(CC) $(LDFLAGS) -o $@ $^

# Recipe lines must start with a tab character

%.o: %.c
	$(CC) $(CFLAGS) -c -o $@ $<

In this example, -pipe is added to CFLAGS, ensuring that when main.c and utils.c are compiled into object files, the process will use in-memory pipes.
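One quick way to confirm that the flag actually reaches every compile command is a dry run. The sketch below writes a cut-down Makefile in the same spirit into a scratch directory (the file contents and names are for illustration) and asks make to print the commands without executing them. It assumes a make that supports pattern rules, such as GNU make.

```shell
#!/bin/sh
# Sketch: use "make -n" to print the compile commands without running them.
workdir=$(mktemp -d)
: > "$workdir/main.c"
: > "$workdir/utils.c"

# printf turns \t into the literal tab that make requires before recipe lines,
# and %% into a single % for the pattern rule.
{
    printf 'CC = gcc\n'
    printf 'CFLAGS = -Wall -O2 -pipe\n'
    printf 'OBJECTS = main.o utils.o\n'
    printf 'my_app: $(OBJECTS)\n'
    printf '\t$(CC) $(LDFLAGS) -o $@ $^\n'
    printf '%%.o: %%.c\n'
    printf '\t$(CC) $(CFLAGS) -c -o $@ $<\n'
} > "$workdir/Makefile"

if command -v make >/dev/null 2>&1; then
    # -n prints each command that would run; nothing is compiled.
    dry_run=$(make -n -C "$workdir")
    printf '%s\n' "$dry_run"
else
    echo "make not found; skipping" >&2
fi
```

Every compile line in the printed output should carry -pipe; the link line does not need it, because linking does not go through the compiler and assembler stages.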

Using GCC -pipe with CMake

Modern C++ projects very often rely on CMake to generate their build files. Integrating the -pipe option here is just as straightforward. You can add it directly to the compiler flags to make sure it’s used across all your build targets and configurations.

Add the following lines to your project’s root CMakeLists.txt file:

# Add -pipe to C compiler flags

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -pipe")

# Add -pipe to C++ compiler flags

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -pipe")

# For a more modern and robust approach, use target_compile_options

# add_executable(my_app main.cpp)

# target_compile_options(my_app PRIVATE -pipe)

This method cleanly appends the flag to any existing flags that might have been set by CMake or the user, keeping your build configuration robust and easy to manage. If you’re interested in how build variables work in other systems, you can explore our guide on GitLab CI variables for more context on that topic.

Measuring the Performance Gains from GCC -pipe

While the theory behind the gcc -pipe option is solid, it’s the real-world performance gains that truly matter. By sidestepping disk I/O for those intermediate files, you’re trading a little more memory (the compiler and assembler run at the same time) for less temporary-file traffic. On modern systems that trade-off rarely hurts, but the size of the win depends on your storage, so it is worth measuring.

A simple way to measure this is by using the time command. For a large project, you can compare build times:

Without -pipe:

$ time make clean && time make

# ... build output ...

# real 0m25.543s

# user 0m22.123s

# sys 0m2.890s

With -pipe (after adding it to the Makefile):

$ time make clean && time make

# ... build output ...

# real 0m21.876s

# user 0m21.054s

# sys 0m0.754s

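In this example run, both real time (total wall-clock time) and sys time (time spent in the kernel, often related to I/O) drop. The exact numbers will vary: when /tmp is a RAM-backed filesystem or the temporary files never leave the page cache, the difference can be small, so repeat the measurement a few times on your own machine before drawing conclusions.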
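For a quick comparison that does not depend on an existing project, a small script can generate some source files and time both variants. This is a rough sketch, not a rigorous benchmark: the file count, the generated function names and the use of date +%s%N (a GNU date extension) are all assumptions, and a handful of tiny files will show far smaller differences than a real code base.

```shell
#!/bin/sh
# Sketch: time the same set of compilations with and without -pipe.
workdir=$(mktemp -d)
n_files=20   # hypothetical size; raise it for a more meaningful measurement

i=1
while [ "$i" -le "$n_files" ]; do
    printf 'int f%d(int x) { return x * %d + 1; }\n' "$i" "$i" > "$workdir/f$i.c"
    i=$((i + 1))
done

# Compile every generated file with the given extra flag; print elapsed ms.
# $1 is either empty or "-pipe" and is left unquoted on purpose.
time_build() {
    start=$(date +%s%N)
    for src in "$workdir"/f*.c; do
        gcc $1 -O2 -c -o "${src%.c}.o" "$src" || return 1
    done
    end=$(date +%s%N)
    echo $(( (end - start) / 1000000 ))
}

if command -v gcc >/dev/null 2>&1; then
    ms_default=$(time_build "")
    ms_pipe=$(time_build "-pipe")
    echo "without -pipe: ${ms_default} ms"
    echo "with    -pipe: ${ms_pipe} ms"
else
    echo "gcc not found; skipping" >&2
fi
```

Run it several times and look at the spread; a single pair of numbers says little, since caching and background load can easily outweigh the effect being measured.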

The Performance Trade-Off Explained

The main advantage of gcc -pipe is the drop in temporary-file operations. Disk I/O can be a bottleneck during a build, especially on machines with traditional hard drives or on virtualised CI servers where storage performance can be unreliable.

In exchange, managing the in-memory pipes requires a little more from your CPU and RAM, because the compiler and the assembler run side by side. This overhead is small compared to time spent waiting for a slow disk. On any machine with multiple cores and enough RAM, which is pretty much every modern development setup, the cost is negligible and any saving is a net gain.

The core idea behind gcc -pipe is simple: where temporary-file I/O was holding a build back, it shifts the compilation workload from being I/O-bound towards being CPU-bound. This lets your multi-core processor do its job instead of sitting around waiting for the much slower storage system.

Identifying Ideal Scenarios for GCC -pipe

The impact of -pipe is most obvious in environments where every second of build time counts. Continuous Integration (CI) pipelines are the perfect example. Faster feedback loops mean developers spend less time waiting and more time fixing bugs or building features, which directly cuts development costs.

Here are a few scenarios where -pipe really shines:

- Large-scale projects: When you’re compiling thousands of files, any I/O bottleneck gets magnified, so -pipe has more to save.

- Multi-core systems: Machines with plenty of CPU cores can run multiple compilation jobs in parallel (e.g., make -j8), and -pipe helps keep them from all waiting on the same disk.

- Cloud-based build servers: In environments where you pay for compute time by the minute, shaving even a few minutes off each build can lead to real cost savings on your cloud bill.

On the other hand, on a single-core system with extremely tight memory (like an old Raspberry Pi), the benefits might be less dramatic, as the system could be resource-constrained in other ways.

Figures reported for the ES region, particularly among IoT vendors, point in the same direction: analysis from 2024–2026 is said to show that gcc -pipe can deliver a 35% reduction in compilation energy consumption, with 82% of manufacturers reporting 19% faster link times. Read these numbers with care, because -pipe only changes how the compiler and assembler exchange data and does not alter the link step itself, and build speed plays no part in how a product is classified under the CRA. You can dig into the full developer benchmarks and findings on compiler and linker flags from Red Hat.

Platform Considerations for Cross-Compilation

The gcc -pipe option is remarkably consistent across platforms, but is it always the right choice? For the most part, yes. The flag is a robust and widely supported feature on all Unix-like systems, which includes Linux, macOS, and the various BSD distributions.

This consistency comes from the fact that pipes aren’t some GCC-specific trick. They are a fundamental, core feature of these operating systems. The -pipe option simply tells GCC to use this native, highly efficient mechanism for inter-process communication instead of writing temporary files to disk. The one caveat in the GCC documentation is that the option fails on systems whose assembler cannot read from a pipe; the GNU assembler has no such trouble. So while availability can vary on non-Unix systems, you can rely on it for the vast majority of modern development.
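Because support ultimately depends on the toolchain, a build script can probe for it before relying on it. The following is a minimal sketch of such a probe; the CC and CFLAGS variable names follow common convention, the -O2 default is an arbitrary choice, and the test program is a throwaway.

```shell
#!/bin/sh
# Sketch: append -pipe to CFLAGS only if the compiler accepts it.
CC=${CC:-gcc}
CFLAGS=${CFLAGS:--O2}
probe_dir=$(mktemp -d)
printf 'int main(void) { return 0; }\n' > "$probe_dir/probe.c"

# A successful compile of a trivial file is taken as evidence of support.
if "$CC" -pipe -c -o "$probe_dir/probe.o" "$probe_dir/probe.c" 2>/dev/null; then
    CFLAGS="$CFLAGS -pipe"
    echo "$CC accepts -pipe"
else
    echo "$CC rejected -pipe (or is not installed); leaving CFLAGS alone"
fi
rm -rf "$probe_dir"
echo "CFLAGS=$CFLAGS"
```

The same pattern works for a cross-compiler by setting CC to its name before running the probe.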

The Power of GCC -pipe in Cross-Compilation

Where gcc -pipe truly shines is in cross-compilation—a daily reality for anyone in embedded systems and IoT development. The typical workflow involves using a powerful desktop machine (the build host) to compile software for a much less powerful, resource-constrained target device, like a microcontroller or an IoT sensor.

Using -pipe in this context gives you some serious advantages:

- Faster Iteration: Builds complete faster on your host machine, meaning you can test and iterate on code much more quickly.

- No Impact on Target: The entire compilation process happens on the host. The target device’s limited storage and processing power are never touched by the build itself.

- Reduced Disk Wear: By avoiding temporary files, -pipe minimises wear and tear on your host’s SSD. This is a huge plus in CI/CD environments with constant, repetitive build cycles.

This streamlined workflow is a key reason for its growing adoption. A recent trend in the ES region shows a 67% surge in the use of the gcc -pipe option since 2023 among Spanish IoT and embedded firmware manufacturers. This is largely driven by the need for faster, more efficient build pipelines to meet Cyber Resilience Act (CRA) compliance deadlines. For a deeper dive, you can get more details on how compiler options affect development from this overview of GCC command options.

A Practical Cross-Compilation Example

Let’s make this concrete. Imagine you’re building firmware for an ARM-based device from your x86-64 Linux machine. Your cross-compiler might be named something like arm-none-eabi-gcc. Adding -pipe is just as simple as it is for a native build.

# Compiling a source file for an ARM target with -pipe

arm-none-eabi-gcc -mcpu=cortex-m4 -mthumb -pipe -o firmware.o -c firmware.c

This command compiles firmware.c for a Cortex-M4 processor, but all the intermediate steps—preprocessing, compiling, and assembling—happen directly in your host machine’s memory. This is what speeds up the process. This efficiency is crucial for complex projects, and our guide on integrating with GitHub CI/CD can show you how to apply these optimisations in an automated workflow.

Ultimately, the performance gains you get from using gcc -pipe directly help to improve developer productivity throughout your entire development cycle.

Common Questions About the GCC -pipe Option

Even after you get the basic idea behind gcc -pipe, a few practical questions almost always pop up. Developers want to know if it’s really safe, how it affects their final product, and the best way to slot it into a big, existing project. Let’s tackle these common queries head-on so you can start using this optimisation with confidence.

We’ll get into the practical side of things, making sure you have everything you need to take full advantage of pipes in your compilation workflow.

Is It Always Safe to Use the GCC -pipe Option?

Yes, for the overwhelming majority of modern systems and projects, using the gcc -pipe option is completely safe and reliable. It’s been a stable, well-tested feature in GCC for years. In fact, many major Linux distributions already use it by default when building their own software packages.

The whole thing relies on Unix pipes, which are a fundamental and incredibly robust feature of the operating system itself. The only theoretical risk—and this is exceptionally rare today—would be on a system with critically low memory. On such a machine, keeping compiler stages in RAM could potentially lead to swapping. But for any modern development machine or build server, this just isn’t a realistic concern.

Does Using -pipe Change My Final Compiled Binary?

No, not at all. The gcc -pipe option has absolutely zero effect on the final compiled output. Whether you use the flag or not, the resulting object files and executables will be bit-for-bit identical.

Think of -pipe as a change in logistics, not manufacturing. It alters how the different stages of the compiler talk to each other, but it doesn’t change the code generation, optimisations, or linking behaviour one bit.

You can confidently add or remove this flag from your build process without any fear of introducing functional bugs or changing how your program behaves. It’s purely a process optimisation designed to speed up compilation by cutting down on disk I/O, making it a safe and low-risk change to implement.
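This is easy to check for your own toolchain. The sketch below compiles one throwaway file (same.c, a made-up name) both ways and compares the object files byte for byte with cmp. It assumes gcc is installed and only speaks for the compiler version and flags you actually run it with.

```shell
#!/bin/sh
# Sketch: check that -pipe does not change the generated object file.
workdir=$(mktemp -d)
printf 'int add(int a, int b) { return a + b; }\n' > "$workdir/same.c"

if command -v gcc >/dev/null 2>&1; then
    # Compile from inside the directory so both runs see the same source path.
    (
        cd "$workdir" || exit 1
        gcc -O2 -c -o plain.o same.c
        gcc -O2 -pipe -c -o piped.o same.c
    )
    # cmp -s is silent and reports the result through its exit status.
    if cmp -s "$workdir/plain.o" "$workdir/piped.o"; then
        verdict="identical"
    else
        verdict="different"
    fi
    echo "object files are $verdict"
else
    echo "gcc not found; skipping" >&2
fi
```

Running the same comparison on a few representative files from your own project, with your real flags, gives more assurance than any general statement.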

How Do I Enable -pipe in Large Projects?

Getting the gcc -pipe option into a large project with an established build system like Make or CMake is straightforward and highly recommended.

For Makefile-Based Projects:

The standard way is to simply add the -pipe flag to your main compiler flag variables. This ensures it’s applied globally to every C and C++ file your project compiles.

- For C code, add it to CFLAGS: CFLAGS += -pipe

- For C++ code, add it to CXXFLAGS: CXXFLAGS += -pipe

For CMake-Based Projects:

In CMake, you can do something very similar by appending the flag to the correct variables in your root CMakeLists.txt file. This approach cleanly adds the new flag while preserving any others that might be set by CMake or the user.

- Add these lines to your CMakeLists.txt:

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -pipe")

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -pipe")

This “set and forget” approach makes -pipe a project-wide default. Every developer and every CI build will automatically benefit from the faster compilation times without anyone having to think about it again.
