If you sell digital products in the EU, the Cyber Resilience Act’s Article 14 is about to change your world. It introduces strict, mandatory reporting obligations for manufacturers, moving vulnerability disclosure from a voluntary practice to a legally binding requirement.

Under these new rules, you must notify authorities about any actively exploited vulnerability within 24 hours, followed by a more detailed report within 72 hours. This is a massive shift, and getting it wrong carries serious consequences.

Unpacking Your Article 14 Reporting Duties

The Cyber Resilience Act (CRA) creates a formal, EU-wide system for handling serious security issues. At the centre of this system is Article 14, which ensures key authorities get early warnings to help stop threats from spreading across the market.

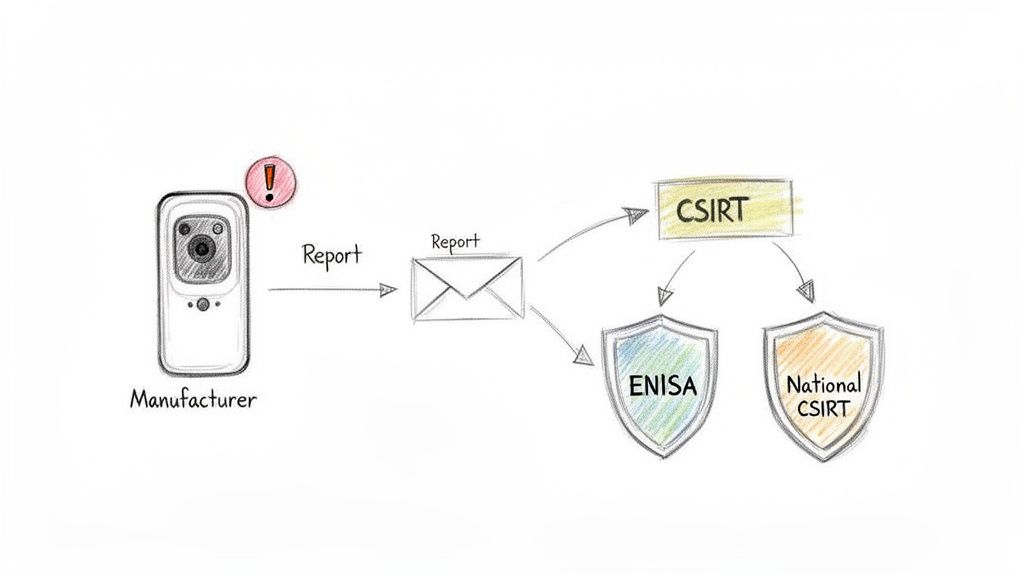

Think of it as a public health system for digital products. When a manufacturer discovers a serious, actively exploited vulnerability, their mandatory report acts as an alert. This gives the EU’s cybersecurity agency, ENISA, and national Computer Security Incident Response Teams (CSIRTs) the intel they need to coordinate a response and protect the entire market.

Who Is Responsible for Reporting?

The duty to report under Article 14 falls squarely on the manufacturer. The CRA defines a manufacturer as the entity that develops or manufactures a product with digital elements, or has it designed, developed or manufactured, and markets it under its own name or trademark.

For example, if you build a connected baby monitor, develop a project management SaaS platform, or produce an industrial robot with network capabilities, you are the manufacturer. These reporting duties are a non-negotiable part of placing your product on the EU market.

What Triggers a Report?

Not every bug you find triggers a 24-hour countdown. The CRA focuses everyone’s attention on the most urgent threats. A report is only required in one of two specific situations:

- Actively Exploited Vulnerabilities: This isn’t a theoretical weakness found during a routine scan. It’s a flaw in your product that you have solid evidence is being used by attackers in the wild. For example, you receive credible reports from multiple customers that their systems were compromised using a specific flaw in your software.

- Severe Security Incidents: This refers to an incident with a significant impact on the security of your product or your users’ systems. Think incidents that disrupt essential services, compromise networks, or cause major material damage. A practical example would be a ransomware attack that renders a hospital’s connected medical devices unusable.

Key Takeaway: The focus of Article 14 is on active, real-world threats. A vulnerability found by your internal security team doesn’t automatically start the clock. But the moment you find proof that attackers are using it against a customer, your 24-hour reporting window opens.

For instance, imagine your company makes a smart camera. You discover a flaw that could allow unauthorised access—that’s a vulnerability. If you then find credible evidence that attackers are using this exact flaw to spy on users, it becomes an actively exploited vulnerability, and your reporting obligation under Article 14 kicks in immediately.
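The distinction between a vulnerability and a reportable event can be captured in a small triage check. The following is a minimal sketch, not an official test: the `Finding` class and its field names are hypothetical, and deciding what counts as "credible evidence" still requires human judgement.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A hypothetical triage record; field names are illustrative only."""
    product: str
    description: str
    exploitation_evidence: bool = False  # credible evidence of in-the-wild use
    severe_incident: bool = False        # incident with significant security impact


def reporting_clock_starts(finding: Finding) -> bool:
    """Return True if either trigger described above applies."""
    return finding.exploitation_evidence or finding.severe_incident


camera_flaw = Finding("smart camera", "unauthorised access to the video stream")
print(reporting_clock_starts(camera_flaw))  # False: a vulnerability, not yet exploited

camera_flaw.exploitation_evidence = True
print(reporting_clock_starts(camera_flaw))  # True: actively exploited
```

Keeping the two flags separate makes it easy to record in your audit trail which trigger started the clock.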

Grasping these core concepts is the first step toward building a compliant process. The focus here is on EU-wide obligations, as specific national data is still emerging. For more detail on how these rules apply across the single market, you can read a comprehensive guide on the EU-level reporting framework from CECIMO. The next sections will build on this foundation, detailing the exact timelines and practical steps your team needs to take.

Navigating The Critical Reporting Timelines

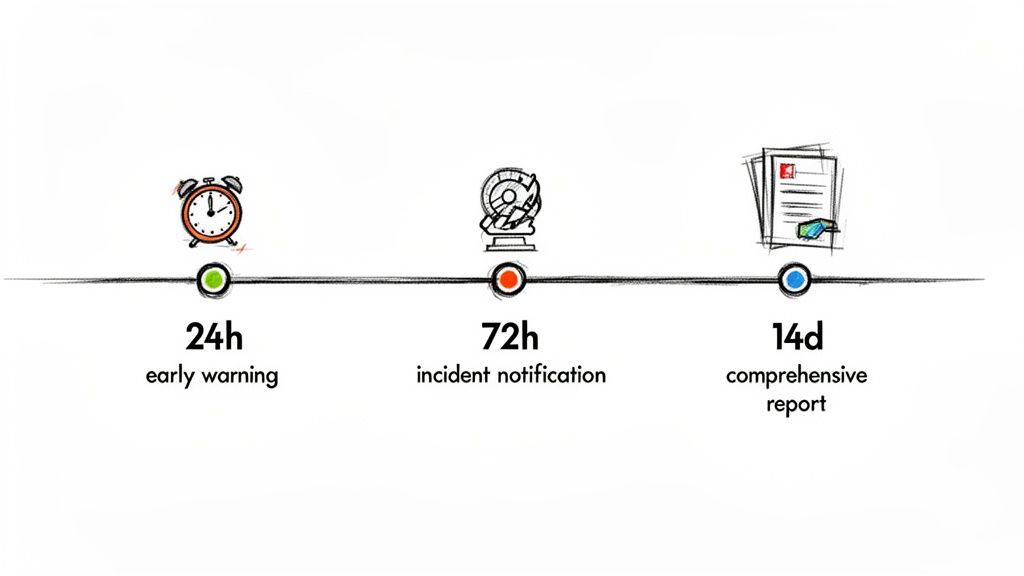

When an incident hits, the clock starts ticking. Under the Cyber Resilience Act, your reporting duties are governed by a strict, escalating series of deadlines. These aren’t just suggestions; they are firm legal obligations designed to give authorities rapid visibility into active threats.

For vulnerabilities, the entire reporting cascade is triggered by one specific event: active exploitation. This isn’t just any bug. It’s a flaw in your product that you have reliable evidence is being actively used by attackers right now.

For instance, this could be a zero-day in your firmware being used in a ransomware attack against a customer. Or maybe your own security team confirms an intrusion that leveraged a specific weakness in your product. The moment you have that confirmation, the 24-hour countdown begins.

The First 24 Hours: The Early Warning

The first report is all about speed, not depth. You are required to notify your designated national CSIRT and ENISA without undue delay, and absolutely no later than 24 hours after becoming aware of the active exploitation.

Think of this initial alert as a flare sent up to signal a problem. You’re letting the EU authorities know a potentially widespread issue exists so they can prepare. At this early stage, nobody expects you to have all the answers.

The goal is to provide a quick heads-up that includes:

- Your identity as the manufacturer.

- The name and type of the affected product.

- A brief description of the exploitation you’ve observed.

A practical example: the early warning itself could be as simple as, “This is an early warning from SmartGrid Solutions GmbH. Our ‘PowerFlow 3000’ energy grid controller is being actively exploited. Initial reports suggest remote unauthenticated access is possible. More details will follow.”
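If your PSIRT prepares early warnings from a template, the three fields listed above map naturally onto a small record. This is a sketch under assumptions: the `EarlyWarning` class, its field names, and the extra `aware_since` timestamp are illustrative and do not reflect any official submission schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class EarlyWarning:
    manufacturer: str
    product_name: str
    product_type: str
    exploitation_summary: str
    aware_since: str  # ISO 8601, UTC (an assumed extra field for the audit trail)


def build_early_warning(manufacturer: str, product_name: str, product_type: str,
                        summary: str, aware_since: datetime) -> EarlyWarning:
    """Assemble the minimal early-warning fields, normalising the timestamp to UTC."""
    if aware_since.tzinfo is None:
        raise ValueError("aware_since must be timezone-aware")
    return EarlyWarning(manufacturer, product_name, product_type, summary,
                        aware_since.astimezone(timezone.utc).isoformat())


warning = build_early_warning(
    "SmartGrid Solutions GmbH",
    "PowerFlow 3000",
    "energy grid controller",
    "Remote unauthenticated access observed; more details will follow.",
    datetime(2026, 9, 14, 8, 30, tzinfo=timezone.utc),
)
print(json.dumps(asdict(warning), indent=2))
```

Rejecting naive timestamps up front avoids ambiguity later about when the 24-hour window actually opened.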

The 72-Hour and 14-Day Progress Reports

After that initial flare, your subsequent reports must provide progressively more detail as your investigation unfolds. The table below summarises each reporting stage and what is expected at that point.

CRA Article 14 Reporting Timelines At A Glance

| Report Stage | Recipient | Deadline | Purpose |
|---|---|---|---|
| Early Warning | National CSIRT & ENISA | Within 24 hours of becoming aware | Initial alert about an actively exploited vulnerability. Speed over detail. |
| Vulnerability Notification | National CSIRT & ENISA | Within 72 hours of becoming aware | A more detailed update on the vulnerability, its potential impact, and any initial mitigation advice. |
| Final Report | National CSIRT & ENISA | Within 14 days of a fix being available (or one month after the 72-hour notification for severe incidents) | A comprehensive report detailing the root cause, the fix, affected users, and preventative actions. |

This structured flow of information is designed to keep authorities informed as you move from initial detection to full resolution.
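Because every deadline is an offset from a known timestamp, the due dates can be computed as soon as the clock starts. The sketch below covers the vulnerability track only; the function name and dictionary keys are hypothetical, and it assumes the 24-hour and 72-hour windows run from the moment of awareness while the 14-day window runs from the moment a fix is available.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional


def vulnerability_deadlines(aware_at: datetime,
                            fix_available_at: Optional[datetime] = None
                            ) -> Dict[str, datetime]:
    """Compute latest submission times for each reporting stage."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "vulnerability_notification": aware_at + timedelta(hours=72),
    }
    # The final report can only be scheduled once a fix exists.
    if fix_available_at is not None:
        deadlines["final_report"] = fix_available_at + timedelta(days=14)
    return deadlines


aware = datetime(2026, 9, 14, 8, 30, tzinfo=timezone.utc)
fixed = datetime(2026, 9, 20, 12, 0, tzinfo=timezone.utc)
for stage, due in vulnerability_deadlines(aware, fixed).items():
    print(f"{stage}: {due.isoformat()}")
```

These are outer limits. The notifications are still due without undue delay, so internal SLAs should sit well inside them.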

Let’s walk through a practical example. Imagine your company, SmartHome Innovations, makes a popular smart thermostat. Your incident response team confirms a critical vulnerability is being exploited in the wild, allowing attackers to remotely control home heating systems. This is your trigger.

Here’s how the reporting journey would look:

Within 24 Hours: SmartHome Innovations submits its early warning to its national CSIRT and ENISA. The report is simple: it identifies the company and states that its “ThermoSmart Model X” has an actively exploited vulnerability.

Within 72 Hours: The team follows up with a vulnerability notification. This report adds crucial context, detailing the nature of the flaw, its potential impact (unauthorised control of heating), and initial advice for users, like disconnecting the device from the internet until a patch is ready.

Within 14 Days: After deploying a patch and finishing its analysis, the company submits a final, comprehensive report. This document includes a full root cause analysis, the technical details of the fix, an estimate of affected users, and what actions have been taken to prevent it from happening again.

Understanding these EU-wide reporting deadlines is non-negotiable. You can learn more about the official policy on the European Commission’s digital strategy site. For a broader view, you might also be interested in our deep-dive into the full CRA compliance timeline from 2025 to 2027. This blueprint is your guide to turning a potential crisis into a controlled, compliant response.

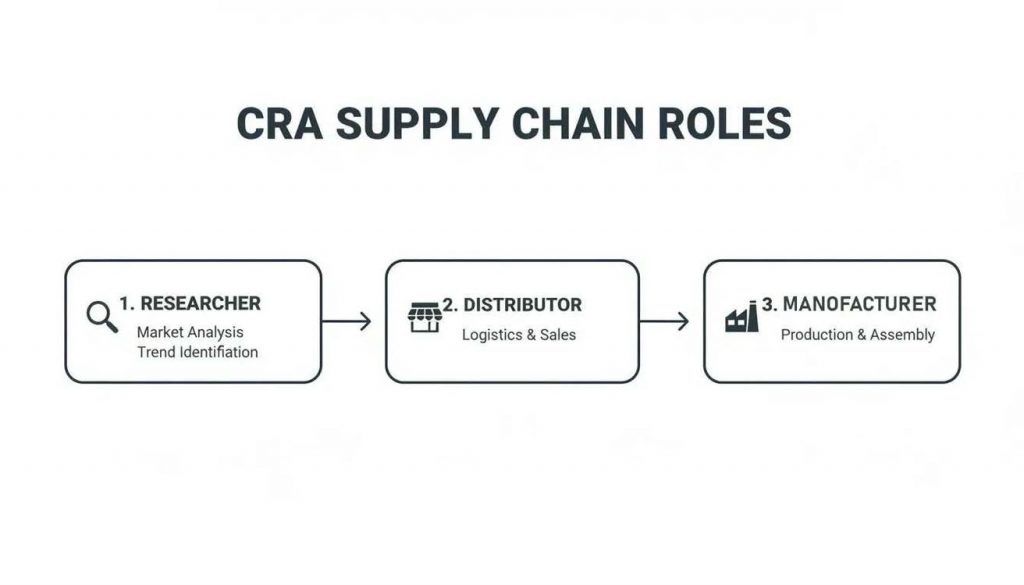

Clarifying Roles In Your Supply Chain

Achieving compliance with the Cyber Resilience Act is a team sport, not a solo mission. Your responsibility as a manufacturer doesn’t exist in a vacuum; it’s deeply connected to every other link in your supply chain. While you hold the ultimate legal duty for CRA reporting obligations under Article 14, your partners are crucial for success.

Importers and distributors are your essential eyes and ears on the ground. The CRA formally obliges them to act as a vital communication channel. If they discover or are informed of a vulnerability in one of your products, they are legally required to notify you immediately.

This creates a mandatory information-sharing network designed to get critical threat intelligence to the one entity that can actually fix it: you.

Tracing the Path of a Vulnerability Report

Let’s walk through a practical example to make this crystal clear. Imagine you’re a German manufacturer of industrial machinery that uses connected sensors. One of your machines is sold through a French distributor.

Here’s how the information flow for a vulnerability report would work:

- Discovery: An independent security researcher in France discovers a serious flaw in an open-source component used in your machine’s sensor software. They find a way to remotely access the sensor’s data.

- Initial Contact: The researcher responsibly discloses this finding to the French distributor who sold the machine, since they are the most visible local contact point.

- Distributor’s Duty: Under their own CRA obligations, the French distributor cannot sit on this information. They must immediately forward the entire report to you, the manufacturer.

- Manufacturer’s Responsibility: The moment you receive this report, your Product Security Incident Response Team (PSIRT) takes over. You analyse the vulnerability, confirm its severity, and determine if it’s being actively exploited.

- Official Reporting: If you confirm active exploitation, the final responsibility falls squarely on your shoulders. You must fulfil the official CRA reporting obligations under Article 14 by sending the 24-hour early warning to ENISA and your national CSIRT, followed by the more detailed reports.

This collaborative flow ensures that no matter where a vulnerability is first spotted, the report finds its way to the manufacturer who must take official action.

This structure transforms your supply chain from a simple sales channel into a powerful compliance network. Each partner has a defined role in funnelling security information back to you, the manufacturer, who is ultimately accountable for official reporting.

Understanding these interconnected duties is fundamental. For a deeper look into what this means for your specific role, you can learn more about the complete list of CRA manufacturer obligations in our dedicated guide.

Your Partners Are Your First Line of Defence

This system highlights a crucial strategic point: you have to enable your partners to help you. Your importers and distributors need clear, simple channels to report issues they find.

If they don’t know how to reach you or the process is a bureaucratic nightmare, critical information will get lost. That puts you directly at risk of non-compliance.

For example, a distributor who receives a vulnerability report from a customer should be able to find your dedicated security contact email (security@your-company.com) directly on your website or in their partner documentation within minutes. If they have to spend hours searching, you lose precious time and risk a compliance failure.

By fostering this collaborative ecosystem, you not only meet legal requirements but also strengthen the overall security of your products on the market.

Building Your Compliant Reporting Process Step By Step

Turning legal text into a repeatable, day-to-day workflow is the heart of sustainable compliance. To meet the CRA reporting obligations under Article 14, you can’t just rely on good intentions. You need a structured, documented process that turns the potential chaos of incident response into a predictable, auditable system.

It all starts with clear ownership and a central hub for all vulnerability-related activities. This is precisely the job of a Product Security Incident Response Team (PSIRT). Whether you build a new team or empower an existing one, their mission is to manage every vulnerability from the moment it’s discovered until it’s fully remediated.

Establish Your Incident Response Framework

First things first: you need a formal incident response plan. Think of this as your playbook for when a potential security issue lands on your desk. It ensures everyone knows their role and what to do next, eliminating high-pressure guesswork.

Your plan should map out the entire process, from start to finish:

- Intake: How do you actually receive vulnerability reports from inside and outside the company?

- Triage: What’s the process for validating a report and gauging its severity?

- Investigation: Who is responsible for the deep technical analysis of the vulnerability?

- Reporting: What are the exact steps for notifying ENISA and the relevant CSIRTs within that tight 24-hour window?

- Remediation: How do you develop, test, and ship a security patch to your customers?

A solid incident response plan isn’t just a compliance checkbox; it’s a resilience-builder. It means that when a real threat emerges, your team can execute a coordinated, calm response instead of panicking. That protects your customers and, just as importantly, your brand’s reputation.

A crucial, and often overlooked, part of credible CRA reporting is a strong focus on managing data quality for every piece of information involved. Every report, assessment, and remediation log must be accurate and consistent if it’s going to withstand scrutiny. This discipline ensures the information you submit to regulators is both credible and defensible.

Set Up Secure and Clear Reporting Channels

You can’t fix vulnerabilities you don’t know about. That’s why making it incredibly easy for security researchers, partners, and even customers to report issues is non-negotiable. This means setting up dedicated, secure channels that are simple to find and use.

For instance, a common best practice is to establish a well-publicised point of contact. This usually includes:

- A Dedicated Email Address: A simple, memorable address like security@yourcompany.com is the industry standard. This inbox needs to be constantly monitored by your PSIRT.

- A Web Form: A structured form on your website can guide reporters to provide the essential information right away, like the affected product, version, and a technical description of the flaw.

This infographic shows how information flows from researchers and distributors to you, the manufacturer. It really highlights why those clear intake channels are so important.

As you can see, you are the central point for processing vulnerability information before it triggers an official CRA reporting obligation under Article 14.

Integrate and Automate Your Workflow

Once a report comes in, it must enter a documented workflow. Let’s be honest: tracking vulnerabilities in spreadsheets is a recipe for disaster, especially when you’re up against tight deadlines. Integrating your reporting channels with an internal ticketing system like Jira or ServiceNow is a total game-changer.

This integration instantly turns a simple email into a trackable, auditable record. For example, you can create an automation rule where any email sent to security@yourcompany.com automatically generates a new high-priority ticket in your PSIRT’s Jira project. This immediately assigns the issue, starts the clock on your internal SLAs, and establishes a single source of truth for the entire incident.
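The email-to-ticket step can be sketched with Python's standard library alone. This example does not call Jira or ServiceNow; it only shows how an incoming message might be turned into a ticket-shaped record. The field names, the four-hour triage SLA, and the sample message are all assumptions for illustration.

```python
from datetime import timedelta
from email import message_from_string
from email.utils import parsedate_to_datetime

# A made-up report as it might arrive in the security inbox.
RAW_EMAIL = """\
From: researcher@example.org
To: security@yourcompany.com
Subject: Auth bypass in ThermoSmart Model X
Date: Mon, 14 Sep 2026 08:30:00 +0000

Steps to reproduce are attached.
"""


def email_to_ticket(raw: str, triage_sla_hours: int = 4) -> dict:
    """Convert a raw email into a ticket dict with an internal triage deadline."""
    msg = message_from_string(raw)
    received = parsedate_to_datetime(msg["Date"])
    return {
        "summary": msg["Subject"],
        "reporter": msg["From"],
        "received_at": received.isoformat(),
        "triage_due": (received + timedelta(hours=triage_sla_hours)).isoformat(),
        "priority": "High",
        "description": msg.get_payload().strip(),
    }


ticket = email_to_ticket(RAW_EMAIL)
print(ticket["summary"], "| triage due:", ticket["triage_due"])
```

In a real deployment the resulting dict would be handed to your ticketing system's own integration; storing `received_at` from the message itself preserves the original timestamp for the evidence trail.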

This isn’t just about making your response more efficient; it’s about building a crucial evidence trail. You’ll need this documentation to prove your due diligence, a topic we cover in more detail in our guide on creating CRA technical documentation: https://goregulus.com/cra-documentation/technical-documentation/

Don’t forget, these processes need to be ready soon. The reporting rules kick in on September 11, 2026, with full CRA implementation required by December 11, 2027.

Mastering Vulnerability Documentation and Management

Solid documentation isn’t a bureaucratic chore. It’s your best defence during a market surveillance audit and a crucial tool for meeting the tight deadlines of the CRA’s reporting obligations under Article 14. Good records turn the frantic 24-hour reporting window from a mad dash into a manageable, well-documented process.

Without a robust documentation system, proving your due diligence is nearly impossible. When auditors ask how you handled a specific vulnerability, a vague “we think we fixed it” won’t cut it. You need a complete, timestamped audit trail that shows exactly what happened, when it happened, and why you made the decisions you did.

Structuring Your Technical Documentation for Audits

The CRA is specific about what your technical documentation needs to contain, laying it all out in Annex VII. A critical piece is a detailed account of your vulnerability handling procedures. This isn’t just a brief mention; it must be a thorough explanation of your end-to-end process.

As you build out your reporting process, it’s essential to follow established document management best practices. This ensures your records are consistent, accessible, and ready for an audit. Your technical file must explicitly include:

- A Published Vulnerability Disclosure Policy (VDP): This is your public-facing document that tells security researchers how to report vulnerabilities to you securely. It builds trust and creates a clear intake channel.

- Internal Vulnerability Handling Procedures: This is the detailed playbook for your PSIRT, covering everything from the initial triage of a report to the final deployment of a patch.

- The Software Bill of Materials (SBOM): This is a complete inventory of every single component in your product, including all the open-source libraries. An SBOM is non-negotiable for quickly figuring out which products are affected when a new component vulnerability is discovered.

To help you get organised, here is a checklist of the essential documentation you’ll need to have in place to satisfy the CRA’s requirements under Article 14.

Essential Documentation For Article 14 Compliance

| Document/Policy | Purpose | Key Elements To Include |
|---|---|---|
| Vulnerability Disclosure Policy (VDP) | Provides a public, secure channel for security researchers to report vulnerabilities. | Contact details (security@ email, web form), scope of a 'safe harbour' policy, what to report, expected response times. |
| Internal Vulnerability Handling Procedure | Defines the end-to-end internal process for managing vulnerabilities from receipt to resolution. | Roles and responsibilities (PSIRT), triage process, severity scoring criteria (e.g., CVSS), remediation timelines, internal/external communication plan. |
| Software Bill of Materials (SBOM) | Creates a complete inventory of all software components, libraries, and dependencies in a product. | Component name, version, supplier, licence information, unique identifiers (e.g., PURL, CPE). |
| Internal Vulnerability Database | Acts as a single source of truth and an audit trail for all identified vulnerabilities. | Unique vulnerability ID, reporter source, dates (reported, triaged, fixed), severity score, affected products/versions, remediation status, links to advisories. |
| Security Advisory Templates | Standardises communication to customers about fixed vulnerabilities and available updates. | Affected product(s), vulnerability description, severity, potential impact, fix/mitigation details, and where to get the update. |

Having these documents defined, maintained, and accessible is the key to turning a reactive, chaotic process into a structured and defensible compliance strategy.

Maintaining an Internal Vulnerability Database

At the very heart of your documentation strategy must be an internal vulnerability database. This is your single source of truth for every security issue ever reported or discovered in your products. For any IoT vendor or software manufacturer, this isn’t just a nice-to-have; it’s the bedrock of effective product security lifecycle management.

This database—whether it’s a dedicated platform or a well-configured Jira project—needs to track each vulnerability’s entire journey from start to finish.

Key Insight: Your vulnerability database tells the story of your security efforts. It shows regulators exactly how you identify, assess, and resolve threats, providing concrete proof that you are meeting your CRA reporting obligations under Article 14.

Let’s walk through a practical example. A security researcher reports a flaw in your smart lock’s firmware. Here’s how that vulnerability would move through your database:

- Initial Entry: The report is logged with a unique ID, a timestamp, and the reporter’s details. Its status is immediately set to “New.”

- Triage & Assessment: Your PSIRT gets to work and validates the report. They assign it a CVSS (Common Vulnerability Scoring System) score to quantify its severity. A flaw allowing a remote attacker to unlock the door would likely get a 9.8 (Critical).

- Remediation Plan: The ticket is assigned to the right engineering team. They outline a plan to fix the bug and estimate a timeline for developing, testing, and releasing a patch.

- Resolution: The patched firmware is released. The database entry is updated to “Resolved,” with direct links to the new firmware version and the security advisory you sent to customers.

This detailed, step-by-step record is precisely what makes the 24-hour reporting requirement achievable. When you learn an issue is being actively exploited, you aren’t starting from zero. You simply pull up the existing record, which already contains most of the information you need for your initial notification to ENISA. For a deeper dive into the whole process, our guide on CRA vulnerability handling breaks it down even further. By treating documentation as a strategic asset, you build both resilience and confidence in your compliance.
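The smart lock walkthrough above can be modelled as a record with a restricted set of status transitions and a timestamped history. This is a minimal sketch: "New" and "Resolved" come from the example, while the intermediate status names, the `VulnRecord` class, and its fields are assumptions rather than a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Allowed status moves. The intermediate names are illustrative assumptions.
ALLOWED_TRANSITIONS = {
    "New": {"Triaged"},
    "Triaged": {"In Remediation"},
    "In Remediation": {"Resolved"},
    "Resolved": set(),
}


@dataclass
class VulnRecord:
    vuln_id: str
    product: str
    reporter: str
    status: str = "New"
    cvss_score: Optional[float] = None
    history: list = field(default_factory=list)

    def transition(self, new_status: str, note: str,
                   when: Optional[datetime] = None) -> None:
        """Move to new_status and append a timestamped audit entry."""
        if new_status not in ALLOWED_TRANSITIONS[self.status]:
            raise ValueError(f"{self.status} -> {new_status} is not allowed")
        when = when or datetime.now(timezone.utc)
        self.history.append((when.isoformat(), self.status, new_status, note))
        self.status = new_status


record = VulnRecord("VULN-2026-001", "smart lock firmware", "external researcher")
record.transition("Triaged", "Validated by PSIRT")
record.cvss_score = 9.8
record.transition("In Remediation", "Assigned to firmware team")
record.transition("Resolved", "Patched firmware released, advisory sent")

for entry in record.history:
    print(entry)
```

Rejecting skipped steps (for example "New" straight to "Resolved") is a design choice that keeps the audit trail complete; a real system would likely also need paths for rejected or duplicate reports.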

Your Top Questions About Article 14 Answered

Even after you get a handle on the rules, the practical side of the Cyber Resilience Act can throw up some tricky questions. The CRA reporting obligations under Article 14, in particular, create new pressures and responsibilities. It’s no surprise that managers and engineers are worried about how this plays out in the real world.

Let’s tackle some of the most common questions we hear, breaking down the nuances to help you build confidence in your compliance plan.

What If a Vulnerability Is Discovered But Not Yet Exploited?

This is one of the most important distinctions you’ll need to make, and getting it right is fundamental. The 24-hour reporting clock under Article 14 does not start ticking the moment you find a new bug. For a vulnerability, the CRA ties the urgent reporting obligation to active exploitation, not to discovery.

This means you need credible evidence that attackers are actually using the flaw against systems in the wild. A theoretical weakness found by your internal security team or a bug reported by a researcher doesn’t automatically trigger a report to ENISA.

Key Insight: The threshold for official reporting is active exploitation, not just discovery. Until you have credible evidence of exploitation, your responsibility is to focus on your internal assessment, triage, and patching process.

For instance, let’s say your QA team uncovers a serious SQL injection flaw in your cloud-connected industrial controller during routine testing. It’s a severe vulnerability, but as far as you know, it’s not being exploited.

At this point, your duties are to:

- Assess the Risk: Use a framework like the Common Vulnerability Scoring System (CVSS) to figure out its severity. A flaw like this would almost certainly get a Critical score.

- Prioritise a Fix: Your engineering team needs to start working on a patch straight away.

- Monitor for Exploitation: Your security team should be actively hunting for any signs that this vulnerability is being used by attackers.
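For the risk assessment step, the qualitative rating can be derived from the numeric score. The sketch below follows the CVSS v3.x qualitative severity scale; the function name is hypothetical, and computing the base score itself from a vector string is out of scope here.

```python
def cvss_rating(score: float) -> str:
    """Map a CVSS v3.x base score (0.0 to 10.0) to its qualitative rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


for score in (3.9, 6.5, 8.8, 9.8):
    print(score, cvss_rating(score))
```

A rating on its own never starts the Article 14 clock. It only helps you prioritise the fix while you monitor for exploitation.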

Your own vulnerability management process takes priority here. It’s only if you later find evidence of active exploitation—like logs showing an attacker using that exact SQL injection to breach a customer’s network—that your CRA reporting obligations under Article 14 would kick in.

Does Reporting to ENISA Mean a Flaw Becomes Public Knowledge?

This is a huge concern for manufacturers. The fear is that reporting a vulnerability will immediately spark negative press, customer panic, and a hit to your reputation. Fortunately, the CRA was designed with confidentiality measures to prevent exactly that.

When you send a report to ENISA and the national CSIRTs, it isn’t broadcast to the world. The information is handled within a trusted network of cybersecurity authorities. Their primary goal is to protect the single market, not to name and shame manufacturers.

The entire process is built around the principles of Coordinated Vulnerability Disclosure (CVD). In simple terms, this means the authorities work with you to manage how and when information is released.

Let’s walk through a practical example. Imagine you manufacture a point-of-sale (POS) terminal and you report an actively exploited vulnerability that could leak transaction data.

- The initial report you send to ENISA is confidential.

- ENISA and the CSIRTs use this information to assess the risk across the entire market. They might, for example, quietly warn financial institutions to monitor for suspicious activity without ever naming your product.

- This buys you a critical window to develop and roll out a security patch.

- The vulnerability is typically made public only after a fix is available and you’re ready to inform your customers, often through a joint advisory.

This collaborative model protects the market from the immediate threat while giving you the time to fix the problem responsibly. It prevents a premature disclosure that would only help attackers.

Who Is Responsible for Reporting Flaws in Open-Source Software?

The use of open-source software (OSS) is everywhere, but it’s a common source of confusion when it comes to accountability. The CRA is unambiguous here: the ultimate responsibility for the security of the final product lies with the manufacturer.

If your product uses an open-source component with a vulnerability, you are responsible for it as if it were your own code. You can’t just point the finger at the open-source project and consider your job done.

This is where having a complete Software Bill of Materials (SBOM) becomes an absolute must. When a vulnerability like Log4Shell hits the headlines, your SBOM should allow you to instantly see if your products are affected.
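To illustrate that lookup, the sketch below searches a set of SBOMs for a named component at known-vulnerable versions. It assumes each SBOM is a dict with a `components` list carrying `name` and `version` keys, in the style of CycloneDX JSON; the product names are made up, and the version set is an illustrative subset, not the full Log4Shell affected range.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical product SBOMs, reduced to the fields this lookup needs.
SBOMS: Dict[str, dict] = {
    "SmartTV firmware 4.2": {"components": [
        {"name": "log4j-core", "version": "2.14.1"},
        {"name": "zlib", "version": "1.2.13"},
    ]},
    "SmartTV firmware 5.0": {"components": [
        {"name": "log4j-core", "version": "2.17.1"},
    ]},
}


def affected_products(sboms: Dict[str, dict], component: str,
                      vulnerable_versions: Set[str]) -> List[Tuple[str, str]]:
    """Return (product, version) pairs that ship a vulnerable component version."""
    hits = []
    for product, sbom in sboms.items():
        for comp in sbom.get("components", []):
            if comp.get("name") == component and comp.get("version") in vulnerable_versions:
                hits.append((product, comp["version"]))
    return hits


print(affected_products(SBOMS, "log4j-core", {"2.14.0", "2.14.1"}))
```

Exact-match version sets keep the example simple. In practice you would more likely match on version ranges or package URLs from a vulnerability feed.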

Here are the practical steps you have to take:

- Track and Monitor: Continuously watch all OSS components in your products for newly disclosed vulnerabilities.

- Report Upstream: If you discover a brand-new flaw in an OSS component, you have a duty to report it to the project’s maintainers so they can fix it for the entire community.

- Manage and Mitigate: You must assess how the vulnerability impacts your specific product. If it’s being actively exploited, you have to fulfil your own CRA reporting obligations under Article 14.

- Patch Your Product: You are responsible for integrating the fixed OSS component into your product and shipping an update to your customers.

For example, if your smart TV uses a vulnerable open-source media library and attackers are actively exploiting it, it is you—the TV manufacturer—who must submit the 24-hour report to ENISA. You also have to release a firmware update with the patched library. Simply waiting for the OSS project to act is not an option.

Navigating the complexities of the Cyber Resilience Act can be daunting, but you don’t have to do it alone. Regulus provides a unified software platform that simplifies every step of the CRA compliance process. From applicability assessments and requirements mapping to documentation templates and vulnerability management guidance, Regulus turns regulatory hurdles into a clear, actionable plan. Gain clarity and confidence on your path to EU market readiness by exploring our solution at https://goregulus.com.