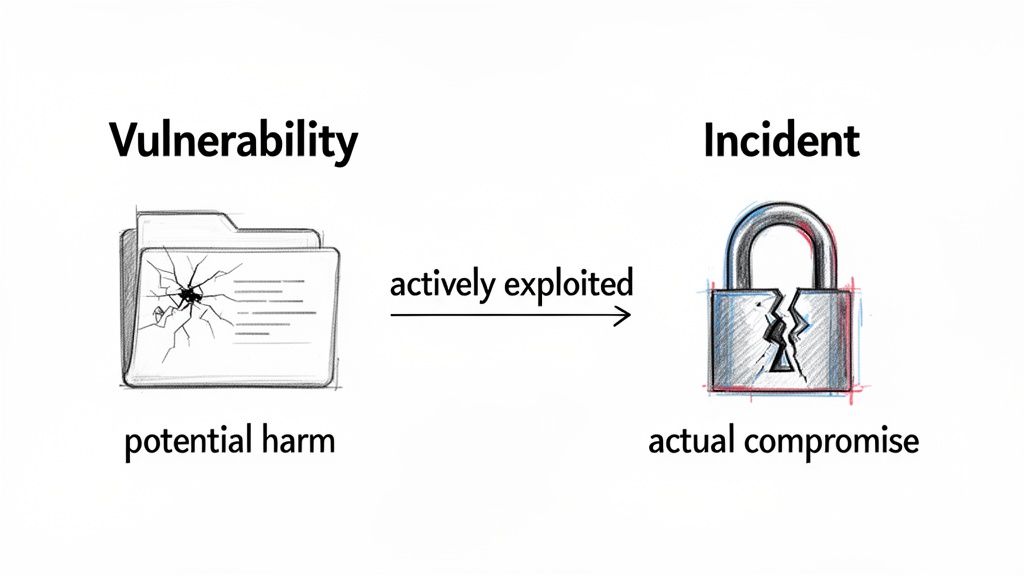

Under the Cyber Resilience Act (CRA), the core difference between a vulnerability and an incident boils down to potential versus actual harm. A vulnerability is a security flaw that could be exploited, representing a potential risk. An incident, on the other hand, is a security event that has actually compromised your product.

Decoding the CRA’s Definitions: Incident vs Vulnerability

The Cyber Resilience Act draws a precise, legally-binding line between a vulnerability and an incident. For any manufacturer of digital products, grasping this distinction isn’t just important—it’s the first and most critical step towards compliance.

To put it simply, a vulnerability is a weakness in your code or system. An incident is a successful breach that may or may not have used that weakness to get in. For example, finding a flaw in your smart lock’s software that could theoretically allow a bypass is a vulnerability. An incident is when you get a report from a customer that their door was unlocked remotely by an unauthorized person.

This distinction directly shapes your response and reporting obligations. The CRA mandates different actions depending on whether you’re dealing with a theoretical flaw or an active security failure. Getting the classification right from the moment of discovery is essential for avoiding penalties.

Key Distinctions and Triggers

A crucial sub-category the CRA introduces is the ‘actively exploited vulnerability’. This isn’t just any flaw; it’s a known weakness that attackers are confirmed to be using in the wild. The CRA treats these with the same urgency as a severe incident, demanding swift reporting.

This dual-reporting framework is designed to force cybersecurity transparency, which the European Commission found was a major gap. To close it, the CRA requires manufacturers to report actively exploited vulnerabilities to both their national CSIRT and ENISA within 24 hours. Severe incidents, defined as those with a negative impact on a product’s security, follow a similar tight deadline. You can explore more about these reporting duties in a detailed CRA overview on taylorwessing.com.

Let’s take a practical example. Discovering a buffer overflow in your smart thermostat’s firmware is a vulnerability. However, once you receive credible threat intelligence that a botnet is using that specific flaw to compromise thermostats, it becomes a reportable, actively exploited vulnerability. If that compromise then leads to a mass shutdown of devices, it escalates into a reportable incident.

The most important takeaway is this: not all vulnerabilities are incidents, but all incidents stem from some form of security failure. The CRA’s focus is on the active exploitation of a vulnerability and the actual impact of an incident.

To help your teams quickly differentiate between these critical concepts, the table below offers a clear, side-by-side comparison.

Quick Guide: Incident vs Vulnerability Under the CRA

This summary table contrasts the core definitions, primary triggers, and required initial actions for incidents and vulnerabilities as defined by the Cyber Resilience Act.

| Attribute | CRA Vulnerability | CRA Incident |
|---|---|---|
| Definition | A weakness in a product that can be exploited by a threat. Example: An SQL injection flaw in your web portal's login page. | An event that negatively impacts the security of a product. Example: An attacker uses the SQL injection flaw to steal customer data. |
| Primary Trigger | Discovery that a flaw is being actively exploited in the wild. | A security compromise with a demonstrable adverse effect. |
| Initial Action | Triage, assess for active exploitation, and prepare for reporting if exploited. | Contain the breach, assess the impact, and begin the reporting process. |

Using this clear distinction ensures your response is not only technically sound but also fully compliant with the CRA’s strict notification timelines and procedures.
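The comparison above can be turned into a small triage helper. What follows is only a minimal sketch in Python: the `Finding` fields and the `classify` function are hypothetical names invented for illustration, and the three yes/no inputs stand in for judgements your security and legal teams would still have to make.

```python
from dataclasses import dataclass
from enum import Enum


class Classification(Enum):
    VULNERABILITY = "vulnerability (fix without undue delay)"
    ACTIVELY_EXPLOITED = "actively exploited vulnerability (reportable)"
    SEVERE_INCIDENT = "severe incident (reportable)"


@dataclass
class Finding:
    # Hypothetical triage fields; adapt them to your own records.
    flaw_identified: bool          # a weakness in the product is known
    exploitation_confirmed: bool   # reliable evidence of exploitation in the wild
    severe_impact_confirmed: bool  # the product's security was actually compromised


def classify(finding: Finding) -> list[Classification]:
    """Return every label that applies; a single event can carry more than one."""
    labels = []
    if finding.severe_impact_confirmed:
        labels.append(Classification.SEVERE_INCIDENT)
    if finding.flaw_identified and finding.exploitation_confirmed:
        labels.append(Classification.ACTIVELY_EXPLOITED)
    elif finding.flaw_identified:
        labels.append(Classification.VULNERABILITY)
    return labels


# The smart thermostat example, stage by stage.
overflow_found = Finding(True, False, False)
botnet_confirmed = Finding(True, True, False)
mass_shutdown = Finding(True, True, True)
for stage in (overflow_found, botnet_confirmed, mass_shutdown):
    print([label.name for label in classify(stage)])
```

Returning a list rather than a single label is a deliberate choice here: the thermostat scenario ends with both an actively exploited vulnerability and a severe incident open at once.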

What Qualifies as a Reportable Vulnerability Under the CRA

The Cyber Resilience Act makes a crucial distinction that every manufacturer needs to understand: not every security flaw requires an immediate report. The regulation zeroes in on one specific type that demands urgent action—the actively exploited vulnerability.

This shifts the conversation from theoretical bugs to real-world threats. A reportable vulnerability isn’t just a potential weakness in your code; it’s a confirmed flaw that you know attackers are using in the wild. This is a game-changer. The 24-hour reporting clock doesn’t start the moment your team finds a bug. It starts the second you have knowledge that it’s being exploited.

Identifying Active Exploitation

So, what does “active exploitation” actually look like in practice? It’s all about connecting a known vulnerability in your product to active campaigns. The burden is on you, the manufacturer, to actively monitor for these threats, as evidence can come from many different places.

Practical examples of evidence that can point to an actively exploited vulnerability, once your team has confirmed it as reliable, include:

- Public Proof-of-Concept (PoC): An exploit script for a zero-day flaw in your product gets published online, and security researchers quickly confirm it works.

- Threat Intelligence Feeds: Your security provider shares indicators of compromise (IoCs)—like malicious IP addresses or file hashes—that directly match a flaw you’ve identified in your device’s firmware.

- Customer Reports: A customer reports strange activity, and your investigation confirms their device was breached using a specific, known vulnerability.

- Dark Web Chatter: You discover credible discussions on a dark web forum where attackers are trading a working exploit for your IoT camera’s remote access protocol.

Even a bug you discover internally through a penetration test or a bug bounty programme becomes reportable the instant you find evidence of its exploitation elsewhere. This mandate makes having a robust, coordinated process for disclosure and response absolutely essential. You can learn more about building these workflows in our guide on CRA vulnerability handling.

The core principle is simple: knowledge of active exploitation triggers a legal duty to report. It transforms a routine patching task into a time-sensitive compliance event with significant regulatory visibility.
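One way to make “knowledge of active exploitation” auditable is to log each piece of evidence with the time it reached you, then derive the clock from the first item your triage confirmed. The sketch below assumes a hypothetical `Evidence` record and an `early_warning_due` helper; neither comes from the CRA or any official tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Evidence:
    source: str                  # e.g. "threat-intel feed", "customer report"
    received_at: datetime        # when your organisation learned of it
    confirms_exploitation: bool  # True only once triage judges it reliable


def early_warning_due(evidence_log: list[Evidence]) -> Optional[datetime]:
    """Return the 24-hour deadline, or None if no exploitation is confirmed."""
    confirmed = [e.received_at for e in evidence_log if e.confirms_exploitation]
    if not confirmed:
        return None  # a known but unexploited flaw does not start this clock
    return min(confirmed) + timedelta(hours=24)


log = [
    Evidence("internal pentest", datetime(2027, 3, 1, 10, 0, tzinfo=timezone.utc), False),
    Evidence("threat-intel firm", datetime(2027, 3, 3, 14, 30, tzinfo=timezone.utc), True),
]
print(early_warning_due(log))
```

In this example the pentest finding alone leaves the deadline unset; the later threat intelligence report is what starts the clock.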

The High Stakes of Reporting

The financial penalties for failing to meet the CRA’s reporting requirements are substantial. Fines for serious non-compliance can reach as high as EUR 15 million or 2.5% of a company’s worldwide annual turnover, whichever is higher. For less severe infringements, the ceiling is still EUR 10 million or 2% of turnover, again whichever is higher.
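To see what these ceilings mean for a given company, a quick calculation helps. This sketch assumes the cap is the higher of the fixed amount and the turnover percentage, which is how the CRA’s penalty provisions are generally read; the function and tier names are made up for illustration, and percentages are held as basis points to keep the arithmetic in whole euros.

```python
# Tiers as (fixed cap in EUR, share of worldwide annual turnover in basis points).
SERIOUS = (15_000_000, 250)  # 2.5%
LESSER = (10_000_000, 200)   # 2%


def fine_ceiling(turnover_eur: int, tier: tuple[int, int]) -> int:
    """Maximum fine in EUR, assuming the higher of the two figures applies."""
    fixed_cap, basis_points = tier
    turnover_based = turnover_eur * basis_points // 10_000
    return max(fixed_cap, turnover_based)


# A manufacturer with EUR 1 billion turnover: 2.5% exceeds the fixed cap.
print(fine_ceiling(1_000_000_000, SERIOUS))
# A manufacturer with EUR 200 million turnover: the fixed cap is the ceiling.
print(fine_ceiling(200_000_000, SERIOUS))
```

These are upper bounds, not predictions; the actual fine in any case is for the authorities to set.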

These figures underscore just how seriously regulators are taking this. The ability to distinguish between a standard vulnerability and an actively exploited one is no longer just good practice—it’s a high-stakes requirement. You can find more details on these CRA penalties on cyberresilienceact.eu.

When an Event Becomes a Reportable CRA Incident

Not every security event triggers the CRA’s reporting obligations. It’s a critical distinction that many teams get wrong.

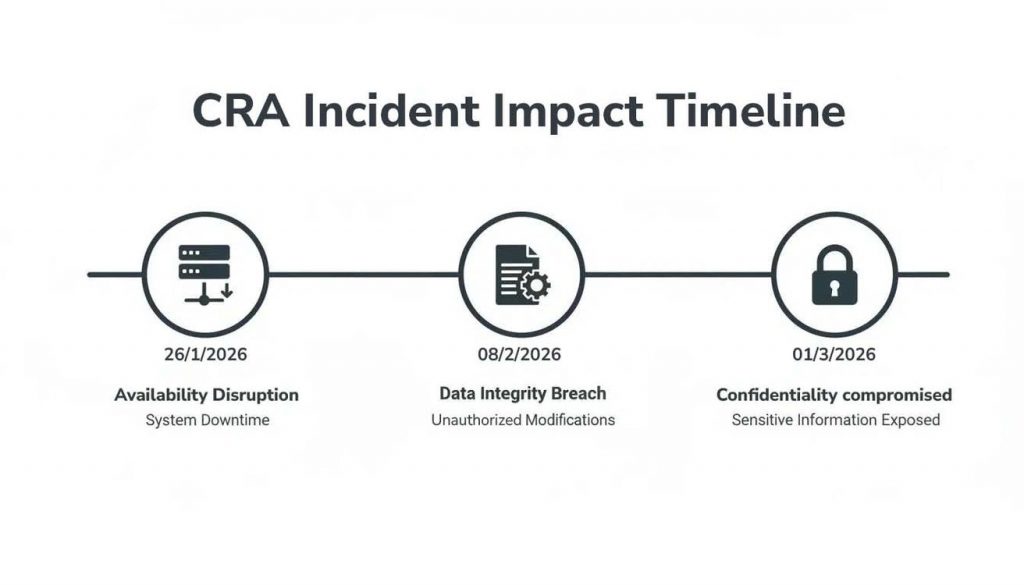

While an actively exploited vulnerability is all about an attacker’s actions, a reportable CRA incident is defined entirely by its impact. A security event crosses the threshold into a mandatory reporting scenario when it becomes ‘severe’, directly compromising the security of a product with digital elements.

The legislation points to adverse effects on the confidentiality, integrity, or availability (CIA) of data or functions. Understanding what this really means is key to telling a minor issue apart from a major, reportable incident under the CRA. The core question you must ask is: did the event negatively impact the product’s ability to protect its data and core functions?

Translating Impact into Real-World Scenarios

To get out of the legal jargon and into the real world, let’s map these concepts to situations you might actually face. Each of these examples would almost certainly trigger the CRA’s 24-hour incident reporting clock because they represent a severe and demonstrable impact.

- Impact on Availability: A successful DDoS attack targets a fleet of smart medical infusion pumps, taking them offline. This prevents hospitals from administering medication. The product is no longer available for its intended, critical function.

- Impact on Integrity: Malicious actors push an unauthorised firmware update to a line of connected vehicles. This update changes the braking system’s behaviour, making the cars unsafe. The integrity of the product’s core safety function has been compromised.

- Impact on Confidentiality: A data breach at a cloud service connected to a popular home security camera system exposes user login credentials and private video feeds. This is a severe breach of data confidentiality.

A critical insight here is that a severe incident can happen even without a vulnerability in your product. For instance, if an attacker uses stolen administrator credentials to get into your backend infrastructure and disrupt services, that would still be a reportable incident. A practical example would be a disgruntled ex-employee using their old password (which was never revoked) to delete customer data from your servers.

The Source of the Incident Matters Less Than the Impact

It is absolutely essential to realise that the origin of the incident is secondary to its effect. Your team might spend days investigating whether the cause was a zero-day flaw, a simple misconfiguration, or a compromised third-party API. However, the CRA reporting clock starts ticking the moment you become aware of the severe impact itself.

This intense focus on impact means manufacturers must establish clear internal criteria for what constitutes a ‘severe’ event. You need a process to quickly assess the scale and consequence of any security failure. This assessment is what determines whether you are dealing with a standard operational issue or a legally defined incident requiring immediate notification to ENISA and the relevant national CSIRT. To help with compliance, it is wise to familiarise yourself with resources like the national vulnerability database and other key regulatory tools.
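Internal criteria for “severe” are easier to apply under pressure when they are written down as explicit checks. The following is a sketch of one possible policy, not a legal test: the `ImpactAssessment` fields and the 10% availability threshold are assumptions chosen purely for illustration, and your own legal team would need to set the real values.

```python
from dataclasses import dataclass

# Hypothetical internal policy value; the CRA does not define this number.
AVAILABILITY_SHARE_THRESHOLD = 0.10


@dataclass
class ImpactAssessment:
    devices_affected: int
    devices_deployed: int
    core_function_unavailable: bool  # availability
    unauthorised_modification: bool  # integrity
    data_exposed: bool               # confidentiality


def severe_impact_reasons(a: ImpactAssessment) -> list[str]:
    """List the CIA dimensions that meet this internal 'severe' policy."""
    reasons = []
    share = a.devices_affected / a.devices_deployed if a.devices_deployed else 0.0
    if a.core_function_unavailable and share >= AVAILABILITY_SHARE_THRESHOLD:
        reasons.append("availability")
    if a.unauthorised_modification:
        reasons.append("integrity")
    if a.data_exposed:
        reasons.append("confidentiality")
    return reasons


outage = ImpactAssessment(5_000, 10_000, True, False, False)
print(severe_impact_reasons(outage))  # an empty list would mean "not severe"
```

Note that the function never asks what caused the event, which mirrors the point above: the assessment is driven by impact, not origin.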

Mastering CRA Reporting Timelines and Procedures

The Cyber Resilience Act imposes strict, unforgiving reporting deadlines. For manufacturers, this means operational readiness isn’t optional—it’s mandatory. The distinction between an incident and a vulnerability is critical here, as each triggers a similar but distinct reporting timeline that you must be prepared to follow.

When your organisation becomes aware of either a severe incident or an actively exploited vulnerability, the clock starts. These tight timeframes are no accident; they reflect the EU’s view that the window to contain modern cyber threats is shrinking, demanding immediate and decisive action.

Critical Reporting Deadlines

The CRA framework is built on clear deadlines that dictate specific actions at set intervals. If you become aware of an actively exploited vulnerability, you must issue an early warning to ENISA and the relevant national CSIRT within 24 hours. This is followed by a more detailed notification within 72 hours and a final report no later than 14 days after a corrective or mitigating measure becomes available.

Severe incidents have their own track. An early warning is also due within 24 hours, with an incident notification to follow within 72 hours. However, the final, comprehensive report for a severe incident isn’t required until one month after you submit that 72-hour notification. You can learn more about how these EU cyber policies impact reporting on centerforcybersecuritypolicy.org.
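Because the two tracks share the first two deadlines but differ on the final report, it can help to compute them rather than count by hand. This is a minimal sketch with hypothetical function names. It assumes our reading of Article 14: the vulnerability final report is counted as 14 days from when a corrective or mitigating measure is available, and the incident final report as one calendar month from the 72-hour notification. Check both anchors against the regulation text before relying on them.

```python
from calendar import monthrange
from datetime import datetime, timedelta, timezone
from typing import Optional


def add_one_month(ts: datetime) -> datetime:
    """Same day next month, clamped to the month's last day (31 Jan -> 28/29 Feb)."""
    year = ts.year + ts.month // 12
    month = ts.month % 12 + 1
    day = min(ts.day, monthrange(year, month)[1])
    return ts.replace(year=year, month=month, day=day)


def vulnerability_deadlines(aware_at: datetime,
                            measure_available_at: Optional[datetime] = None) -> dict:
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "notification": aware_at + timedelta(hours=72),
    }
    if measure_available_at is not None:  # assumed anchor for the final report
        deadlines["final_report"] = measure_available_at + timedelta(days=14)
    return deadlines


def incident_deadlines(aware_at: datetime,
                       notification_sent_at: Optional[datetime] = None) -> dict:
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "notification": aware_at + timedelta(hours=72),
    }
    if notification_sent_at is not None:  # assumed anchor for the final report
        deadlines["final_report"] = add_one_month(notification_sent_at)
    return deadlines


aware = datetime(2027, 1, 31, 9, 0, tzinfo=timezone.utc)
print(vulnerability_deadlines(aware))
print(incident_deadlines(aware, notification_sent_at=aware))
```

Using timezone-aware timestamps throughout avoids ambiguity when teams in different countries are working against the same clock.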

The image below shows the kinds of impacts that trigger the severe incident timeline, such as disruptions to availability, integrity, or confidentiality.

This makes it clear: any event that causes a significant disruption to your product’s security functions kicks off a mandatory reporting sequence.

What to Include at Each Stage

Meeting the deadlines is one thing, but knowing what information ENISA and the national CSIRT expect is another. Your reports need to evolve at each stage.

- 24-Hour Alert: Think of this as a quick “heads-up.” It should identify the affected product and the basic nature of the threat (e.g., “actively exploited RCE vulnerability in Product X” or “severe service availability incident affecting Platform Y”). A practical example: “We have confirmed an actively exploited Remote Code Execution (RCE) vulnerability, CVE-2026-12345, in the firmware of our ‘SmartHome Hub 3.0’ device.”

- 72-Hour Update: Now you need to add more substance. Provide your initial findings on the root cause, assess the potential impact, and detail the mitigation steps you’ve already taken or have planned. For instance: “The root cause is a buffer overflow in the device’s web server. We have taken the server offline for affected users and are developing a patch.”

- Final Report: This is your full post-mortem. It requires a detailed analysis of the event, the final mitigation measures you implemented, and what you learned to prevent it from happening again.

Establishing a “war room” protocol is essential for success. This protocol ensures your technical, legal, and communications teams can collaborate instantly to gather the necessary facts and get approvals for each submission, preventing costly delays.

To make this manageable under pressure, organisations should prepare pre-approved templates for each reporting stage. Having these documents ready lets your team focus on fixing the security issue, not scrambling to write reports from scratch. For more detail, read about CRA reporting obligations under Article 14.
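A pre-approved template can be as simple as a string with named placeholders that refuses to render if anything is missing. The sketch below uses Python’s standard `string.Template`; the wording and field names are hypothetical and do not reflect any official ENISA or CSIRT submission format.

```python
from string import Template

# Hypothetical 24-hour alert wording; replace with text your legal team has approved.
EARLY_WARNING = Template(
    "We have confirmed ${event_type} in ${product}. "
    "Identifier: ${identifier}. Became aware at: ${aware_at} UTC."
)


def render_early_warning(**fields: str) -> str:
    """Fill the template; substitute() raises KeyError if a field is missing."""
    return EARLY_WARNING.substitute(**fields)


alert = render_early_warning(
    event_type="an actively exploited Remote Code Execution (RCE) vulnerability",
    product="the firmware of our 'SmartHome Hub 3.0' device",
    identifier="CVE-2026-12345",
    aware_at="2027-03-03 14:30",
)
print(alert)
```

Failing loudly on a missing field is the useful property here: an incomplete alert is caught before submission rather than after.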

Building Your Incident and Vulnerability Response Workflows

To put the Cyber Resilience Act into practice, you need two separate but interconnected workflows. The whole game is about knowing what triggers each one. Your vulnerability workflow is for potential threats, while the incident workflow kicks in only when a security failure has already happened.

Getting this separation right is what makes the CRA’s incident vs vulnerability distinction workable in your day-to-day operations.

For a strong foundation, it’s worth understanding the fundamentals of Crafting Your Incident Response Plan. This sets the stage for the specific, high-stakes demands of the CRA.

The Vulnerability Management Workflow

Your vulnerability management process can’t be a one-time project; it has to be a continuous cycle. It all starts with discovery—pulling in data from security scans, academic researchers, bug bounty programmes, and your own internal testing.

But the CRA slots in a critical new step. You must have a formal process for continuous monitoring to check if any vulnerability you’ve found is being actively exploited in the wild. This is the specific trigger that starts the CRA’s notorious 24-hour reporting clock for a vulnerability.

A practical vulnerability workflow checklist should look something like this:

- Discovery: A new vulnerability is identified, no matter the source. Example: A researcher reports a cross-site scripting (XSS) flaw in your product’s web dashboard via your bug bounty program.

- Triage: You assess its severity (using CVSS, for example) and the potential impact on your business and customers. Example: You rate the XSS flaw as ‘High’ severity (CVSS 7.5).

- Monitor for Exploitation: Your team actively scours threat intelligence feeds and public sources, looking for any sign of active exploitation.

- Report (if exploited): If you confirm active exploitation, you immediately trigger the 24-hour reporting process to ENISA and the relevant CSIRT.

- Remediate: You develop, test, and deploy a patch to fix the flaw without undue delay—and you do this regardless of its exploitation status.
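The checklist above can be sketched as a small function that tells an engineer what is still outstanding for a given flaw. The `VulnRecord` type and `next_steps` helper are hypothetical names; the severity bands follow the standard CVSS v3 qualitative scale (Low below 4.0, Medium below 7.0, High below 9.0, Critical from 9.0).

```python
from dataclasses import dataclass


@dataclass
class VulnRecord:
    vuln_id: str
    cvss: float
    exploited: bool = False  # set by your exploitation monitoring
    patched: bool = False


def severity_band(cvss: float) -> str:
    """Map a CVSS v3 base score to its qualitative rating."""
    if cvss >= 9.0:
        return "Critical"
    if cvss >= 7.0:
        return "High"
    if cvss >= 4.0:
        return "Medium"
    if cvss > 0.0:
        return "Low"
    return "None"


def next_steps(v: VulnRecord) -> list[str]:
    steps = []
    if v.exploited:
        steps.append("report: start the 24-hour early warning")
    if not v.patched:
        # Remediation is due regardless of exploitation status.
        steps.append("remediate: develop, test and deploy a patch")
        if not v.exploited:
            steps.append("monitor: keep checking for active exploitation")
    return steps


xss = VulnRecord("XSS-dashboard", cvss=7.5)
print(severity_band(xss.cvss), next_steps(xss))
```

Flipping `exploited` to `True` on the same record puts the reporting step first while remediation stays on the list.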

The Incident Response Workflow

The incident response workflow, on the other hand, is purely reactive. It’s triggered by a security failure that causes a severe impact. While your technical teams are scrambling to contain, eradicate, and recover, the CRA requires a parallel compliance track that simply cannot be an afterthought.

From the very moment a severe incident is suspected, your compliance activities have to begin. Your legal and communications teams are no longer secondary responders; they are now part of the immediate first-step protocol to meet CRA obligations.

An effective incident response checklist under the CRA must bake in compliance from the very beginning:

- Detection & Initial Analysis: An alert is triggered, maybe a widespread service outage. Your first job is to confirm it’s a security incident. Example: Your monitoring system alerts that 50% of your European smart plugs are offline.

- Immediate Notification & Containment:

  - Notify the legal team to start a CRA impact assessment.

  - Engage your pre-assigned communications team.

  - Start technical containment to stop the breach from spreading. Example: You block the attacking IP addresses at the firewall.

- Reporting: Based on the legal team’s assessment of a ‘severe incident’, you submit the 24-hour alert to ENISA and the relevant CSIRT.

- Eradication & Recovery: Your technical team removes the threat from your systems and gets normal operations back online.

- Follow-up Reporting: You submit the required 72-hour and final reports to close the loop.

Building these two distinct workflows ensures you can manage both potential and actual threats effectively, keeping you on the right side of the regulation. To learn more about your specific duties, you can read our detailed article on CRA manufacturer obligations.

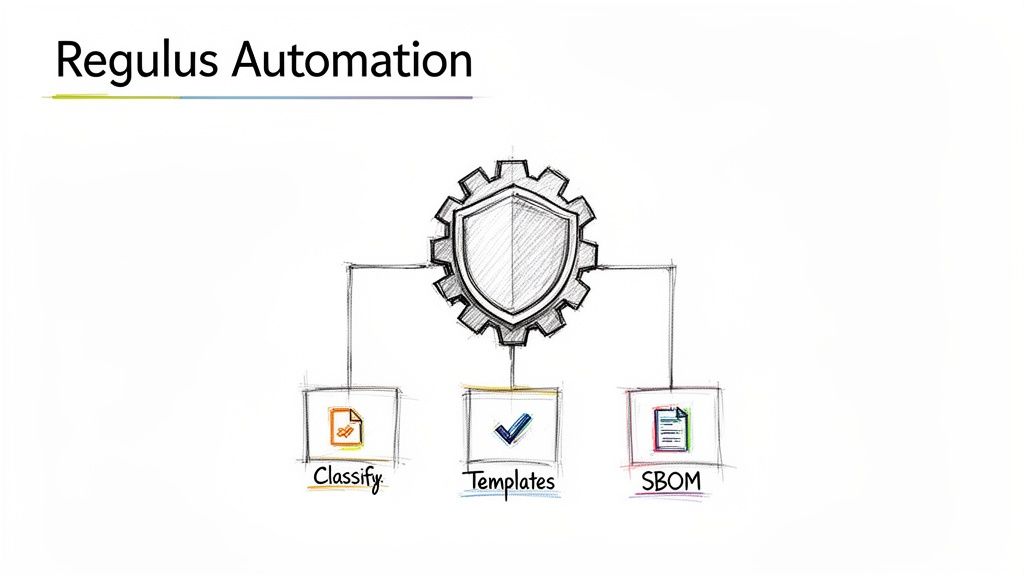

How Regulus Automates Your CRA Reporting and Compliance

Meeting the Cyber Resilience Act’s demanding timelines and nuanced definitions is a major challenge for any manufacturer. Trying to manually track obligations, classify events, and prepare reports under pressure is a recipe for error. This is where a specialised platform like Regulus comes in, turning compliance from a frantic, manual burden into an automated, auditable process.

The platform has built-in guidance to help your teams correctly classify any finding. It removes the ambiguity, making sure you can confidently tell the difference between a standard bug, a reportable ‘actively exploited vulnerability’, or a ‘severe incident’. This is a critical part of operationalising the CRA incident vs vulnerability definition within your security programme.

Accelerated Reporting and Clear Roadmaps

To hit the aggressive reporting deadlines, Regulus provides pre-built templates for the 24-hour, 72-hour, and final submissions. These templates are already structured with the required fields for ENISA and national CSIRTs, which dramatically cuts down the time your team spends on documentation. Having well-defined Incident Management Procedures is essential, and our templates help structure that response when it matters most.

For instance, the moment an actively exploited vulnerability is confirmed, your team can instantly generate the 24-hour alert. The template guides them to input the essential details—like the product affected and the nature of the flaw—without having to dig through dense regulation text. This ensures a fast, compliant initial response every single time.

Regulus maps your product’s classification directly to its specific post-market surveillance duties. This provides your team with a clear, actionable roadmap for monitoring, detection, and reporting that aligns precisely with your legal obligations.

This automated mapping gives you a clear path to compliance. If your smart home device is classified as ‘Important’, the platform automatically outlines the heightened monitoring and documentation duties required. Instead of deciphering legal jargon, your team gets a clear, actionable checklist.

This transforms complex regulatory requirements into a straightforward, manageable workflow. It’s a structured approach that moves your organisation beyond reactive compliance and towards genuine security by design.

Frequently Asked Questions About CRA Compliance

Once you get past the high-level requirements of the Cyber Resilience Act, the real, practical questions start to surface. Here are a few common scenarios we see and how to handle them correctly to maintain compliance.

When Does the 24-Hour Clock Start?

Let’s clear up a common point of confusion: the 24-hour reporting clock for vulnerabilities. Does it start ticking the moment your internal team discovers a new flaw?

No, it doesn’t. The CRA’s urgent reporting duty is tied specifically to vulnerabilities that you know are being ‘actively exploited’ out in the wild.

Practical Example: Your penetration testing team finds a critical vulnerability on Monday. You begin work on a patch. On Wednesday, you get a report from a threat intelligence firm that an attacker group is now using that exact vulnerability to target companies. The 24-hour clock starts on Wednesday, the moment you became aware of active exploitation.

That doesn’t mean you can just sit on it, though. You are still obligated under the CRA to fix all identified vulnerabilities without ‘undue delay.’ Your internal triage process should prioritise the fix based on its potential severity, but that urgent reporting clock only starts with active exploitation.

Can a Single Event Be Both a Vulnerability and an Incident?

Yes, and your response workflows absolutely must account for this overlap. A single event can easily trigger both reporting requirements.

Imagine a flaw in your product is discovered to be ‘actively exploited’. That immediately starts the clock on the vulnerability reporting track. But if that same exploitation results in a ‘severe incident’—like a wide-scale service outage or a major data breach—it also kicks off the separate incident reporting process. You’ll then be managing both tracks in parallel, each with its own deadlines and reporting details.

This overlap follows directly from how the CRA separates vulnerabilities from incidents. The discovery of active exploitation starts one clock; the resulting severe impact starts another. Your teams have to be ready to run both processes at the same time.
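To make the two-clock idea concrete, here is a minimal sketch that tracks each reporting track from its own awareness time. The `open_tracks` function is a hypothetical helper, and it only computes the first 24-hour deadline for each track.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def open_tracks(exploitation_known_at: Optional[datetime],
                severe_impact_known_at: Optional[datetime]) -> dict:
    """Return the 24-hour deadline for each reporting track that has started."""
    tracks = {}
    if exploitation_known_at is not None:
        tracks["vulnerability"] = exploitation_known_at + timedelta(hours=24)
    if severe_impact_known_at is not None:
        tracks["incident"] = severe_impact_known_at + timedelta(hours=24)
    return tracks


# Exploitation is confirmed first; the outage it causes is confirmed later.
exploit_seen = datetime(2027, 3, 3, 14, 30, tzinfo=timezone.utc)
outage_seen = datetime(2027, 3, 4, 8, 0, tzinfo=timezone.utc)
for track, due in open_tracks(exploit_seen, outage_seen).items():
    print(track, "early warning due", due.isoformat())
```

Keeping the two timestamps separate matters because the deadlines will rarely line up, even when both stem from the same flaw.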

Who Reports on Open-Source Flaws?

You do. The CRA is crystal clear: the responsibility lies with the manufacturer placing the product on the EU market.

If a third-party or open-source library buried in your product has an actively exploited vulnerability, you are the one legally obligated to report it to ENISA. This is exactly why maintaining a complete Software Bill of Materials (SBOM) and continuously monitoring your dependencies has become a non-negotiable part of CRA compliance.

Practical Example: Your connected doorbell uses an open-source library for video streaming. A critical, actively exploited vulnerability (like Log4Shell) is announced in that library. Even though you didn’t write the vulnerable code, you are the manufacturer of the doorbell. Therefore, you are responsible for reporting the exploited vulnerability to ENISA and issuing a patch for your product.
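Dependency monitoring of this kind comes down to matching your SBOM against advisories flagged as actively exploited. The sketch below uses plain dictionaries with made-up component and advisory names; real SBOMs normally follow a standard format such as CycloneDX or SPDX, and real version strings are often messier than the simple dotted numbers assumed here.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Parse a simple dotted numeric version such as '2.3.1' (an assumption)."""
    return tuple(int(part) for part in version.split("."))


def report_candidates(sbom: list[dict], advisories: list[dict]) -> list[tuple]:
    """Find shipped components hit by an advisory marked as actively exploited."""
    hits = []
    for component in sbom:
        for advisory in advisories:
            if (
                advisory["exploited"]
                and advisory["name"] == component["name"]
                and parse_version(component["version"]) < parse_version(advisory["fixed_in"])
            ):
                hits.append((component["name"], component["version"], advisory["id"]))
    return hits


# Hypothetical doorbell SBOM and advisory feed.
doorbell_sbom = [
    {"name": "example-video-lib", "version": "2.3.1"},
    {"name": "example-tls-lib", "version": "1.8.0"},
]
advisory_feed = [
    {"id": "EXAMPLE-ADV-1", "name": "example-video-lib", "fixed_in": "2.4.0", "exploited": True},
    {"id": "EXAMPLE-ADV-2", "name": "example-tls-lib", "fixed_in": "1.9.0", "exploited": False},
]
print(report_candidates(doorbell_sbom, advisory_feed))
```

Only the video library shows up as a reporting candidate; the TLS advisory still needs a fix, but without confirmed exploitation it stays on the ordinary remediation track.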

Navigating these complex requirements demands clarity and automation. Regulus provides a step-by-step roadmap to prepare for the CRA, with built-in guidance, product classification, and reporting templates to ensure your teams are always ready. Gain confidence in your compliance strategy by visiting https://goregulus.com.