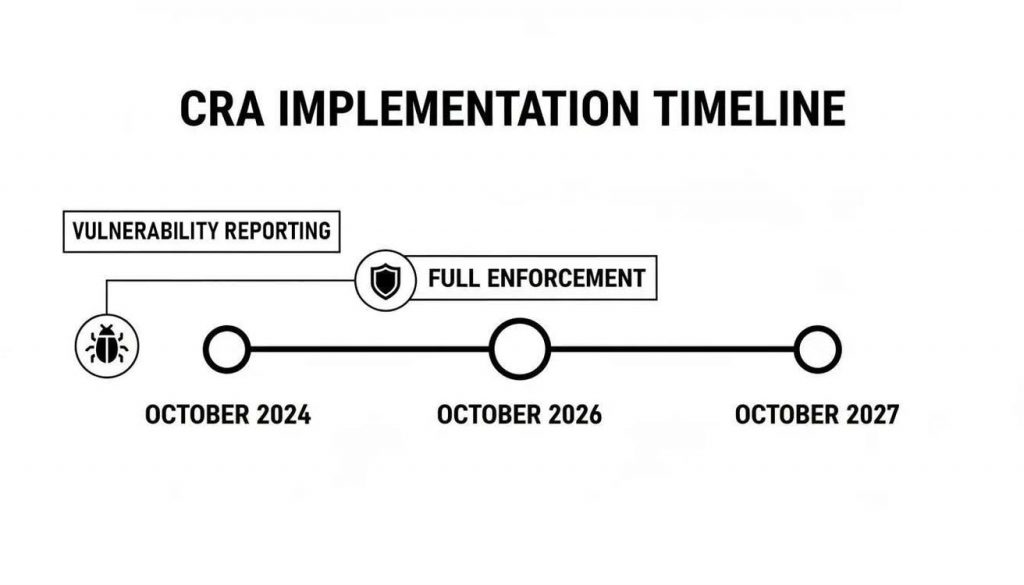

The European Commission’s Cyber Resilience Act (CRA) has moved from theory to reality for manufacturers. With the official implementation guidance now published, there’s a phased timeline mapping out the path to compliance. Key obligations, like vulnerability reporting, kick in from September 2026, with full enforcement landing in December 2027.

Decoding the Official CRA Implementation Timeline

Now that the European Commission’s Cyber Resilience Act is in force, the first job for any manufacturer, software developer, or IoT vendor is to get to grips with the implementation timeline. These aren’t just dates on a calendar; they are hard milestones that will determine whether your products can be sold in the EU. If you fail to get ready, you risk being shut out of one of the world’s biggest markets.

The good news is that the timeline is designed to give you a window to adapt. It’s not a sudden cliff edge but a progressive rollout of obligations, allowing you to build a solid compliance framework without having to tear up your existing product development and operational workflows.

Key Dates and Their Impact

The period between now and late 2027 is your preparation window. The European Commission has laid out the CRA implementation timeline to give manufacturers and IoT vendors a reasonable runway before full enforcement. The most important deadlines are packed into 2026 and 2027, leading up to the full application of the Act on 11 December 2027. This 36-month grace period from its entry into force gives your organisation the adjustment time it needs to run applicability checks, map requirements, and build out your technical files.

The timeline below cuts through the noise and shows the two milestones that should be anchoring your roadmap right now: vulnerability reporting and full enforcement.

Full enforcement in late 2027 may seem a way off, but your first compliance actions around vulnerability management need to be locked in much sooner.

Key CRA Compliance Milestones 2026-2027

The phased timeline sets clear expectations for manufacturers. This table summarises the critical deadlines you need to factor into your internal project plans between now and 2027.

| Milestone | Deadline | Key Action Required for Manufacturers |
|---|---|---|
| Vulnerability Reporting | 11 September 2026 | Establish and document a formal process for receiving and triaging vulnerabilities, and for reporting any actively exploited vulnerability to ENISA within 24 hours of becoming aware of it. |
| Full Enforcement | 11 December 2027 | All in-scope products placed on the market must be fully compliant with all CRA requirements, including conformity assessment and CE marking. |
| Support Period | Ongoing from 2027 | Provide security updates for the product's expected period of use: at least five years, unless the product is expected to be in use for a shorter time. |

These dates aren’t suggestions—they are firm deadlines. Your roadmap must show a credible plan for meeting each one.

Turning Deadlines into Action

The real work is translating these legal dates into practical business actions. For instance, the mandatory vulnerability reporting requirement means your security and product teams need a defined process for receiving, triaging, and reporting security issues to the right authorities well before the deadline hits. A practical example would be a company that manufactures smart locks. Before the reporting obligations begin in September 2026, they must have a public-facing security contact point, an internal system to assess bug reports (e.g., using a CVSS framework), and a clear protocol for notifying ENISA within 24 hours of becoming aware that a vulnerability is actively being exploited.
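To make the 24-hour window concrete, here is a minimal Python sketch of a deadline helper. The function names are hypothetical and not part of any official tooling; the only figure taken from the text above is the 24-hour notification window, counted from the moment your team becomes aware of active exploitation.

```python
from datetime import datetime, timedelta, timezone

# The 24-hour window comes from the article; everything else is illustrative.
EARLY_WARNING_WINDOW = timedelta(hours=24)


def early_warning_deadline(aware_at: datetime) -> datetime:
    """Return the latest moment the initial notification can be submitted."""
    if aware_at.tzinfo is None:
        # Naive timestamps make cross-team deadlines ambiguous, so reject them.
        raise ValueError("aware_at must be timezone-aware")
    return aware_at + EARLY_WARNING_WINDOW


def is_overdue(aware_at: datetime, now: datetime) -> bool:
    """True once the notification window has passed."""
    return now > early_warning_deadline(aware_at)


aware = datetime(2026, 9, 14, 9, 30, tzinfo=timezone.utc)
deadline = early_warning_deadline(aware)
```

Storing the "became aware" timestamp in UTC at triage time is a deliberate choice here: it gives every team the same reference point when the clock is already running.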

The CRA’s phased rollout is a strategic opportunity. Use this period to methodically align your engineering, security, and legal teams, turning compliance from a burdensome cost centre into a competitive advantage built on trust and security.

As you build out your CRA implementation plan, it’s also smart to look at how it intersects with other EU regulations. You’ll often find overlaps with data protection, and a practical AI GDPR compliance guide can provide useful context for making sure your product ticks all the necessary legal boxes.

By mapping these dates to your internal project plans, you can build a manageable path forward. For a more detailed plan, check out our guide on how to build a complete Cyber Resilience Act compliance roadmap. This proactive approach ensures you’re not scrambling to meet deadlines but are instead building a foundation for sustained compliance.

Does the CRA Apply to Your Product Portfolio?

Before your teams spend a single euro on compliance, the first move is to figure out if the Cyber Resilience Act even touches your products. The European Commission’s guidance all comes back to one core concept: “products with digital elements” or PDEs. It’s a deliberately broad term, but a quick, systematic check will tell you where you stand.

A PDE is any software or hardware product—and its remote data processing solutions—that connects directly or indirectly to another device or network. This definition casts a wide net, catching far more than just obvious smart devices. It’s time to move past assumptions and take a hard look at your entire portfolio.

Defining Products with Digital Elements

To make sense of the EU’s guidance, you need to focus on how your products handle data. The key is the “data connection” requirement. This is what separates a simple electrical kettle from a smart kettle that exchanges digitally encoded information with a network or app.

Let’s ground this in some real-world scenarios:

- Smart Thermostat: This is the textbook case. It’s a piece of hardware running firmware that connects to a Wi-Fi network. It takes commands from a mobile app and sends data to a cloud server. Unambiguously a PDE.

- Connected Industrial Sensor: A sensor on a factory floor that monitors temperature and sends that data over a local network to an industrial control system (ICS) is also a PDE. Both the hardware and the software enabling that connection are in scope.

- Commercial Software Library: Here’s where it gets less obvious. If you sell a software development kit (SDK) for processing images that developers integrate into their commercial mobile apps, your SDK is a PDE. Because it’s intended for integration into connected products, it falls under the CRA.

The CRA’s reach isn’t just about finished goods. It extends to the very components and software that make them work. If your product is built to be integrated into another product with digital connectivity, it almost certainly falls within the scope.

This means scrutinising not just what your product does, but what it enables other products to do. For a deeper analysis of the edge cases, our detailed guide on Cyber Resilience Act applicability offers more specific examples.
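A first-pass portfolio screen can be as simple as a couple of yes/no questions per product. The sketch below is a rough illustration in Python, assuming just the two criteria discussed above (a data connection, or intended integration into a connected product). The class and field names are hypothetical, and a real scoping decision still needs legal review.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    # Connects directly or indirectly to another device or network.
    has_data_connection: bool
    # Sold as a component intended for integration into connected products.
    integrated_into_connected_products: bool = False


def is_likely_pde(product: Product) -> bool:
    """Rough first-pass screen; not a substitute for a formal applicability check."""
    return product.has_data_connection or product.integrated_into_connected_products


portfolio = [
    Product("Electric kettle", has_data_connection=False),
    Product("Smart thermostat", has_data_connection=True),
    Product("Image processing SDK", has_data_connection=False,
            integrated_into_connected_products=True),
]
in_scope = [p.name for p in portfolio if is_likely_pde(p)]
```

Anything that lands in `in_scope` is a candidate for the deeper applicability analysis, not a final verdict.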

Navigating Common Grey Areas

The official guidance also helps clear up some common points of confusion, especially around software and older products. Getting these distinctions right is critical for scoping your compliance project accurately from day one.

One of the most debated topics is free and open-source software (FOSS). The CRA is quite clear here: if FOSS is supplied on a non-commercial basis, it’s generally out of scope. For example, a developer maintaining a small open-source logging library in their free time is not covered. However, the moment a company integrates that same open-source component into a commercial product they sell, they, as the manufacturer, become responsible for its security and CRA compliance.

Another frequent question is about legacy products. The rule seems simple: products placed on the EU market before 11 December 2027 are exempt. But there’s a massive catch—the “substantial modification” clause.

So, what counts as a substantial modification?

- Not a Substantial Modification: Releasing a routine security patch to fix a known vulnerability in a smart TV. This is just maintenance.

- A Substantial Modification: Pushing a major software update to that same smart TV that adds new streaming service apps and voice assistant integration. This introduces new functionalities and potential threat vectors, changing the product’s original risk profile.

If a legacy product gets a “substantial modification” after the deadline, it’s instantly treated as a new product. That means it must fully comply with the CRA. Engineering and product teams therefore need to meticulously document every change and assess its security impact. Otherwise, you could accidentally trigger a full-blown and expensive compliance obligation.

How to Classify Your Product’s Risk Level

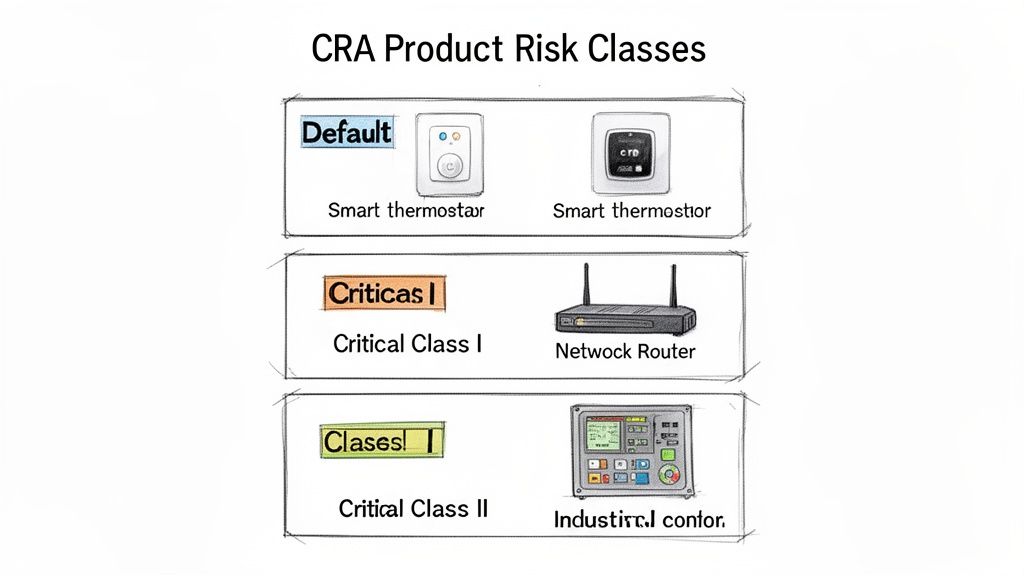

Once you’ve confirmed the Cyber Resilience Act applies to your products, the next job is classifying their risk level. This isn’t just a box-ticking exercise; this decision shapes your entire conformity assessment journey, its complexity, and ultimately, its cost. The CRA implementation guidance from the European Commission lays out three main categories, and getting this classification right is absolutely fundamental.

Get it wrong, and you’re looking at two expensive mistakes. Under-classify, and you create a massive compliance gap, risking market withdrawal and serious penalties. Over-classify, and you saddle your team with unnecessary costs and administrative burdens, like paying for a Notified Body assessment when a self-assessment would have been perfectly fine.

The Default Risk Category

The vast majority of products with digital elements will land in the default category. This is the baseline for products whose failure isn’t expected to cause significant systemic disruption or widespread harm. For these products, the conformity assessment path is the most straightforward.

Think of your typical consumer smart home device—a connected coffee machine, smart lighting, or a smart toy. While a security bug is certainly an issue that needs fixing, it’s unlikely to bring down critical infrastructure or endanger public safety. A bug in a smart speaker might allow someone to play music without permission, which is inconvenient but not catastrophic.

For these default products, manufacturers can perform a self-assessment. This means you internally verify and document that your product meets all the essential requirements in Annex I of the CRA. You get to skip the cost and overhead of involving an external third-party certification body.

Critical Products Class I and Class II

This is where the real complexity starts. Annex III of the CRA lists specific product categories that face stricter conformity assessment and sorts them into Class I or Class II. (The adopted Regulation labels these “important” products and reserves “critical” for a separate list in Annex IV.) The decision comes down to the product’s core function and its potential impact if it were ever compromised.

A key takeaway from the official guidance is that classification hinges on the product’s “core functionality,” not the risk level of its individual components. A default-risk product that contains a critical component is still treated as a default-risk product.

Class I Critical Products are those performing functions important to cybersecurity, but they aren’t at the very highest level of criticality. For these, your compliance path gets more involved. We have a complete guide on how to conduct a CRA risk assessment that breaks down the specific steps needed here.

Here are a few examples of products that usually fall under Class I:

- Network Equipment: This covers products like routers, modems, and network switches that are central to network management and security.

- Operating Systems: General-purpose operating systems for desktops and servers are in this class because of their central role in any computing environment.

- Password Managers: Any software designed specifically for storing and managing credentials is also considered a Class I critical product.

For these products, you have a choice: follow a harmonised standard and perform a self-assessment, or go through a third-party conformity assessment carried out by a Notified Body.

The Highest Risk Level: Class II

Class II Critical Products represent the highest risk category, period. These are products where a failure could lead to severe consequences, hitting critical infrastructure, public safety, or causing major economic disruption.

For instance, an Industrial Control System (ICS) managing a power grid or a railway switching system is a clear-cut Class II product. A compromise there could have immediate, dangerous real-world effects. Likewise, the core hardware security modules (HSMs) used to protect financial transactions or network-connected robots used in surgery also fall into this top tier.

For Class II, there is no option for self-assessment. Mandatory third-party conformity assessment by a Notified Body is the only route. This is a rigorous audit where an independent, government-appointed organisation scrutinises your product and processes to certify they meet the CRA’s strictest requirements. It provides the highest level of assurance but also demands the most significant investment in both time and resources.
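The mapping from risk class to assessment route described in this section can be captured in a few lines. This is a minimal Python sketch of the rules as stated in this article, with hypothetical names; it is not a reading of the legal text itself.

```python
from enum import Enum


class RiskClass(Enum):
    DEFAULT = "default"
    CLASS_I = "class_i"
    CLASS_II = "class_ii"


SELF_ASSESSMENT = "self-assessment"
NOTIFIED_BODY = "notified body assessment"


def conformity_routes(risk_class: RiskClass,
                      applies_harmonised_standard: bool = False) -> list[str]:
    """Return the assessment routes open to a product, per the rules above."""
    if risk_class is RiskClass.DEFAULT:
        return [SELF_ASSESSMENT]
    if risk_class is RiskClass.CLASS_I:
        # Self-assessment is only available when a harmonised standard is followed.
        if applies_harmonised_standard:
            return [SELF_ASSESSMENT, NOTIFIED_BODY]
        return [NOTIFIED_BODY]
    # Class II: third-party assessment is the only route.
    return [NOTIFIED_BODY]
```

Encoding the rule once makes it harder for a product team to assume self-assessment is available when it isn't.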

Translating CRA Requirements Into Engineering Tasks

The biggest hurdle for most organisations isn’t understanding the Cyber Resilience Act’s legal text; it’s turning it into actual engineering work. This is where the theory stops and the real work begins. We’ll map the essential security requirements from Annex I of the CRA directly onto the development and operational workflows your teams use every day.

Think of this as a practical translator. It takes the high-level legal obligations and turns them into a task-level roadmap. Your product security, engineering, and DevOps teams get a clear set of tickets they can drop right into their backlog and start executing. After all, the European Commission provides the framework, but your technical teams are the ones who have to build it.

From Secure by Default to Developer Checklists

“Secure by default” is a core principle of the CRA, but it’s far too abstract for a developer to act on. You can’t just assign a ticket that says “make it secure.” The real goal is to break this principle down into a concrete checklist that becomes a non-negotiable part of your development process.

Let’s take a connected camera manufacturer as a real-world example. “Secure by default” doesn’t mean much, but these specific engineering tasks do:

- Disable All Non-Essential Ports: When the device comes out of the box, a network scan should only show ports that are absolutely essential for its main function. For instance, only port 443 for HTTPS communication should be open. Any development, testing, or management ports like Telnet (port 23) or FTP (port 21) must be completely disabled in the production firmware.

- Enforce Strong Initial Passwords: The camera can’t ship with default credentials like “admin/admin.” It must force the user to create a unique, complex password (e.g., minimum 12 characters, including upper/lower case, numbers, and symbols) during the initial setup. No exceptions.

- Implement Brute-Force Protection: If someone tries to log in and fails five consecutive times, the device’s login interface has to temporarily lock out that IP address for at least five minutes.

When you break it down like this, you create verifiable tasks. They can be assigned, tracked, and, most importantly, tested. This also generates the exact evidence you’ll need for your technical file later on.
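As an illustration, the three camera tasks above translate into checks a test suite can run. The Python sketch below is an assumption-laden example: the thresholds (port 443 only, 12-character passwords, five failures, five-minute lockout) come from the checklist, while the function and class names are hypothetical. Timestamps are passed in explicitly so the lockout logic is easy to test.

```python
import string

ALLOWED_PORTS = {443}  # HTTPS only, per the checklist above


def unexpected_ports(open_ports: set[int]) -> set[int]:
    """Ports found by a scan that production firmware should not expose."""
    return set(open_ports) - ALLOWED_PORTS


def password_is_acceptable(password: str) -> bool:
    """Minimum 12 characters with upper case, lower case, a digit and a symbol."""
    return (
        len(password) >= 12
        and any(c.islower() for c in password)
        and any(c.isupper() for c in password)
        and any(c.isdigit() for c in password)
        and any(c in string.punctuation for c in password)
    )


class LoginThrottle:
    """Locks out an IP address for five minutes after five consecutive failures."""

    MAX_FAILURES = 5
    LOCKOUT_SECONDS = 300

    def __init__(self) -> None:
        self._failures: dict[str, int] = {}
        self._locked_until: dict[str, float] = {}

    def is_locked(self, ip: str, now: float) -> bool:
        return now < self._locked_until.get(ip, 0.0)

    def record_failure(self, ip: str, now: float) -> None:
        count = self._failures.get(ip, 0) + 1
        if count >= self.MAX_FAILURES:
            self._locked_until[ip] = now + self.LOCKOUT_SECONDS
            count = 0  # start counting afresh once the lockout is set
        self._failures[ip] = count

    def record_success(self, ip: str) -> None:
        # A successful login resets the consecutive-failure counter.
        self._failures.pop(ip, None)
```

Running checks like these in CI against every firmware build gives you both a regression guard and a dated record for the technical file.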

Mapping Vulnerability Handling to Operational Workflows

Another huge part of the CRA is vulnerability handling. The regulation demands a structured process for receiving, fixing, and reporting security flaws. For your teams, this means building an operational workflow that is both robust and repeatable.

A requirement for a ‘vulnerability disclosure policy’ isn’t just about writing a document—it’s about defining a live process. You need a dedicated security contact, a clear system for triaging incoming reports, and established communication channels.

So, how does an obligation to “manage vulnerabilities” look in practice? It becomes a series of well-defined operational steps:

- Establish a Public Security Contact: Create a simple, easy-to-find security@yourcompany.com email address and a /security webpage, so security researchers know exactly where to send their findings. This is your front door.

- Develop an Internal Triage System: When a report arrives, it should automatically create a ticket in a project management tool (like Jira or Azure DevOps) and assign it to a security engineer for initial validation within one business day.

- Define Remediation Timelines: Set internal service-level agreements (SLAs) for fixing vulnerabilities based on severity. For a smart thermostat manufacturer, a critical vulnerability allowing remote takeover might demand a patch within 14 days, while a low-risk UI bug can be slated for the next quarterly release cycle.

- Structure the Coordinated Disclosure Process: Once a fix is ready, you need a documented plan. This covers how you notify the original researcher, publish a security advisory with a CVE identifier, and push the update out to customers. This process must be consistent every single time.
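The remediation timelines in the steps above are easy to encode so that every ticket gets a due date the moment it is triaged. In this minimal Python sketch, only the 14-day figure for critical issues comes from the example above; the other values and all names are hypothetical placeholders you would replace with your own policy.

```python
from datetime import date, timedelta

# Only the "critical" value comes from the article; the rest are placeholders.
REMEDIATION_SLA_DAYS = {
    "critical": 14,
    "high": 30,
    "medium": 60,
    "low": 90,  # roughly the next quarterly release
}


def patch_due_date(reported_on: date, severity: str) -> date:
    """Return the date by which a fix should ship under the internal SLA."""
    try:
        days = REMEDIATION_SLA_DAYS[severity.lower()]
    except KeyError:
        raise ValueError(f"unknown severity: {severity!r}") from None
    return reported_on + timedelta(days=days)


due = patch_due_date(date(2026, 3, 2), "critical")
```

Keeping the SLA table in one place means the policy document, the ticketing automation, and the audit evidence all point at the same numbers.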

For a much deeper look into setting up these processes, our guide on building a CRA secure development lifecycle offers more detailed steps and context.

These workflows aren’t just best practices; they are mandatory requirements under the CRA and will be scrutinised during an audit. Failing to get this right can lead to major compliance failures, even if your product is otherwise secure. This methodical approach is how you turn legal language into engineering reality.

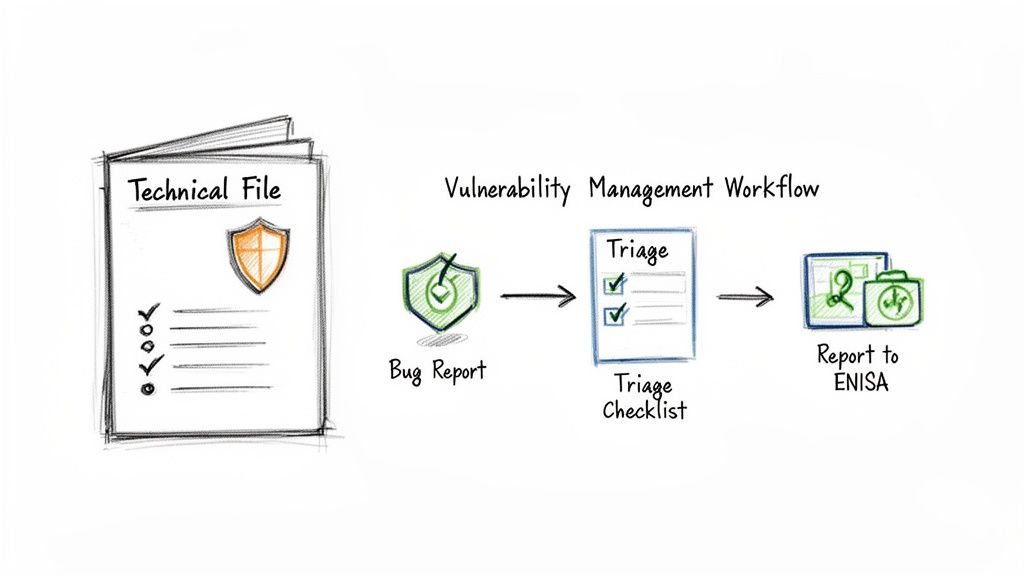

Building Your Technical File and Vulnerability Management Process

The Cyber Resilience Act boils down to two concrete outputs that will become your proof of compliance: a comprehensive Technical File and a systematic vulnerability management process. Think of the Technical File as your product’s compliance biography—a single source of truth that demonstrates how you’ve met every single requirement. Your vulnerability management process, on the other hand, is the living, breathing system that keeps the product secure long after it ships.

These two elements are precisely what market surveillance authorities will ask for during an audit. Without them, even a technically flawless product will fail its CRA assessment. Getting them right isn’t about ticking boxes; it’s about creating a bulletproof, auditable trail that proves you’ve done your due diligence.

Structuring Your CRA Technical File

Annex VII of the CRA spells out the mandatory contents of your Technical File. This isn’t a marketing brochure; it’s a detailed, evidence-based dossier. Your entire goal here is to make it incredibly easy for an auditor to connect each legal requirement to a specific piece of evidence in your file.

A practical way to organise this is to create a master document that indexes all the required components. Here’s a blueprint for its structure:

- General Product Description: The product’s name (e.g., “SmartHome Hub Model X1”), version (e.g., Firmware v2.1.3), intended use, and a clear, concise description of its core functionalities.

- Cybersecurity Risk Assessment: The full report detailing how you identified threats (e.g., “Man-in-the-middle attacks on local network”), assessed risks, and chose your mitigation measures (“Implement TLS 1.3 for all communications”).

- List of Applied Standards: A detailed list of every harmonised standard (e.g., “ETSI EN 303 645”), common specification, or other technical solution you used to meet Annex I requirements.

- Software Bill of Materials (SBOM): A complete and accurate inventory of all third-party and open-source components (e.g., openssl-3.0.2, mosquitto-2.0.14) baked into your product, generated in a standard format like SPDX or CycloneDX.

- Source Code or Documentation: For critical products, you may need to provide a Notified Body with access to source code or extensive technical documentation for review.

- EU Declaration of Conformity: A copy of the formal declaration you sign, attesting to the product’s compliance.

This structure provides a clear, logical narrative for auditors, showing them exactly how your product was designed, developed, and tested with security at its core. To ensure robust cybersecurity and the kind of vulnerability management the CRA requires, it is crucial to understand and implement the latest in API Security Best Practices, as APIs are often a primary attack vector.
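To show what the SBOM entry in the blueprint looks like in practice, here is a minimal Python sketch that writes a CycloneDX-style JSON document for the two example components. It only illustrates the general shape of the format. In a real project the SBOM should be generated by build tooling rather than by hand, and the function name and the chosen spec version are assumptions.

```python
import json


def build_sbom(components: list[tuple[str, str]]) -> dict:
    """Build a bare-bones CycloneDX-style SBOM from (name, version) pairs."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",  # assumed version for illustration
        "version": 1,
        "components": [
            {"type": "library", "name": name, "version": version}
            for name, version in components
        ],
    }


sbom = build_sbom([("openssl", "3.0.2"), ("mosquitto", "2.0.14")])
sbom_json = json.dumps(sbom, indent=2)
```

A production SBOM would normally also carry identifiers such as package URLs and hashes so that each component can be matched against vulnerability databases.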

Building a Compliant Vulnerability Workflow

Beyond just the documentation, the CRA mandates a robust, active process for handling vulnerabilities. This is much more than just patching bugs as they pop up. It’s about having a documented, repeatable, and timely workflow for every single security report you receive. This process must cover everything from the initial intake to reporting the issue to the EU’s cybersecurity agency, ENISA.

Let’s walk through a tangible scenario. Imagine you’re a manufacturer of a smart home hub, and a security researcher drops a vulnerability report into your inbox.

Your documented workflow should kick in immediately:

- Receipt and Triage: The report lands at your public security@ address. An internal ticket is automatically created and assigned to the product security team. Within 24 hours, the team validates whether the vulnerability is genuine and exploitable.

- Severity Assessment: Using a framework like the Common Vulnerability Scoring System (CVSS), the team classifies the bug’s severity. They quickly determine it’s a critical remote code execution flaw, giving it a score of 9.8.

- ENISA Reporting: Because the vulnerability is being actively exploited in the wild, you must notify ENISA within 24 hours of becoming aware of it. You’ll use ENISA’s designated portal to submit an initial report.

- Remediation and Patching: The engineering team gets to work on a patch. For a critical flaw like this, your internal SLA should mandate that a fix is developed and tested within a short timeframe, say 7 days.

- Coordinated Disclosure: Once the patch is ready, you coordinate with the original researcher. You agree on a public disclosure date, publish a clear security advisory on your website, and push the firmware update automatically to all connected hubs.

This entire workflow—from the first email to the final update—must be documented. Timestamps, key decisions, and every communication create the auditable evidence that proves you are meeting your post-market surveillance obligations under the CRA.
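One lightweight way to capture that evidence is an append-only event log per vulnerability case. The Python sketch below is a hypothetical illustration: the class, the step names, and the sample timestamps are invented for the example, and a real system would persist the log somewhere tamper-evident.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class VulnerabilityCase:
    case_id: str
    events: list = field(default_factory=list)

    def log(self, step: str, note: str, at: Optional[datetime] = None) -> None:
        """Append a timestamped entry; defaults to the current UTC time."""
        at = at or datetime.now(timezone.utc)
        self.events.append({"step": step, "note": note, "at": at.isoformat()})

    def first_time(self, step: str) -> datetime:
        """Timestamp of the first event recorded for a given step."""
        for event in self.events:
            if event["step"] == step:
                return datetime.fromisoformat(event["at"])
        raise KeyError(step)

    def hours_between(self, start_step: str, end_step: str) -> float:
        """Elapsed hours between two steps, e.g. receipt and authority report."""
        delta = self.first_time(end_step) - self.first_time(start_step)
        return delta.total_seconds() / 3600


case = VulnerabilityCase("HUB-2026-001")
case.log("receipt", "Report received at security@ address",
         at=datetime(2026, 9, 14, 8, 0, tzinfo=timezone.utc))
case.log("enisa_report", "Initial report submitted",
         at=datetime(2026, 9, 14, 20, 0, tzinfo=timezone.utc))
elapsed = case.hours_between("receipt", "enisa_report")
```

Being able to compute the gap between two steps directly from the log is what turns "we reported in time" from a claim into evidence.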

The European Commission is still releasing guidance to clarify these obligations. For instance, on 3 March 2026, it unveiled draft guidance to help manufacturers, especially small and medium-sized enterprises, understand their responsibilities. This guidance addresses how to handle legacy products and even allows for cost-saving measures like grouped testing for similar product families, which is a significant relief for teams with limited resources. You can discover more insights about these draft guidelines on Hunton.com. This ongoing support from the Commission is vital for navigating the practicalities of CRA implementation.

Of all the questions we see from product teams digging into the Cyber Resilience Act, a few pop up again and again. It’s understandable—the official guidance from the European Commission can be dense, and getting clear answers on these recurring pain points is key to building a compliance plan you can trust.

This section tackles the most frequent queries head-on, giving you practical answers to cut through the complexity and avoid common missteps.

What Happens to Products Already on the Market?

One of the most pressing questions is about legacy products. What happens if your product was already on the EU market before the full application date of 11 December 2027?

The short answer is that these products generally are not subject to the CRA’s requirements. But there’s a huge catch you need to be aware of: the “substantial modification” clause.

The European Commission’s guidance is clear that a substantial modification is any change to software, firmware, or hardware that meaningfully alters the product’s original intended purpose, functionality, or security posture. If a change introduces new threat vectors or materially expands the attack surface, it’s almost certainly substantial.

Let’s look at a practical example to make this concrete:

- Not a Substantial Modification: You find a buffer overflow vulnerability in your smart camera’s firmware. You issue a patch that fixes the bug but adds no new features. This is just routine maintenance and does not drag your legacy product into CRA compliance.

- A Substantial Modification: You decide to update that same camera with a new cloud storage integration and AI-powered person detection. This fundamentally changes the product’s function and introduces new data processing risks and network connections. This is a substantial modification, and the product is now treated as “newly placed on the market,” which means it needs to be fully CRA compliant.

It’s absolutely essential to meticulously document every change made to a legacy product and run a formal impact assessment. This creates an auditable trail that proves why a change was or was not considered substantial, protecting you from accidentally falling into non-compliance.
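A structured change record makes that impact assessment repeatable. The following Python sketch is a rough illustration built on the criteria named above (intended purpose, functionality, new threat vectors, attack surface). The field names are hypothetical, and the result is a prompt for review rather than a legal determination.

```python
from dataclasses import dataclass


@dataclass
class ChangeAssessment:
    description: str
    changes_intended_purpose: bool
    adds_functionality: bool
    introduces_new_threat_vectors: bool
    expands_attack_surface: bool

    def is_likely_substantial(self) -> bool:
        """Flag the change for full review if any criterion is met."""
        return any((
            self.changes_intended_purpose,
            self.adds_functionality,
            self.introduces_new_threat_vectors,
            self.expands_attack_surface,
        ))


security_patch = ChangeAssessment(
    "Fix buffer overflow in camera firmware",
    changes_intended_purpose=False,
    adds_functionality=False,
    introduces_new_threat_vectors=False,
    expands_attack_surface=False,
)
feature_update = ChangeAssessment(
    "Add cloud storage integration and person detection",
    changes_intended_purpose=False,
    adds_functionality=True,
    introduces_new_threat_vectors=True,
    expands_attack_surface=True,
)
```

Storing one such record per release, alongside who signed it off, gives you the auditable trail described above.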

How the CRA Interacts with Other EU Laws

Another common source of confusion is how the CRA fits in with other major EU regulations like the General Data Protection Regulation (GDPR) and the AI Act. The key is to see them as complementary layers, not competing rules.

The CRA is a horizontal regulation. Think of it as setting a foundational layer of cybersecurity for all products with digital elements. It then works in tandem with other laws to create a more complete regulatory picture.

- With GDPR: The CRA provides the technical “secure by design and by default” foundation for GDPR. For example, if your product is a health-tracking wearable that collects heart rate data (personal data), GDPR requires you to protect that data. The CRA mandates specific technical controls, like encryption of data at rest and in transit, which directly support GDPR’s requirement to keep personal data secure.

- With the AI Act: If you’re building a product that’s also an AI system (like an AI-powered medical device), you have to meet the requirements of both. The AI Act will govern the safety and transparency of the AI model itself (e.g., ensuring it is not biased), while the CRA mandates the cybersecurity of the underlying digital product it runs on (e.g., protecting it from being tampered with).

Your conformity assessment has to be consolidated. This means your single EU Declaration of Conformity must list all applicable laws and attest that your product complies with every single one.

Support for Smaller Companies and SMEs

The European Commission’s guidance explicitly acknowledges that this compliance journey can be tough for small and medium-sized enterprises (SMEs). To help, the CRA includes several practical measures designed to lower the barrier to entry for smaller organisations.

One of the most useful concessions is allowing businesses to group similar products into families for conformity testing. For instance, if you produce a line of smart light bulbs that all share the same core firmware and connectivity module but just differ in shape or colour, you can likely assess them as a single product family. This dramatically cuts down on testing costs and administrative work.
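Grouping candidates for a product family can be found mechanically from your product catalogue. This is a minimal Python sketch assuming the two shared attributes from the light bulb example (core firmware and connectivity module). The dictionary keys and sample models are hypothetical, and whether a group genuinely qualifies as one family remains a judgment call.

```python
from collections import defaultdict


def group_into_families(products: list[dict]) -> dict:
    """Group models that share the same firmware and connectivity module."""
    families = defaultdict(list)
    for product in products:
        key = (product["firmware"], product["connectivity_module"])
        families[key].append(product["model"])
    return dict(families)


catalogue = [
    {"model": "Bulb A60 white", "firmware": "fw-2.1", "connectivity_module": "wifi-m1"},
    {"model": "Bulb A60 colour", "firmware": "fw-2.1", "connectivity_module": "wifi-m1"},
    {"model": "Light strip", "firmware": "fw-3.0", "connectivity_module": "wifi-m1"},
]
families = group_into_families(catalogue)
```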

The extended 36-month adaptation period and the future development of harmonised standards are also there to help. Once published, these standards will offer a “presumption of conformity,” giving SMEs a clear and straightforward checklist to follow to meet their legal obligations without having to interpret the law from scratch.

Regulus provides a streamlined software platform to help your team confidently navigate the Cyber Resilience Act. Our solution turns complex regulatory text into an actionable compliance plan, generating a tailored requirements matrix, technical file templates, and a step-by-step roadmap for 2025–2027. Gain clarity and reduce compliance costs by visiting https://goregulus.com.